Introduction

You have heard about artificial intelligence everywhere—from your phone’s facial recognition to chatbots that answer customer service queries. But have you ever wondered: how does AI actually work?

For many people, AI remains a mysterious black box. They know it can do impressive things, but the inner workings feel like magic. The truth is, AI is not magic—it is mathematics, data, and clever algorithms working together. And once you understand the basic principles, AI becomes far less intimidating and far more practical.

This article breaks down how AI works in simple terms, using real-life examples you encounter daily. Whether you are a complete beginner or someone who has read our previous guides and wants to go deeper, this explanation will give you a clear mental model of what happens when an AI system makes a decision, generates text, or recognizes your face.

For foundational concepts on what AI is and the types that exist, we recommend reading our companion guides: What is Artificial Intelligence? A Beginner’s Guide to Understanding AI in 2026 and Artificial Intelligence Definition and Examples for 2026. This article builds on those foundations by explaining the how behind the what.

Throughout, we will reference insights from industry leaders like Google, Microsoft, and OpenAI, and show how MHTECHIN helps individuals and organizations understand and implement AI systems that work.

Section 1: The Core Idea—AI Learns from Examples

1.1 The Fundamental Shift: Programming vs. Learning

To understand how AI works, you must first understand a fundamental shift in how we build software.

Traditional programming works like this: a human writes explicit rules, and the computer follows them. For example, to build a spam filter the traditional way, you might write rules like:

- If an email contains the word “viagra,” mark as spam

- If an email has more than five exclamation points, mark as spam

- If the sender is not in your contacts, mark as spam

This approach works for simple problems. But it fails when rules become too complex to write by hand. How do you write rules to recognize a cat in a photo? What are the rules for understanding sarcasm? You cannot define them explicitly.

Artificial intelligence flips this model. Instead of writing rules, you feed the system examples and let it discover the rules itself. The system learns from data.
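To make the contrast concrete, here is a minimal sketch in Python. The hand-written rule uses the five-exclamation-point threshold from the list above, while the learned version picks its own threshold from a handful of labeled examples. The example data and the function names (`rule_based_is_spam`, `learn_threshold`) are invented for illustration, and real spam filters use far richer features than a single count.

```python
# Toy data, invented for illustration: (number of exclamation points, is_spam).
examples = [(0, False), (1, False), (2, False), (3, True), (4, True), (7, True)]

def rule_based_is_spam(exclamations: int) -> bool:
    """Traditional programming: a human chose the threshold."""
    return exclamations > 5

def learn_threshold(data):
    """Learning: try every candidate threshold and keep the most accurate one."""
    best_threshold, best_correct = 0, -1
    for threshold in range(0, 10):
        correct = sum((count > threshold) == label for count, label in data)
        if correct > best_correct:
            best_threshold, best_correct = threshold, correct
    return best_threshold

learned = learn_threshold(examples)

def learned_is_spam(exclamations: int) -> bool:
    return exclamations > learned

def accuracy(classifier):
    return sum(classifier(c) == label for c, label in examples) / len(examples)

rule_accuracy = accuracy(rule_based_is_spam)
learned_accuracy = accuracy(learned_is_spam)
print(learned, rule_accuracy, learned_accuracy)
```

On this toy data the learned threshold fits the examples better than the hand-picked one, which is the whole point of the shift: the rule comes from the data, not from the programmer.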

1.2 The Three Ingredients of AI

Every AI system, regardless of how sophisticated, relies on three core ingredients:

Data. AI learns from examples. The more high-quality examples, the better the system performs. For a face recognition system, you need thousands of labeled face images. For a language model, you need trillions of words from books, websites, and documents.

Algorithms. These are mathematical recipes that find patterns in data. Different algorithms work for different problems—some excel at finding patterns in images, others at understanding sequences like language or time-series data.

Computing Power. Training AI models requires enormous computational resources. Modern AI uses specialized hardware—graphics processing units (GPUs) and tensor processing units (TPUs)—that can process massive amounts of data in parallel.

1.3 Training vs. Inference: Two Phases of AI

AI systems operate in two distinct phases:

Training. This is the learning phase. The AI model processes vast amounts of data, adjusting internal parameters to get better at the task. Training can take days or weeks and requires significant computing power.

Inference. This is the using phase. Once trained, the model can make predictions on new, unseen data. Inference is fast by comparison, typically taking milliseconds to a few seconds. This is what you experience when you ask ChatGPT a question or unlock your phone with your face.

Think of training as studying for an exam and inference as taking the exam. All the hard work happens during training; inference is fast and efficient.

Section 2: How Machine Learning Works—The Engine of AI

2.1 What Machine Learning Actually Does

Machine learning is the engine that powers modern AI. At its simplest, machine learning is the process of finding patterns in data and using those patterns to make predictions.

Imagine you are trying to predict house prices. You collect data on hundreds of houses: square footage, number of bedrooms, location, age, and the price they sold for. A machine learning algorithm analyzes this data and finds relationships—perhaps larger houses tend to sell for more, and location matters even more than size. The result is a model that can predict the price of a new house based on its features.
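The house-price idea can be sketched with the simplest possible model: a straight line fitted to one feature. The snippet below assumes only square footage is available and uses four invented data points; a real model would use many features (bedrooms, location, age) and far more data. The function names are illustrative, not from any particular library.

```python
# Toy data, invented for illustration: (square footage, sale price in dollars).
houses = [(1000, 200_000), (1500, 300_000), (2000, 400_000), (2500, 500_000)]

def fit_line(data):
    """Ordinary least squares for one feature: price = slope * size + intercept."""
    n = len(data)
    mean_x = sum(x for x, _ in data) / n
    mean_y = sum(y for _, y in data) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in data)
    variance = sum((x - mean_x) ** 2 for x, _ in data)
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    return slope, intercept

slope, intercept = fit_line(houses)

def predict_price(square_feet):
    """Use the learned relationship to price a house the model has never seen."""
    return slope * square_feet + intercept

print(predict_price(1800))
```

The "model" here is just two numbers, `slope` and `intercept`. Larger models work the same way in spirit, only with millions or billions of such numbers.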

2.2 How a Machine Learning Model Learns

The learning process follows a consistent pattern:

Step 1: Start with random guesses. Initially, the model has no knowledge. It makes random predictions.

Step 2: Measure the error. The model compares its prediction to the actual answer and calculates how wrong it was. This is called the loss.

Step 3: Adjust slightly. The model makes tiny adjustments to its internal parameters to reduce the error.

Step 4: Repeat thousands or millions of times. The model iterates through this cycle repeatedly, gradually becoming more accurate.

This process is called gradient descent. Think of it as a blindfolded person trying to find the bottom of a valley by feeling the slope beneath their feet and taking small steps downhill. The slope (the gradient of the loss) tells the model which direction reduces the error, and each small step usually brings it closer to a low point.
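The four steps can be sketched in a few lines of Python. This example assumes a model with a single parameter `w` that predicts `y = w * x`, and toy data generated with `w = 2`. The starting guess is fixed at zero rather than random so the run is reproducible; the learning rate and step count are arbitrary choices for illustration.

```python
xs = [1.0, 2.0, 3.0, 4.0]
ys = [2.0, 4.0, 6.0, 8.0]  # toy data generated by y = 2 * x

def loss(w):
    """Step 2: mean squared error between predictions and true answers."""
    return sum((w * x - y) ** 2 for x, y in zip(xs, ys)) / len(xs)

def gradient(w):
    """Slope of the loss with respect to w (which way is downhill)."""
    return sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / len(xs)

w = 0.0               # Step 1: an uninformed starting guess
learning_rate = 0.01  # how big each downhill step is
losses = []

for step in range(100):                # Step 4: repeat
    losses.append(loss(w))             # Step 2: measure the error
    w -= learning_rate * gradient(w)   # Step 3: adjust slightly

print(round(w, 4), losses[0], losses[-1])
```

After 100 small steps, `w` ends up very close to 2, the value that generated the data, and the loss has dropped from its starting value to nearly zero.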

2.3 Supervised, Unsupervised, and Reinforcement Learning

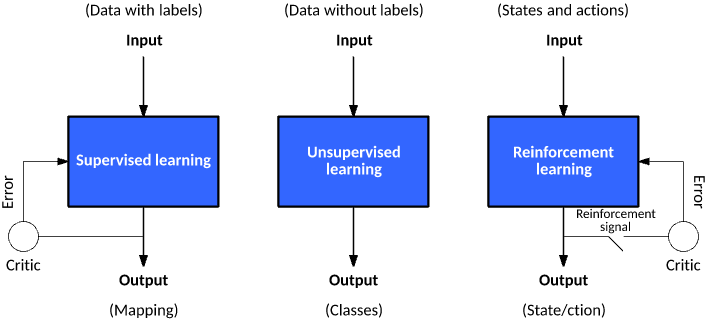

Machine learning comes in different flavors, depending on the type of data and the problem.

Supervised learning uses labeled data—examples where the answer is already known. Training a model to recognize cats in photos requires thousands of images labeled “cat” or “not cat.” This is the most common form of machine learning.

Unsupervised learning finds patterns in unlabeled data. The system looks for natural groupings or structures. Customer segmentation—grouping customers with similar buying habits—is a classic example.

Reinforcement learning learns through trial and error. The system takes actions in an environment and receives rewards or penalties, and it learns to maximize reward over time. Reinforcement learning was central to how AlphaGo learned to beat world champions at Go, and it is how many autonomous systems learn to navigate complex environments.
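Trial-and-error learning can be illustrated with a very small reinforcement learning setup, often called a multi-armed bandit. In this sketch the "environment" is three actions with hidden average rewards, and the learner uses an epsilon-greedy strategy: mostly pick the action that looks best so far, occasionally try a random one. The reward values, the noise range, and the `epsilon` setting are all invented for illustration; systems like AlphaGo are vastly more complex.

```python
import random

rng = random.Random(0)
true_means = [1.0, 5.0, 2.0]  # hidden from the learner; action 1 is actually best

def pull(action):
    """Environment: return a noisy reward for the chosen action."""
    return true_means[action] + rng.uniform(-0.5, 0.5)

estimates = [0.0, 0.0, 0.0]  # learner's running average reward per action
counts = [0, 0, 0]

def update(action, reward):
    counts[action] += 1
    estimates[action] += (reward - estimates[action]) / counts[action]

# Try every action once so each estimate starts from real experience.
for action in range(3):
    update(action, pull(action))

epsilon = 0.1  # fraction of the time spent exploring
for _ in range(500):
    if rng.random() < epsilon:
        action = rng.randrange(3)                              # explore
    else:
        action = max(range(3), key=lambda a: estimates[a])     # exploit
    update(action, pull(action))

best_action = max(range(3), key=lambda a: estimates[a])
print(best_action, counts)
```

The learner is never told which action is best. It discovers that from rewards alone, and ends up choosing action 1 most of the time.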

Section 3: How Neural Networks and Deep Learning Work

3.1 The Brain-Inspired Architecture

Neural networks are the foundation of deep learning—the technology behind today’s most impressive AI achievements. They are loosely inspired by how neurons work in the human brain.

A neural network consists of layers of interconnected nodes (called neurons). Information flows through the network, with each neuron performing a simple calculation and passing its output to the next layer.

Input layer: Receives the raw data—pixels of an image, words in a sentence, numbers in a spreadsheet.

Hidden layers: Process the information. Each layer learns to recognize increasingly complex patterns. In an image recognition network, early layers might detect edges, middle layers detect shapes like eyes or wheels, and later layers detect complete objects like faces or cars.

Output layer: Produces the final result—a classification, a prediction, or generated text.
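The three kinds of layers can be sketched as a single forward pass through a tiny network. This example assumes two inputs, two hidden neurons, and one output, with weights chosen by hand so the arithmetic is easy to follow. In a real network the weights are learned during training, and there are usually many more neurons. The activation functions used here (ReLU and sigmoid) are common choices, not the only ones.

```python
import math

def relu(z):
    """Common hidden-layer activation: pass positives through, zero out negatives."""
    return max(0.0, z)

def sigmoid(z):
    """Squash any number into the range (0, 1), useful as a probability."""
    return 1.0 / (1.0 + math.exp(-z))

def forward(inputs, hidden_weights, hidden_biases, output_weights, output_bias):
    # Hidden layer: each neuron takes a weighted sum of the inputs, then applies ReLU.
    hidden = [
        relu(sum(w * x for w, x in zip(weights, inputs)) + bias)
        for weights, bias in zip(hidden_weights, hidden_biases)
    ]
    # Output layer: a weighted sum of the hidden values, squashed by a sigmoid.
    z = sum(w * h for w, h in zip(output_weights, hidden)) + output_bias
    return hidden, sigmoid(z)

hidden, output = forward(
    inputs=[1.0, 2.0],                        # input layer: the raw data
    hidden_weights=[[0.5, -1.0], [1.0, 1.0]],
    hidden_biases=[0.0, -1.0],
    output_weights=[1.0, 0.5],
    output_bias=-1.0,
)
print(hidden, output)  # [0.0, 2.0] 0.5
```

Each neuron does nothing more than multiply, add, and apply a simple function. The power comes from stacking many of them and learning good weights.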

3.2 Why “Deep” Matters

Deep learning refers to neural networks with many hidden layers—sometimes dozens or even hundreds. The depth allows the network to learn hierarchical representations of data.

Consider a facial recognition system. The first layer might detect edges and simple patterns. The second layer combines edges into features like eyes and noses. The third layer assembles features into complete faces. Deeper layers learn increasingly abstract and subtle patterns, making the system more accurate and robust.

3.3 How a Neural Network Learns

When a neural network makes a prediction, the error is calculated at the output. Then, the network works backwards—layer by layer—to determine how much each neuron contributed to the error. Each neuron’s parameters are adjusted slightly to reduce future errors. This process is called backpropagation, and it is the mathematical engine that makes deep learning possible.
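Backpropagation can be shown on the smallest network that still has a hidden layer: one input, one hidden neuron, one output. The sketch below assumes a `tanh` activation and a squared-error loss, with starting weights picked arbitrarily. It computes the error at the output, passes it backwards through each step of the forward calculation using the chain rule, and then nudges every parameter. This is a teaching sketch, not how production frameworks are written; they compute these gradients automatically.

```python
import math

def forward(params, x):
    w1, b1, w2, b2 = params
    h = math.tanh(w1 * x + b1)  # hidden neuron
    y = w2 * h + b2             # output neuron
    return h, y

def loss_fn(params, x, target):
    _, y = forward(params, x)
    return 0.5 * (y - target) ** 2

def backprop(params, x, target):
    """Return the gradient of the loss with respect to each parameter."""
    w1, b1, w2, b2 = params
    h, y = forward(params, x)
    d_y = y - target           # error at the output
    d_w2 = d_y * h             # output weight's share of the error
    d_b2 = d_y
    d_h = d_y * w2             # error passed back to the hidden neuron
    d_z = d_h * (1.0 - h * h)  # through the tanh activation
    d_w1 = d_z * x             # hidden weight's share of the error
    d_b1 = d_z
    return [d_w1, d_b1, d_w2, d_b2]

params = [0.5, 0.0, -0.3, 0.1]  # arbitrary starting values
x, target = 1.0, 1.0
initial_loss = loss_fn(params, x, target)

for _ in range(100):
    grads = backprop(params, x, target)
    params = [p - 0.1 * g for p, g in zip(params, grads)]  # small adjustment

final_loss = loss_fn(params, x, target)
print(initial_loss, final_loss)
```

Notice that `d_h` is computed from `d_y`: the hidden neuron's blame depends on the output's error. That backwards flow of blame is what gives backpropagation its name.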

Section 4: How Large Language Models (LLMs) Work

4.1 The Simple Task Behind Smart Chatbots

Large language models, such as ChatGPT, Gemini, and Claude, seem almost magical. They write essays, answer questions, and even generate code. But behind the scenes, they are doing something surprisingly simple: predicting the next word (more precisely, the next token, which may be a whole word or a fragment of one).

During training, an LLM processes trillions of words from books, websites, and documents. For each sequence of words, it learns to predict what word comes next. If the training text is “The cat sat on the…,” the model learns that “mat” is a likely completion.

Through this simple task, the model implicitly learns grammar, facts, reasoning patterns, and even style. By predicting the next word repeatedly, it learns to generate coherent paragraphs, answer questions, and follow instructions.
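Next-word prediction can be demonstrated with a far simpler model than an LLM: one that just counts which word follows which. The sketch below assumes a two-sentence toy corpus and looks only one word back, whereas a real LLM uses a neural network and considers thousands of preceding tokens. The function names are invented for illustration.

```python
from collections import Counter, defaultdict

# Toy training text, invented for illustration.
corpus = "the cat sat on the mat . the dog sat on the mat ."
words = corpus.split()

# "Training": count how often each word follows each other word.
next_word_counts = defaultdict(Counter)
for current, following in zip(words, words[1:]):
    next_word_counts[current][following] += 1

def predict_next(word):
    """Return the word seen most often after `word` in the training text."""
    return next_word_counts[word].most_common(1)[0][0]

def generate(start, length):
    """Generate text by repeatedly predicting the next word."""
    output = [start]
    for _ in range(length):
        output.append(predict_next(output[-1]))
    return " ".join(output)

print(predict_next("the"))   # mat
print(generate("cat", 4))    # cat sat on the mat
```

Even this crude counter produces a plausible sentence. Replace the counting table with a large neural network and the tiny corpus with trillions of words, and you have the basic recipe behind an LLM.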

4.2 The Transformer Architecture

The breakthrough that made modern LLMs possible is the transformer architecture, introduced by Google researchers in 2017. Attention mechanisms existed before then, but the transformer made attention the core of the architecture. Attention allows the model to weigh the importance of different words when making predictions.

For example, in the sentence “She gave her dog a treat because it was hungry,” the word “it” refers to “dog,” not “treat.” Attention mechanisms allow the model to track these relationships across long passages of text.
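The core calculation behind attention is short enough to sketch directly. In the example below, each word is represented by a small vector, and the word “it” asks a question (the query) that is compared against every other word (the keys). The vectors are hand-picked two-dimensional toys so that “dog” comes out as the best match. In a real transformer these vectors are learned, have hundreds or thousands of dimensions, and many attention calculations run in parallel.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    highest = max(scores)
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(query, keys, values):
    """Scaled dot-product attention for a single query."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(scores)
    dim = len(values[0])
    # The output is a blend of the values, weighted by relevance.
    output = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]
    return weights, output

tokens = ["she", "dog", "treat"]
keys = [[1.0, 0.0], [0.0, 2.0], [0.5, 0.5]]  # hand-picked toy vectors
values = keys                                 # reuse keys as values for simplicity
query_for_it = [0.0, 2.0]                     # toy query vector for the word "it"

weights, output = attention(query_for_it, keys, values)
most_attended = tokens[weights.index(max(weights))]
print(most_attended)  # dog
```

The weights tell the model how much of each word's information to mix into its understanding of “it”.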

4.3 From Next-Word Prediction to Conversation

After initial training, LLMs undergo additional steps to become useful conversational agents:

Fine-tuning. The model is trained on instruction data—examples of prompts and desired responses. This teaches it to follow instructions rather than just predict text.

Reinforcement learning from human feedback (RLHF). Human evaluators rank model outputs, and the model learns to produce responses that humans prefer. This step is a large part of why chatbots like ChatGPT tend to give helpful, appropriate answers rather than raw text continuations.

Context management. When you chat with an LLM, the system maintains a conversation history. Each new query includes the previous exchanges, allowing the model to maintain context across turns.
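Context management is the easiest of these steps to sketch in code. The example below assumes a conversation is stored as a list of messages, each with a `role` and `content`, which is a common convention but an assumption here. The `fake_model` function is a stand-in invented for illustration; a real application would call an actual LLM at that point.

```python
def fake_model(messages):
    """Stand-in for a real LLM call; it only reports how much context it saw."""
    return f"(reply based on {len(messages)} messages)"

class Conversation:
    def __init__(self):
        self.history = []  # every user and assistant message so far

    def ask(self, user_text):
        self.history.append({"role": "user", "content": user_text})
        # The whole history is sent each time, not just the newest message.
        reply = fake_model(self.history)
        self.history.append({"role": "assistant", "content": reply})
        return reply

chat = Conversation()
first = chat.ask("What is a neural network?")
second = chat.ask("Can you give an example?")
print(first)   # (reply based on 1 messages)
print(second)  # (reply based on 3 messages)
```

The model itself remembers nothing between calls. The second question only makes sense because the first exchange is sent along with it.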

Section 5: How Computer Vision Works

5.1 Seeing Like a Machine

Computer vision enables machines to interpret visual information. When you unlock your phone with facial recognition or when a self-driving car identifies pedestrians, computer vision is at work.

The challenge is immense. To a computer, an image is just a grid of numbers representing pixel colors. Recognizing objects requires finding patterns in these numbers.

5.2 Convolutional Neural Networks (CNNs)

The breakthrough for computer vision came with convolutional neural networks (CNNs). These networks are designed to process grid-like data, such as images, efficiently.

A CNN slides small filters across the image, detecting features like edges, corners, and textures. Early layers detect simple features, while deeper layers combine them into complex patterns. This hierarchical approach is loosely inspired by how the human visual system processes what it sees.
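Sliding a filter across an image is simple arithmetic, as this sketch shows. It assumes a tiny grayscale image that is dark on the left and bright on the right, and a hand-written 3x3 filter that responds to vertical edges. In a real CNN the filter values are learned during training rather than written by hand, and there are many filters per layer.

```python
# A 4x6 grayscale "image": 0 is dark, 1 is bright. Invented for illustration.
image = [
    [0, 0, 0, 1, 1, 1],
    [0, 0, 0, 1, 1, 1],
    [0, 0, 0, 1, 1, 1],
    [0, 0, 0, 1, 1, 1],
]

# A filter that responds when the right side is brighter than the left.
vertical_edge_filter = [
    [-1, 0, 1],
    [-1, 0, 1],
    [-1, 0, 1],
]

def convolve(img, kernel):
    """Slide the kernel over every position where it fully fits.

    As in most deep learning libraries, the kernel is applied without
    flipping (strictly speaking, this is cross-correlation).
    """
    k = len(kernel)
    out_rows = len(img) - k + 1
    out_cols = len(img[0]) - k + 1
    result = []
    for r in range(out_rows):
        row = []
        for c in range(out_cols):
            total = sum(
                img[r + i][c + j] * kernel[i][j]
                for i in range(k)
                for j in range(k)
            )
            row.append(total)
        result.append(row)
    return result

feature_map = convolve(image, vertical_edge_filter)
print(feature_map)  # [[0, 3, 3, 0], [0, 3, 3, 0]]
```

The output is large only where the filter straddles the dark-to-bright boundary and zero in the flat regions. That is what "detecting an edge" means in practice.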

5.3 How Facial Recognition Works

Facial recognition systems follow a sequence of steps:

First, the system detects faces in an image—finding the location of faces regardless of size or orientation. Then, it aligns the face, adjusting for tilt and rotation. Next, it extracts facial features, mapping the unique characteristics of the face—distances between eyes, shape of the jawline, contours of the nose. Finally, it creates a mathematical representation (called an embedding) that captures the unique features. When verifying identity, the system compares this embedding against stored representations of known faces.
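The final comparison step can be sketched on its own. The example below assumes the earlier steps have already turned each face into an embedding, here a made-up three-number vector (real embeddings typically have hundreds of dimensions). It compares a new embedding against stored ones using cosine similarity, one common choice of measure. The names, vectors, and the 0.9 threshold are all invented for illustration.

```python
import math

def cosine_similarity(a, b):
    """1.0 means the vectors point the same way; values near 0 mean unrelated."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Stored embeddings of known faces (made-up numbers).
known_faces = {
    "alice": [0.9, 0.1, 0.3],
    "bob": [0.1, 0.8, 0.5],
}

def identify(embedding, threshold=0.9):
    """Return the best-matching known person, or None if nobody is close enough."""
    best_name, best_score = None, threshold
    for name, known in known_faces.items():
        score = cosine_similarity(embedding, known)
        if score >= best_score:
            best_name, best_score = name, score
    return best_name

new_photo_of_alice = [0.85, 0.15, 0.35]  # slightly different lighting, same person
stranger = [0.3, 0.3, 0.9]

print(identify(new_photo_of_alice))  # alice
print(identify(stranger))            # None
```

The threshold controls the trade-off: set it higher and the system rejects more impostors but also more genuine users, set it lower and the reverse happens.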

Section 6: How Generative AI Creates New Content

6.1 Understanding Generative Models

Generative AI creates new content—text, images, audio, video—rather than just analyzing existing data. Instead of simply classifying or predicting, generative models learn the underlying patterns of their training data and use that knowledge to produce novel outputs.

Think of it this way: a discriminative model learns to distinguish between cats and dogs. A generative model learns what makes a cat a cat—and can then create new cat images that have never existed before.

6.2 How Image Generators Work

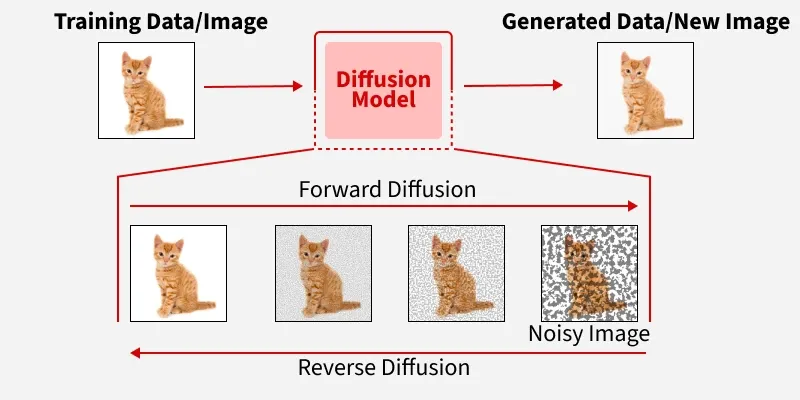

Image generation tools like Midjourney and DALL·E use a type of model called a diffusion model.

During training, the model learns to reverse a process of adding noise. The training data—millions of images—is gradually corrupted with random noise until it becomes pure static. The model learns to reverse this process: starting from random noise and gradually removing noise to form coherent images.

When you provide a text prompt, the model starts with random noise and iteratively refines it, guided by the text description. Each step brings the image closer to matching the prompt. The result is a completely new image that has never existed before.
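Only the first half of this process, adding noise, can be shown without a trained model, so that is what the sketch below does. It treats an "image" as a short list of pixel values and repeatedly mixes in a little random noise. The fixed noise amount `beta` and the step count are simplifying assumptions; real diffusion models vary the noise level from step to step according to a schedule. The reverse (denoising) direction requires a trained neural network and is not shown.

```python
import math
import random

rng = random.Random(42)
beta = 0.05   # fraction of noise mixed in at each step (assumed constant here)
steps = 200

def add_noise_step(pixels):
    """One forward step: shrink the signal slightly and add Gaussian noise."""
    keep = math.sqrt(1.0 - beta)
    noise_scale = math.sqrt(beta)
    return [keep * p + noise_scale * rng.gauss(0.0, 1.0) for p in pixels]

# A toy 8-pixel "image" with a clear alternating pattern.
image = [1.0, -1.0, 1.0, -1.0, 1.0, -1.0, 1.0, -1.0]

noisy = image
for _ in range(steps):
    noisy = add_noise_step(noisy)

# How much of the original image is still present after all the steps.
signal_remaining = math.sqrt((1.0 - beta) ** steps)
print(signal_remaining)
```

After 200 steps less than 1% of the original signal remains, so the result is effectively pure static. Training teaches a network to undo one such step at a time, which is what lets it later start from static and work backwards to an image.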

6.3 How Code Generation Works

Code generation tools like GitHub Copilot are trained on billions of lines of code from public repositories. The model learns programming syntax, common patterns, and even the structure of entire projects.

When a developer starts typing, the model analyzes the context—the programming language, file structure, surrounding code, and comments—and predicts what code comes next. It can generate entire functions, write unit tests, and suggest bug fixes, dramatically accelerating development.

As OpenAI’s chief scientist Jakub Pachocki noted, technical staff now manage “groups of Codex agents” rather than editing code directly—a testament to how effectively these systems work.

Section 7: Real-Life Use Cases—How AI Works in Action

7.1 How Your Email Spam Filter Works

Every time you receive an email, your spam filter makes a decision. Here is how it works:

The filter has been trained on millions of emails labeled “spam” or “not spam.” It has learned subtle patterns—not just obvious keywords like “viagra,” but combinations of features: sender reputation, email structure, link patterns, and language style.

When a new email arrives, the model analyzes these features and calculates a probability that it is spam. If the probability exceeds a threshold, it goes to the spam folder. The system continues learning—if you mark a message as spam or move it to your inbox, that feedback is used to refine future predictions.
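The "probability plus threshold" decision can be sketched as follows. This example assumes a simple logistic model with three features. The feature names, weights, and bias are invented for illustration, whereas a trained filter would learn its weights from millions of labeled emails and use many more features.

```python
import math

# Invented weights: how strongly each feature pushes toward "spam".
weights = {
    "suspicious_links": 1.5,
    "unknown_sender": 1.0,
    "exclamation_points": 0.3,
}
bias = -3.0  # most email is not spam, so the starting score is negative

def spam_probability(features):
    """Combine weighted features into a probability between 0 and 1."""
    score = bias + sum(weights[name] * value for name, value in features.items())
    return 1.0 / (1.0 + math.exp(-score))

def route(features, threshold=0.5):
    """Send the email to the spam folder only if the probability is high enough."""
    return "spam" if spam_probability(features) > threshold else "inbox"

newsletter = {"suspicious_links": 0, "unknown_sender": 1, "exclamation_points": 1}
scam = {"suspicious_links": 2, "unknown_sender": 1, "exclamation_points": 6}

print(route(newsletter))  # inbox
print(route(scam))        # spam
```

No single feature decides the outcome. An unknown sender alone is not enough, but combined with suspicious links and a burst of exclamation points, the probability crosses the threshold.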

7.2 How Netflix Recommends What to Watch

Netflix’s recommendation engine works by analyzing patterns across millions of users. It looks at what you have watched, what you rated highly, when you watch, and how long you watch. It also considers what similar users enjoyed.

The system does not simply recommend popular content—it predicts what you will enjoy based on your unique patterns. The model learns complex relationships: perhaps fans of science fiction who also enjoy Korean dramas are likely to enjoy certain titles. These patterns are discovered automatically from data, not programmed by humans.
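The "similar users" idea can be sketched with a classic technique called collaborative filtering. This is a generic textbook approach under invented data, not a description of Netflix's actual system, which is far more elaborate. The users, titles, and ratings below are made up. Ratings are on a 1 to 5 scale and are shifted so that 3 is neutral, which lets dislikes count as negative evidence.

```python
import math

# Invented ratings on a 1-5 scale.
ratings = {
    "you": {"Sci-Fi Saga": 5, "Korean Drama": 4},
    "ana": {"Sci-Fi Saga": 5, "Korean Drama": 5, "Space Heist": 5, "Cooking Show": 1},
    "ben": {"Sci-Fi Saga": 1, "Korean Drama": 2, "Space Heist": 1, "Cooking Show": 5},
}

def centered(user):
    """Shift ratings so 3 (neutral) becomes 0; likes are positive, dislikes negative."""
    return {title: rating - 3 for title, rating in ratings[user].items()}

def similarity(a, b):
    """Cosine similarity over the titles both users have rated."""
    ra, rb = centered(a), centered(b)
    shared = set(ra) & set(rb)
    if not shared:
        return 0.0
    dot = sum(ra[t] * rb[t] for t in shared)
    norm_a = math.sqrt(sum(ra[t] ** 2 for t in shared))
    norm_b = math.sqrt(sum(rb[t] ** 2 for t in shared))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

def recommend(user):
    """Score unseen titles by what similar (and dissimilar) users thought of them."""
    seen = set(ratings[user])
    scores = {}
    for other in ratings:
        if other == user:
            continue
        sim = similarity(user, other)
        for title, value in centered(other).items():
            if title not in seen:
                scores[title] = scores.get(title, 0.0) + sim * value
    return max(scores, key=scores.get)

print(recommend("you"))  # Space Heist
```

Nobody wrote a rule saying "science fiction fans who like Korean dramas enjoy Space Heist". The recommendation falls out of the overlap between your ratings and other users' ratings.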

7.3 How Google Maps Predicts Traffic

When Google Maps shows you estimated travel time, it is using machine learning to predict traffic conditions.

The system combines real-time data from phones moving along roads with historical traffic patterns. It learns that certain roads are congested at specific times on specific days. It also factors in current conditions—accidents, weather, events—and predicts how traffic will evolve over your journey.

The model is constantly updated as new data arrives. If traffic clears faster than expected, the system adjusts its predictions for users still on the road.

7.4 How Self-Driving Cars See the World

A self-driving car combines multiple AI systems to understand its environment.

Cameras, radar, and lidar sensors capture data about the surroundings. Computer vision models detect other vehicles, pedestrians, lane markings, traffic signs, and obstacles. Separate models predict the future movement of other objects—where that pedestrian is likely to walk, whether that car will change lanes.

A planning model then determines the car’s actions: accelerate, brake, change lanes, or turn. The entire process happens in milliseconds, repeated dozens of times per second.

7.5 How Medical AI Predicts Missed Appointments

Healthcare AI tools like Deep Medical predict which patients are at risk of missing appointments.

The model is trained on historical appointment data, learning patterns associated with no-shows: previous attendance history, distance to the clinic, appointment type, time of day, socioeconomic factors, and even weather conditions on the appointment day.

When a new appointment is scheduled, the model calculates a risk score. High-risk appointments trigger proactive outreach—reminder calls, text messages, or offering alternative times. The result is a 50% reduction in missed appointments, unlocking thousands of additional care slots.

Section 8: How AI Learns to Improve Over Time

8.1 Continuous Learning

Many AI systems are not static. They continue learning after deployment, improving as they encounter new data.

A fraud detection system learns from new fraud patterns. A recommendation engine adapts to changing user tastes. A search engine improves as it sees what results users click.

This continuous learning loop is powerful: more usage leads to more data, which leads to better models, which leads to more usage.

8.2 Feedback Loops

Human feedback is critical for improvement. When you click “not interested” on a recommendation, that is feedback. When you thumbs-down a chatbot response, that is feedback. When a radiologist corrects an AI’s image analysis, that is feedback.

The most sophisticated AI systems use reinforcement learning from human feedback (RLHF), where human evaluators rank model outputs, and the model learns to produce responses that align with human preferences. This is what makes modern chatbots helpful and aligned with user needs.

8.3 Transfer Learning

One of the most powerful techniques in AI is transfer learning: taking a model trained on one task and adapting it to another.

Large language models are the ultimate example. A model trained to predict the next word on internet text can be fine-tuned to write legal contracts, answer medical questions, or generate code—with relatively little additional training. This transfer of knowledge makes AI development far more efficient.

Section 9: How MHTECHIN Helps You Understand and Implement AI

Understanding how AI works is essential, but translating that understanding into real-world results requires expertise. MHTECHIN bridges the gap between theory and practice.

9.1 For Beginners: Demystifying AI Through Hands-On Learning

MHTECHIN’s AI/ML workshops take you beyond abstract explanations to practical implementation. You will not just learn how AI works—you will build systems that work.

The curriculum covers the fundamentals we have discussed in this guide: machine learning algorithms, neural network architectures, and training processes. But the focus is on hands-on projects. You will train models on real datasets, experiment with different algorithms, and see firsthand how adjustments affect performance.

For those who have read our beginner’s guides, these workshops provide the next step: moving from understanding to doing.

9.2 For Businesses: Implementing AI That Works

MHTECHIN helps organizations deploy AI systems that deliver measurable results. The approach begins with understanding your data, your use cases, and your infrastructure.

For businesses exploring AI, MHTECHIN conducts readiness assessments that evaluate data quality, infrastructure capacity, and organizational preparedness. For organizations ready to deploy, the team builds custom models—from predictive analytics to agentic systems—tailored to specific business challenges.

All deployments leverage cloud infrastructure from AWS, Azure, and Google Cloud, with security and compliance built in. Healthcare clients benefit from HIPAA-eligible deployments; financial services clients get audit trails and encryption standards.

9.3 The MHTECHIN Approach

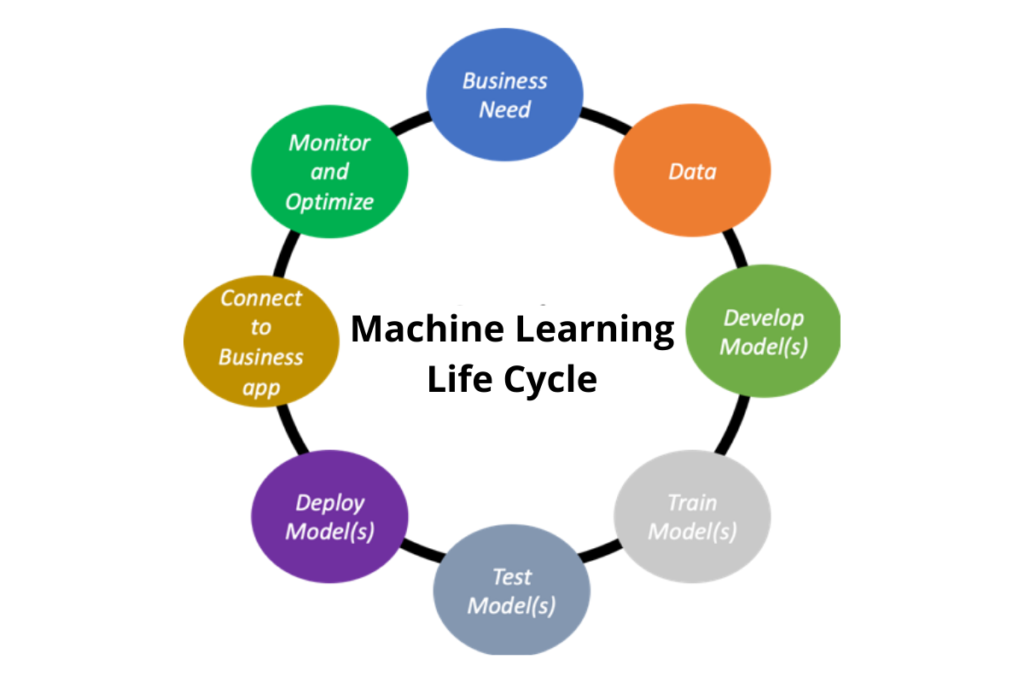

MHTECHIN’s expertise spans the entire AI lifecycle: from data preparation and model selection through training, evaluation, deployment, and continuous improvement. The team understands that AI is not a one-time project but an ongoing capability that requires monitoring, feedback, and refinement.

For individuals and organizations alike, MHTECHIN provides the guidance to move from curiosity to capability—turning the question “how does AI work?” into the answer “here is how we use it to achieve real results.”

Section 10: Frequently Asked Questions About How AI Works

10.1 Q: How does AI learn in simple terms?

A: AI learns by finding patterns in data. Instead of being programmed with explicit rules, an AI system is fed thousands or millions of examples. It starts with random guesses, measures how wrong it is, and makes tiny adjustments to improve. After repeating this process millions of times, it becomes highly accurate at making predictions on new, unseen data.

10.2 Q: Does AI think like a human?

A: No. AI systems process information in ways that are fundamentally different from human thinking. They find statistical patterns in data but do not have consciousness, understanding, or intent. When an AI generates text, it is predicting likely word sequences based on training data—not “thinking” about what it is saying.

10.3 Q: How do large language models like ChatGPT work?

A: LLMs are trained to predict the next word in a sequence. By processing trillions of words from books, websites, and documents, they learn grammar, facts, reasoning patterns, and style. After initial training, they undergo fine-tuning with instruction data and reinforcement learning from human feedback to become helpful conversational agents.

10.4 Q: How does facial recognition work?

A: Facial recognition systems use neural networks to detect faces, align them, extract unique facial features, and create a mathematical representation (embedding) that captures the person’s unique characteristics. When verifying identity, the system compares the embedding of the current face against stored embeddings of known faces.

10.5 Q: How do AI image generators create new images?

A: Modern image generators use diffusion models. During training, the model learns to reverse a process of adding noise to images. When generating a new image, it starts with random noise and iteratively refines it, guided by a text prompt, until a coherent image emerges. The result is a completely new image that has never existed before.

10.6 Q: Why do AI systems sometimes make mistakes?

A: AI systems make mistakes for several reasons. They may have been trained on incomplete or biased data. They may encounter situations not well represented in their training. Language models can hallucinate—generating confident but false information because they are predicting words, not retrieving facts. Human verification remains essential for important decisions.

10.7 Q: How does AI improve over time?

A: AI improves through continuous learning. Many systems collect feedback from users—clicks, ratings, corrections—and use that feedback to refine their models. Some systems are regularly retrained on new data. The most sophisticated systems use reinforcement learning from human feedback, where human evaluators rank outputs and the model learns to produce more helpful responses.

10.8 Q: How much data does AI need to learn?

A: It depends on the complexity of the task. A simple spam filter might need thousands of examples. A large language model requires trillions of words, which amounts to a large share of the text publicly available on the internet. For custom business applications, MHTECHIN helps organizations determine the data requirements based on their specific use cases.

10.9 Q: Do I need to be a programmer to understand how AI works?

A: Not at all. While building AI systems requires programming skills, understanding how they work does not. The core concepts—learning from examples, finding patterns, making predictions—are accessible to anyone. MHTECHIN’s workshops are designed for both technical and non-technical learners who want to understand AI at a practical level.

10.10 Q: How can I start building AI systems myself?

A: Start with foundational learning. Free resources like Microsoft’s AI-900 Azure AI Fundamentals cover the basics. Then use free cloud resources like Azure for Students or Google Colab to build simple projects—a note summarizer, a chatbot, or a basic image classifier. For structured guidance, MHTECHIN offers hands-on workshops that take you from concept to working models. See our Beginner’s Guide to AI for a detailed roadmap.

Section 11: Conclusion—AI Is Not Magic, It Is Mathematics

Artificial intelligence can seem magical. It writes poetry, creates art, and drives cars. But behind the impressive capabilities is a straightforward principle: AI learns from examples.

The three ingredients—data, algorithms, and computing power—combine to create systems that can recognize patterns, make predictions, and generate new content. Training a model involves iterative improvement, starting with random guesses and making tiny adjustments until accuracy emerges. Inference applies that learned knowledge to new situations in milliseconds.

Understanding how AI works demystifies the technology. It reveals that AI is not a mysterious intelligence but a tool—one that is most powerful when used in partnership with human judgment, creativity, and oversight.

For individuals, the path forward is to build literacy and practical skills. For organizations, the opportunity is to deploy AI systems that solve real problems and deliver measurable ROI. In both cases, the foundation is the same: understanding the how behind the what.

Ready to move from understanding to doing? Explore MHTECHIN’s AI/ML workshops and enterprise implementation services at www.mhtechin.com. From foundational training to custom AI systems, our team helps you harness the power of artificial intelligence—not as magic, but as mathematics that works.

This guide is brought to you by MHTECHIN—transforming AI concepts into practical capability through expert training and enterprise implementation. For personalized guidance on AI learning paths or business AI strategy, reach out to the MHTECHIN team today.
