Introduction

You submit a loan application. The bank’s AI system denies it. You ask why. The answer: “The model said no.” No explanation. No reasons. No way to understand what went wrong or how to fix it. This is the reality of black-box AI—models that make decisions without offering any insight into how or why those decisions were made.

For years, this trade-off was accepted: accuracy came at the cost of transparency. The most powerful AI models—deep neural networks with billions of parameters—were also the most inscrutable. You could not look inside and see why they made a particular prediction. They worked, but no one could explain how.

In 2026, this is no longer acceptable. Regulators are demanding explainability. Customers are demanding transparency. And organizations are realizing that trust, not just accuracy, is essential for AI adoption. This has given rise to Explainable AI (XAI), a field focused on making AI models understandable to humans.

This article explains what Explainable AI is, why it matters, how it works, and how organizations can build transparency into their AI systems. Whether you are a business leader deploying AI, a compliance officer navigating regulations, or someone building foundational AI literacy, this guide will help you understand the shift from black-box to transparent AI.

For a foundational understanding of how AI models learn and make decisions, you may find our guide on Supervised vs Unsupervised vs Reinforcement Learning helpful as a starting point.

Throughout, we will highlight how MHTECHIN helps organizations build AI systems that are not only accurate but also explainable, auditable, and trustworthy.

Section 1: What Is Explainable AI (XAI)?

1.1 A Simple Definition

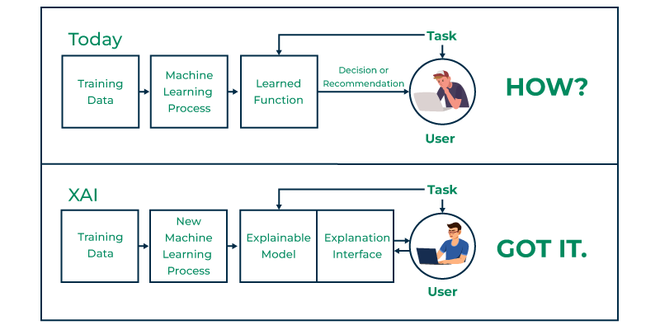

Explainable AI (XAI) is a set of methods and techniques that enable humans to understand and trust the decisions made by AI models. XAI answers the question: why did the AI make that particular decision?

The goal is to make AI systems transparent—to open the black box and reveal how inputs are transformed into outputs. This is not just about curiosity. It is about trust, accountability, compliance, and debugging.

1.2 The Black-Box Problem

Many of today’s most powerful AI models—especially deep neural networks—are black boxes. They take inputs, produce outputs, and in between, the process is opaque. You cannot look inside and see why a model decided that an image contained a cat, that a transaction was fraudulent, or that a loan applicant was high-risk.

This opacity creates problems:

- Trust. If you do not know why an AI made a decision, why should you trust it?

- Accountability. Who is responsible when an AI makes a harmful decision?

- Debugging. How do you fix a model when it makes mistakes?

- Compliance. How do you prove to regulators that your AI is fair and non-discriminatory?

Explainable AI addresses these problems by providing insights into model behavior.

1.3 Why Explainability Matters

Explainability is not just an academic nicety. It has become a business and regulatory imperative.

Regulatory compliance. In 2026, regulations like the EU’s AI Act, the GDPR’s provisions on automated decision-making (often summarized as a “right to explanation”), and sector-specific rules in finance and healthcare increasingly require that automated decisions be explainable. Organizations that cannot explain their AI face fines, legal liability, and reputational damage.

Trust and adoption. Users are more likely to adopt AI when they understand how it works. A doctor will not trust a diagnosis without understanding the reasoning. A loan officer will not approve a recommendation without seeing the factors.

Debugging and improvement. Explainability helps developers identify model flaws. If a model is relying on the wrong features—like background patterns rather than actual objects—explanations can reveal this and guide fixes.

Fairness and bias detection. Explanations can reveal whether a model is discriminating unfairly. If a credit model consistently gives lower scores based on zip code (which can act as a proxy for race), explanations can surface that reliance so it can be investigated.

Section 2: How Explainable AI Works

2.1 Intrinsic vs. Post-Hoc Explainability

There are two broad approaches to explainability:

Intrinsic explainability. Some models are inherently interpretable. Decision trees, linear regression, and logistic regression allow you to see exactly how inputs affect outputs. You can trace the decision path. These models are transparent by design.

Post-hoc explainability. For complex models like deep neural networks, the parameters are technically visible but far too numerous to interpret directly. Instead, we use techniques that approximate explanations after the model has made a decision. These techniques provide insights without requiring the model itself to be interpretable.

The choice depends on the trade-off: intrinsic models are more transparent but may be less accurate on complex problems. Post-hoc methods preserve accuracy while adding a layer of explanation.
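To make "transparent by design" concrete, here is a minimal sketch of a logistic-regression-style credit score. The weights, bias, and feature names are hypothetical values chosen for illustration, not taken from any real model. Because the score is a weighted sum, each feature's contribution can be read off directly.

```python
import math

# Hypothetical coefficients for a toy credit model (illustrative values only).
WEIGHTS = {"income_k": 0.03, "debt_to_income": -4.0, "missed_payments": -0.8}
BIAS = -0.5

def explain_linear(applicant):
    """Return the approval probability and each feature's contribution to the log-odds."""
    contributions = {name: WEIGHTS[name] * applicant[name] for name in WEIGHTS}
    log_odds = BIAS + sum(contributions.values())
    probability = 1.0 / (1.0 + math.exp(-log_odds))
    return probability, contributions

applicant = {"income_k": 60, "debt_to_income": 0.45, "missed_payments": 1}
probability, contributions = explain_linear(applicant)

# List contributions from most negative to most positive.
for name, value in sorted(contributions.items(), key=lambda kv: kv[1]):
    print(f"{name:>16}: {value:+.2f}")
print(f"approval probability: {probability:.2f}")
```

No separate explanation technique is needed here: the explanation is the model. That is the property a deep neural network gives up.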

2.2 Global vs. Local Explanations

Explainability can operate at two levels:

Global explanations. These describe the overall behavior of the model. What features are most important generally? What patterns does the model rely on across all predictions? Global explanations help you understand the model as a whole.

Local explanations. These explain a single prediction. Why did this specific loan get denied? What features drove this particular fraud flag? Local explanations help you understand individual cases.

Both are important. Global explanations help with model validation and trust. Local explanations help with individual accountability and debugging.

2.3 Common Explainability Techniques

| Technique | What It Does | Best For |
|---|---|---|
| Feature Importance | Ranks which inputs most influence predictions | Understanding overall model drivers |
| LIME | Creates a simple, interpretable model around a single prediction | Local explanations; explaining individual decisions |
| SHAP | Assigns each feature a value representing its contribution to a prediction | Local and global explanations; mathematically grounded |
| Decision Trees | Intrinsically interpretable models; shows decision paths | When transparency is the priority |
| Attention Maps | For vision or language models; shows what the model “looked at” | Understanding what parts of an image or text influenced the decision |
| Counterfactuals | Shows what would need to change to get a different outcome | Actionable insights; “what if” scenarios |

Section 3: Explainability Techniques in Depth

3.1 Feature Importance

Feature importance ranks input variables by how much they influence the model’s predictions. For a credit model, feature importance might show that income is the most important factor, followed by debt-to-income ratio, followed by credit score.

This is a global explanation—it tells you what matters overall. It does not explain why a specific applicant was denied, but it helps you understand the model’s priorities.
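One common way to compute such a ranking is permutation importance: shuffle one feature's values and measure how much the model's accuracy drops. The sketch below uses a hypothetical stand-in model and synthetic data, including a deliberately irrelevant `shoe_size` feature. All names and numbers are assumptions for illustration.

```python
import random

def model(row):
    """Stand-in black-box model: approves (1) when a hidden score is positive."""
    income, dti, shoe_size = row  # shoe_size is deliberately ignored by the model
    return 1 if (0.05 * income - 6.0 * dti) > 0 else 0

def accuracy(rows, labels):
    return sum(model(r) == y for r, y in zip(rows, labels)) / len(rows)

def permutation_importance(rows, labels, n_features, n_repeats=20, seed=0):
    """Average drop in accuracy when each feature column is shuffled in turn."""
    rng = random.Random(seed)
    baseline = accuracy(rows, labels)
    result = []
    for j in range(n_features):
        drops = []
        for _ in range(n_repeats):
            column = [r[j] for r in rows]
            rng.shuffle(column)
            shuffled = [r[:j] + (v,) + r[j + 1:] for r, v in zip(rows, column)]
            drops.append(baseline - accuracy(shuffled, labels))
        result.append(sum(drops) / n_repeats)
    return result

data_rng = random.Random(42)
rows = [(data_rng.uniform(20, 120), data_rng.uniform(0.1, 0.9), data_rng.uniform(36, 47))
        for _ in range(500)]
labels = [model(r) for r in rows]  # labels come from the model itself, so baseline accuracy is 1.0

names = ["income", "debt_to_income", "shoe_size"]
importances = permutation_importance(rows, labels, n_features=3)
for name, imp in zip(names, importances):
    print(f"{name:>15}: {imp:.3f}")
```

The ignored feature scores zero because shuffling it cannot change any prediction, while the two features the model actually uses show a clear drop.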

3.2 LIME (Local Interpretable Model-Agnostic Explanations)

LIME works by creating a simple, interpretable model that approximates the complex model’s behavior around a specific prediction. For a loan denial, LIME might show that the primary factors were high debt-to-income ratio and a recent missed payment.

LIME is “model-agnostic”—it works with any AI model, regardless of architecture. It provides local explanations for individual predictions.
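The core idea can be sketched in a few dozen lines: perturb the input, query the black box, weight samples by proximity, and fit a weighted linear model. This is a simplified illustration in the spirit of LIME, not the LIME library's implementation. The `black_box` function, feature scales, and kernel width are all assumptions.

```python
import math
import random

def black_box(x):
    """Stand-in for an opaque model: a nonlinear approval score in [0, 1]."""
    income, dti = x
    return 1.0 / (1.0 + math.exp(-(0.04 * income - 8.0 * dti ** 2)))

def solve(a, b):
    """Solve the small linear system a @ w = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    w = [0.0] * n
    for i in reversed(range(n)):
        w[i] = (m[i][n] - sum(m[i][j] * w[j] for j in range(i + 1, n))) / m[i][i]
    return w

def local_surrogate(x0, scales, n_samples=2000, kernel_width=1.0, seed=0):
    """Fit a proximity-weighted linear model to the black box around x0."""
    rng = random.Random(seed)
    d = len(x0)
    xtx = [[0.0] * (d + 1) for _ in range(d + 1)]
    xty = [0.0] * (d + 1)
    for _ in range(n_samples):
        z = [rng.gauss(0.0, 1.0) for _ in range(d)]      # perturbation in standardized units
        x = [x0[i] + z[i] * scales[i] for i in range(d)]  # perturbed input in original units
        weight = math.exp(-sum(v * v for v in z) / kernel_width ** 2)  # nearby samples count more
        feats = [1.0] + z
        y = black_box(x)
        for i in range(d + 1):
            xty[i] += weight * feats[i] * y
            for j in range(d + 1):
                xtx[i][j] += weight * feats[i] * feats[j]
    return solve(xtx, xty)  # [intercept, coefficient per standardized feature]

x0 = [55.0, 0.5]  # income in thousands, debt-to-income ratio
intercept, income_coef, dti_coef = local_surrogate(x0, scales=[15.0, 0.1])
print(f"income effect per scale unit:         {income_coef:+.3f}")
print(f"debt-to-income effect per scale unit: {dti_coef:+.3f}")
```

The coefficients describe the black box only in the neighborhood of `x0`. A different applicant would get a different surrogate, which is what makes the explanation local.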

3.3 SHAP (SHapley Additive exPlanations)

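SHAP is based on Shapley values from cooperative game theory and assigns each feature a value representing its contribution to a prediction, measured relative to a baseline such as the model’s average prediction. For a house price prediction, SHAP might show that location added $50,000 to the predicted price, while age subtracted $10,000.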

SHAP is mathematically rigorous and provides both local and global explanations. It is widely used in regulated industries because it offers consistent, theoretically grounded explanations.
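For a handful of features, Shapley values can be computed exactly by averaging each feature's marginal contribution over all subsets of the other features. The sketch below does this for a hypothetical house-price model, with "missing" features replaced by a single reference house. That reference is a simplifying assumption; the SHAP library typically averages over a background dataset instead.

```python
from itertools import combinations
from math import factorial

def price_model(features):
    """Hypothetical house-price model (illustrative numbers only)."""
    price = 200_000
    price += 50_000 if features["location"] == "city" else 0
    price -= 1_000 * features["age_years"]
    price += 150 * features["area_sqm"]
    return price

def shapley_values(model, instance, baseline):
    """Exact Shapley values; features outside a coalition take their baseline value."""
    names = list(instance)
    n = len(names)

    def value(coalition):
        mixed = {k: (instance[k] if k in coalition else baseline[k]) for k in names}
        return model(mixed)

    phi = {}
    for name in names:
        others = [k for k in names if k != name]
        total = 0.0
        for size in range(n):
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                with_feature = value(set(subset) | {name})
                without_feature = value(set(subset))
                total += weight * (with_feature - without_feature)
        phi[name] = total
    return phi

instance = {"location": "city", "age_years": 30, "area_sqm": 120}
baseline = {"location": "suburb", "age_years": 20, "area_sqm": 100}  # assumed reference house
phi = shapley_values(price_model, instance, baseline)
for name, contribution in phi.items():
    print(f"{name:>10}: {contribution:+,.0f}")
```

The contributions sum to the difference between the prediction for this house and the prediction for the reference house. The cost doubles with every added feature, which is why practical tools rely on approximations.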

3.4 Attention Maps

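For language and vision models built on transformers, attention maps show which parts of the input the model weighted most heavily. For convolutional vision models (CNNs), which have no attention mechanism by default, saliency methods such as Grad-CAM produce similar heatmaps. In an image recognition model, the map might highlight the cat’s face rather than the background. In a text model, it might highlight key phrases like “not satisfied” in a customer review.

Attention maps are intuitive and easy to visualize, making them popular for debugging and building trust. They show where the model focused, not necessarily why it reached its decision, so they are best read alongside other techniques.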
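In a transformer, the numbers behind an attention map are softmax-normalized similarity scores between a query vector and a key vector for each token. The sketch below computes them for one query. The key and query vectors are hand-picked toy values, not learned by any model.

```python
import math

def softmax(scores):
    """Numerically stable softmax: subtract the max before exponentiating."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention_weights(query, keys):
    """Scaled dot-product attention weights of one query over a list of key vectors."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    return softmax(scores)

tokens = ["I", "am", "not", "satisfied", "today"]
# Hypothetical key vectors, one per token (toy values for illustration).
keys = [[0.1, 0.0], [0.1, 0.1], [2.0, 1.5], [1.8, 2.0], [0.3, 0.2]]
query = [1.0, 1.0]

weights = attention_weights(query, keys)
for token, w in zip(tokens, weights):
    print(f"{token:>10} {'#' * round(w * 40)} {w:.2f}")
```

Plotting these weights over the tokens (or over image patches) is what produces the familiar heatmap. A real model has many layers and heads, so deciding which weights to visualize is itself a design choice.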

3.5 Counterfactual Explanations

Counterfactual explanations answer the question: what would need to change for the outcome to be different? For a loan denial, a counterfactual might show: if your debt-to-income ratio were 5 percentage points lower, the loan would have been approved.

Counterfactuals are actionable—they tell users exactly what they could change to get a different result. This is far more useful than simply saying “your application was denied.”
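A minimal way to find a counterfactual is to nudge one feature at a time until the decision flips. The sketch below assumes a hypothetical approval rule with integer-scaled weights. Production tools search over several features at once and add constraints, for example that only changeable features may move.

```python
def approve(applicant):
    """Hypothetical approval rule standing in for a trained model."""
    score = (3 * applicant["income_k"]
             - 4 * applicant["dti_pct"]
             - 80 * applicant["missed_payments"])
    return score >= -60

def smallest_change(applicant, feature, step, max_steps=100):
    """Move one feature in fixed steps until the decision flips; return the first flipping value."""
    candidate = dict(applicant)  # copy so the original applicant is left untouched
    for _ in range(max_steps):
        candidate[feature] += step
        if approve(candidate):
            return candidate[feature]
    return None  # no counterfactual found within the search budget

applicant = {"income_k": 60, "dti_pct": 45, "missed_payments": 1}
print("approved as submitted:", approve(applicant))

dti_target = smallest_change(applicant, "dti_pct", step=-1)
income_target = smallest_change(applicant, "income_k", step=1)
print(f"approved if debt-to-income fell from {applicant['dti_pct']}% to {dti_target}%")
print(f"approved if income rose from {applicant['income_k']}k to {income_target}k")
```

Offering more than one counterfactual lets the applicant choose the change that is most realistic for them.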

Section 4: Why Explainable AI Matters Across Industries

4.1 Financial Services

In finance, explainability is not optional—it is required. Regulators demand that credit decisions be explainable and non-discriminatory. A bank cannot simply say “the AI said no.” It must provide specific reasons: debt-to-income ratio too high, insufficient credit history, recent missed payments.

Explainability also helps with fairness. If a model is discriminating against protected groups, explanations can help reveal it. Feature importance might show that zip code, which can act as a proxy for race, is a major factor, flagging potential bias for further review.

4.2 Healthcare

In healthcare, the stakes are life and death. A doctor will not trust an AI diagnosis without understanding the reasoning. If an AI flags a suspicious spot on a mammogram, the radiologist needs to know what features the AI saw—texture, shape, contrast—to validate the finding.

Explainability also enables collaboration. The AI and the clinician work together, with explanations helping the clinician understand the AI’s reasoning and decide whether to trust it.

4.3 Legal and Compliance

AI is increasingly used in legal settings—sentencing recommendations, bail decisions, contract review. Without explainability, these applications are ethically and legally untenable. A judge cannot base a sentencing decision on a black-box recommendation. Explainability provides the transparency that due process requires.

4.4 Human Resources

AI systems screen resumes, predict employee performance, and identify high-potential candidates. Without explainability, these systems risk perpetuating bias. Explainability reveals whether the model is relying on legitimate factors (skills, experience) or inappropriate proxies (gender, age, zip code).

4.5 Manufacturing and Industrial AI

When AI predicts equipment failure, maintenance teams need to know why. Is the model flagging vibration? Temperature? Acoustic anomalies? Explainability helps maintenance teams diagnose problems and take appropriate action, rather than blindly trusting the AI.

Section 5: Challenges in Explainable AI

5.1 The Accuracy-Explainability Trade-Off

On complex tasks such as image and language understanding, the most accurate models (deep neural networks with billions of parameters) are also the least explainable. Simpler models like decision trees are transparent but often less accurate on those problems.

This creates a tension. Organizations want both accuracy and explainability. The solution is often a hybrid approach: use explainability techniques (like SHAP) to explain complex models, or use simpler models when transparency is critical.

5.2 Explanation Complexity

Even with explainability techniques, explanations can be complex. A SHAP analysis might show that dozens of features contributed to a decision, making the explanation difficult to digest. The challenge is to provide explanations that are both accurate and understandable to non-experts.

5.3 Trade Secrets and Proprietary Models

Some organizations resist explainability because they consider their AI models proprietary. Revealing how the model works could expose intellectual property. However, regulatory pressure is pushing toward transparency, and many organizations are finding ways to provide explanations without revealing core IP.

5.4 User Understanding

An explanation is only useful if the user understands it. A technical SHAP plot is meaningless to a loan applicant. Explainable AI must consider the audience—providing different levels of explanation for regulators, developers, and end users.

Section 6: How MHTECHIN Helps with Explainable AI

Building AI systems that are both powerful and explainable requires expertise in both model development and interpretability techniques. MHTECHIN helps organizations design, implement, and deploy explainable AI systems that meet regulatory requirements and build trust.

6.1 For Strategy and Governance

MHTECHIN helps organizations establish AI governance frameworks that include explainability requirements. For each use case, we define:

- What level of explainability is required? Global, local, or both?

- Who is the audience? Regulators, developers, end users?

- What regulatory requirements apply? EU AI Act, GDPR, sector-specific rules?

6.2 For Model Selection and Development

When accuracy and transparency are both important, MHTECHIN helps select the right approach:

- Intrinsic explainability. Use interpretable models (decision trees, linear models) when transparency is the priority.

- Post-hoc explainability. Use complex models with explanation techniques (SHAP, LIME) when accuracy requires it.

- Hybrid approaches. Combine simple models for critical decisions with complex models for support.

6.3 For Explanation Implementation

MHTECHIN implements explainability techniques tailored to your models and users:

- SHAP and LIME. For model-agnostic explanations across complex models.

- Attention maps. For vision and language models.

- Counterfactuals. For actionable insights.

- Custom dashboards. For presenting explanations to different audiences.

6.4 For Testing and Validation

MHTECHIN helps organizations validate that explanations are accurate and meaningful. We test:

- Fidelity. Do explanations accurately reflect model behavior?

- Stability. Are explanations consistent across similar inputs?

- Usability. Do target users understand the explanations?
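The first two checks can be automated. The sketch below is one possible way to score them, using a hypothetical model and a toy sensitivity-based explanation. Fidelity is measured as the gap between the model and the linear approximation the explanation implies, and stability as the largest change in the explanation between nearby inputs. The function names and radii are assumptions. Usability still needs testing with real users.

```python
import math
import random

def model(x):
    """Stand-in scoring model (hypothetical)."""
    return 1.0 / (1.0 + math.exp(-(0.04 * x[0] - 4.0 * x[1])))

def explain(x, eps=1e-4):
    """Toy local explanation: finite-difference sensitivity of the score to each feature."""
    base = model(x)
    grads = []
    for i in range(len(x)):
        bumped = list(x)
        bumped[i] += eps
        grads.append((model(bumped) - base) / eps)
    return grads

def fidelity_error(x, radius, n=200, seed=0):
    """Mean absolute gap between the model and the linear approximation implied by explain(x)."""
    rng = random.Random(seed)
    base, grads = model(x), explain(x)
    gaps = []
    for _ in range(n):
        delta = [rng.uniform(-r, r) for r in radius]
        approx = base + sum(g * d for g, d in zip(grads, delta))
        gaps.append(abs(model([a + d for a, d in zip(x, delta)]) - approx))
    return sum(gaps) / n

def stability_gap(x, radius, n=200, seed=1):
    """Largest change in any attribution when the input is nudged within the radius."""
    rng = random.Random(seed)
    reference = explain(x)
    worst = 0.0
    for _ in range(n):
        neighbor = [a + rng.uniform(-r, r) for a, r in zip(x, radius)]
        diffs = [abs(a - b) for a, b in zip(explain(neighbor), reference)]
        worst = max(worst, max(diffs))
    return worst

x = [55.0, 0.5]      # income in thousands, debt-to-income ratio
tight = [2.0, 0.02]  # small neighborhood around x
wide = [30.0, 0.3]   # much larger neighborhood

print(f"fidelity error (tight): {fidelity_error(x, tight):.5f}")
print(f"fidelity error (wide):  {fidelity_error(x, wide):.5f}")
print(f"stability gap (tight):  {stability_gap(x, tight):.5f}")
```

Comparing the tight and wide neighborhoods shows how far from the explained point an explanation can still be relied on.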

6.5 The MHTECHIN Approach

MHTECHIN’s explainable AI practice is grounded in the principle that transparency builds trust. The team combines deep technical expertise with an understanding of regulatory requirements and user needs. For organizations deploying AI in regulated or high-stakes environments, MHTECHIN provides the expertise to build systems that are both powerful and transparent.

Section 7: Frequently Asked Questions

7.1 Q: What is Explainable AI in simple terms?

A: Explainable AI (XAI) is a set of methods that help humans understand how AI models make decisions. Instead of treating AI as a black box, XAI provides insights into why a model made a particular prediction—showing which factors were most important and how they influenced the outcome.

7.2 Q: Why is Explainable AI important?

A: Explainable AI is essential for trust, accountability, regulatory compliance, and debugging. Without explainability, organizations cannot verify that AI is fair, cannot fix errors, and cannot comply with regulations like the EU AI Act or GDPR’s right to explanation.

7.3 Q: What is the difference between intrinsic and post-hoc explainability?

A: Intrinsic explainability comes from models that are inherently interpretable—like decision trees or linear regression. Post-hoc explainability applies to complex models (like neural networks) after they are trained, using techniques like SHAP or LIME to approximate explanations.

7.4 Q: What is SHAP?

A: SHAP (SHapley Additive exPlanations) is a mathematically rigorous technique that assigns each feature a value representing its contribution to a prediction. It provides both local explanations (for individual predictions) and global explanations (for overall model behavior).

7.5 Q: What is LIME?

A: LIME (Local Interpretable Model-Agnostic Explanations) creates a simple, interpretable model around a single prediction to explain why the complex model made that decision. It is model-agnostic—it works with any AI model.

7.6 Q: Can Explainable AI help detect bias?

A: Yes. Explainability techniques can reveal whether a model is relying on inappropriate factors—like gender, race, or zip code—to make decisions. This allows organizations to identify and correct bias before deployment.

7.7 Q: Is there a trade-off between accuracy and explainability?

A: Often, yes. The most accurate models (deep neural networks) are the least explainable. Simpler models (decision trees) are more transparent but may be less accurate. Post-hoc explainability techniques narrow the gap: you keep the accuracy of the complex model and add explanations, with the caveat that those explanations are approximations of its behavior.

7.8 Q: What are counterfactual explanations?

A: Counterfactual explanations answer the question: what would need to change for the outcome to be different? For a loan denial, a counterfactual might show that if your debt-to-income ratio were 5 percentage points lower, the loan would have been approved. These are highly actionable for users.

7.9 Q: How does the EU AI Act affect explainability?

A: The EU AI Act requires that high-risk AI systems be transparent and explainable. Organizations must provide meaningful explanations of how decisions are made, particularly for applications in employment, credit, healthcare, and law enforcement.

7.10 Q: How does MHTECHIN help with Explainable AI?

A: MHTECHIN helps organizations design, implement, and deploy explainable AI systems. We provide strategy, model selection, explanation implementation, testing, and validation—ensuring your AI is both powerful and transparent.

Section 8: Conclusion—Opening the Black Box

For years, the AI industry operated on a simple bargain: we will give you powerful models, but you cannot look inside. Accuracy was the priority; transparency was the cost. In 2026, that bargain is broken.

Regulators demand explanations. Customers demand transparency. And organizations are realizing that trust—not just accuracy—is essential for AI adoption. An AI that cannot explain itself is an AI that cannot be trusted.

Explainable AI is not about replacing black-box models with simpler ones. It is about opening the black box—providing insights into how models work, why they make decisions, and how to improve them. It is about building AI that is not only powerful but also accountable, fair, and trustworthy.

For organizations deploying AI, the path forward is clear: build transparency into your systems from the start. Choose models that balance accuracy with explainability. Use post-hoc techniques to explain complex decisions. And work with partners who understand that transparency is not a constraint—it is a feature.

Ready to open the black box? Explore MHTECHIN’s Explainable AI services at www.mhtechin.com. From strategy through implementation, our team helps you build AI that is both powerful and transparent.

This guide is brought to you by MHTECHIN—helping organizations build AI systems that are accurate, accountable, and explainable. For personalized guidance on Explainable AI strategy or implementation, reach out to the MHTECHIN team today.
