Introduction

Artificial intelligence is transforming how businesses operate. It can make decisions faster than people can, process data at a scale no team could handle manually, and surface patterns that are easy for humans to miss. But with this power comes responsibility.

An AI system that denies loans without explanation. A hiring algorithm that screens out qualified candidates from certain backgrounds. A chatbot that generates harmful content. These are not hypothetical risks—they are real failures that have cost organizations millions in fines, lawsuits, and reputational damage.

The difference between AI that creates value and AI that creates harm often comes down to one thing: ethics. Organizations that build AI on a foundation of ethical principles are better positioned to avoid pitfalls, earn trust, and innovate sustainably. Those that treat ethics as an afterthought eventually pay the price.

This article outlines the core ethical AI principles every business should adopt—not as abstract ideals, but as practical commitments that guide development, deployment, and governance. Whether you are a CEO setting strategy, a product manager building AI features, or a data scientist writing code, these principles provide a framework for responsible innovation.

For a foundational understanding of how to govern AI systems effectively, you may find our guide on AI Governance Frameworks for Enterprises helpful as a starting point.

Throughout, we will highlight how MHTECHIN helps organizations translate ethical principles into practice—embedding ethics into AI systems from design through deployment.

Section 1: Why Ethical AI Matters

1.1 The Stakes Are Higher Than Ever

AI is no longer experimental. It is making consequential decisions that affect people’s lives:

- Whether someone gets a loan or is denied

- Whether a job applicant moves forward or is filtered out

- Whether a patient receives an accurate diagnosis

- Whether a driver avoids an accident

- Whether a customer receives fair treatment

When AI makes mistakes in these domains, the consequences are not minor. They are life-altering. Ethical AI is not a nice-to-have—it is a necessity.

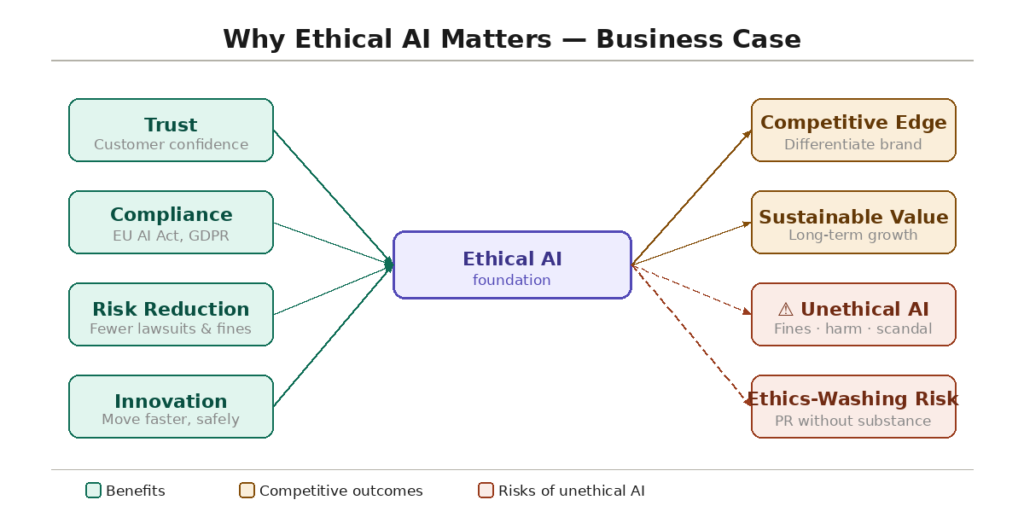

1.2 The Business Case for Ethical AI

Beyond moral obligation, ethical AI makes business sense:

Trust. Customers, employees, and partners are more likely to engage with organizations they trust. A single ethical failure can destroy years of trust.

Regulatory compliance. Regulators increasingly expect ethical AI. The EU AI Act, GDPR, and sector-specific regulations impose requirements related to fairness, transparency, and accountability. Non-compliance carries significant fines.

Risk reduction. Ethical failures lead to lawsuits, regulatory actions, and reputational damage. Proactive ethics reduces these risks.

Innovation enablement. Organizations with clear ethical guidelines can move faster. They have confidence that their AI systems are safe and responsible.

Competitive advantage. In a world where AI failures make headlines, ethical AI is a differentiator. Organizations that prioritize ethics stand out.

1.3 The Cost of Unethical AI

The examples are numerous:

- A hiring algorithm systematically discriminated against women, leading to a multimillion-dollar settlement

- A credit model denied loans to qualified applicants based on zip code (a proxy for race), triggering regulatory action

- A chatbot generated harmful content, causing public outrage and brand damage

- A medical AI made inaccurate recommendations because it was trained on non-representative data

These failures share a common theme: organizations did not embed ethical principles into their AI systems from the start.

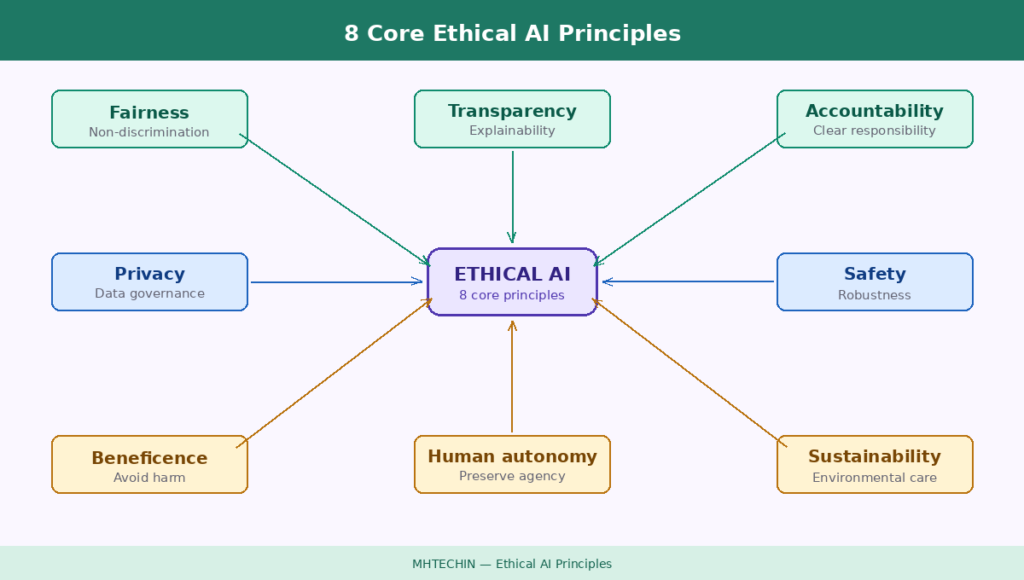

Section 2: Core Ethical AI Principles

2.1 Fairness and Non-Discrimination

The Principle. AI systems should treat all individuals fairly, without discrimination based on race, gender, age, disability, or other protected characteristics.

What It Means in Practice. Fairness does not mean treating everyone identically—it means ensuring that AI systems do not produce systematically worse outcomes for certain groups. This requires:

- Testing for disparate impact. Does the model produce different outcomes across demographic groups?

- Bias detection. Are there patterns in training data or model behavior that reflect historical discrimination?

- Mitigation. When bias is detected, organizations must take steps to reduce it—through data changes, model adjustments, or post-processing.

- Ongoing monitoring. Fairness is not a one-time check. Models must be monitored continuously for emerging disparities.

Common Pitfalls. Assuming a model is fair because it does not explicitly use protected attributes. Proxy variables—like zip code for race—can encode discrimination. Fairness must be tested, not assumed.
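A disparate impact test can be sketched in a few lines. The example below is a minimal illustration, not a complete fairness audit: the group labels, the decision data, and the 0.8 review threshold (a commonly cited rule of thumb) are all assumptions made for this sketch.

```python
from collections import defaultdict

def selection_rates(records):
    """Return the share of favorable outcomes per group.

    `records` is an iterable of (group, approved) pairs, where `approved` is a bool.
    """
    totals = defaultdict(int)
    favorable = defaultdict(int)
    for group, approved in records:
        totals[group] += 1
        if approved:
            favorable[group] += 1
    return {group: favorable[group] / totals[group] for group in totals}

def disparate_impact_ratio(rates):
    """Ratio of the lowest group selection rate to the highest."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group received a favorable outcome, so there is no gap to measure
    return min(rates.values()) / highest

# Hypothetical decisions: (group label, approved?)
decisions = (
    [("A", True)] * 60 + [("A", False)] * 40
    + [("B", True)] * 42 + [("B", False)] * 58
)

rates = selection_rates(decisions)
ratio = disparate_impact_ratio(rates)

REVIEW_THRESHOLD = 0.8  # assumed rule of thumb; choose a threshold suited to your context
needs_review = ratio < REVIEW_THRESHOLD
print(rates, round(ratio, 2), needs_review)
```

Note that the test uses group membership only to evaluate outcomes. The model itself never needs to see the protected attribute for a gap like this to appear, which is why proxies have to be tested for rather than assumed away.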

2.2 Transparency and Explainability

The Principle. AI systems should be transparent. People affected by AI decisions should understand how those decisions are made and have access to meaningful explanations.

What It Means in Practice. Transparency is not all-or-nothing. It requires:

- Documentation. Clear records of what the AI does, what data it uses, and how it was developed.

- Explainability. For decisions that affect individuals, provide explanations that are meaningful to the recipient—not just technical jargon.

- Disclosure. When AI is used in consequential decisions, people should know they are interacting with AI.

- Auditability. Systems should be designed so that decisions can be reviewed and audited after the fact.

Common Pitfalls. Providing explanations that are technically accurate but incomprehensible to users. A SHAP plot is not a useful explanation for a loan applicant. Explainability must be tailored to the audience.
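Auditability and documentation usually start with recording each decision in a reviewable form. The sketch below shows one possible shape for such a record. The field names, the model version string, and the feature names are hypothetical, and hashing the inputs rather than storing them raw is one design choice among several.

```python
import hashlib
import json
from datetime import datetime, timezone

def build_audit_record(model_version, features, outcome, plain_language_reasons):
    """Build a log entry that lets a reviewer reconstruct what was decided and why."""
    # Serialize with sorted keys so the same inputs always produce the same hash.
    payload = json.dumps(features, sort_keys=True)
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_version": model_version,
        "input_hash": hashlib.sha256(payload.encode("utf-8")).hexdigest(),
        "outcome": outcome,
        # Reasons written for the affected person, not for the data science team.
        "plain_language_reasons": plain_language_reasons,
    }

record = build_audit_record(
    model_version="credit-model-2024-01",  # hypothetical identifier
    features={"debt_to_income": 0.42, "credit_score": 700},
    outcome="deny",
    plain_language_reasons=["Debt-to-income ratio is above the approval limit."],
)
print(json.dumps(record, indent=2))
```

Keeping the plain-language reasons next to the technical fields means the same record can serve both the auditor and the person affected by the decision.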

2.3 Accountability and Responsibility

The Principle. There should be clear accountability for AI systems. Someone—not the AI—is responsible for outcomes.

What It Means in Practice. Accountability requires:

- Clear ownership. Every AI system must have a designated business owner responsible for its outcomes.

- Human oversight. For high-risk decisions, humans must be in the loop—able to review, override, and intervene.

- Escalation paths. Clear processes for when AI makes mistakes or produces unexpected results.

- Remediation. Mechanisms for individuals to challenge AI decisions and seek redress.

Common Pitfalls. Saying “the AI did it” as a way to avoid accountability. Organizations cannot delegate responsibility to algorithms. Humans remain accountable.
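Human oversight can be built into the decision path itself. The following is a minimal sketch of one routing policy: uncertain scores, and adverse outcomes in high-risk use cases, go to a person instead of being decided automatically. The thresholds and the `RoutedDecision` structure are assumptions for illustration only.

```python
from dataclasses import dataclass

@dataclass
class RoutedDecision:
    outcome: str      # "approve", "deny", or "needs_human_review"
    decided_by: str   # "model" or "human_reviewer"
    reason: str

def route_decision(score, high_risk, approve_at=0.8, deny_at=0.2):
    """Route a model score (0 to 1) to an automatic outcome or to a human reviewer."""
    if deny_at < score < approve_at:
        # The model is not confident either way, so a person decides.
        return RoutedDecision("needs_human_review", "human_reviewer", "model is uncertain")
    if score <= deny_at:
        if high_risk:
            # Adverse outcomes in high-risk use cases are never fully automated here.
            return RoutedDecision(
                "needs_human_review", "human_reviewer",
                "adverse outcome in a high-risk use case",
            )
        return RoutedDecision("deny", "model", "score at or below deny threshold")
    return RoutedDecision("approve", "model", "score at or above approve threshold")

print(route_decision(0.10, high_risk=True))
print(route_decision(0.95, high_risk=True))
```

Recording `decided_by` alongside the outcome keeps ownership visible: every decision can be traced either to the model's designated owner or to a named reviewer.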

2.4 Privacy and Data Governance

The Principle. AI systems should respect privacy and handle data responsibly. Individuals should have control over their data and understand how it is used.

What It Means in Practice. Privacy in AI requires:

- Data minimization. Collect only the data needed for the task—no more.

- Purpose limitation. Use data only for the purposes for which it was collected.

- Consent. When required, obtain meaningful consent for data use.

- Anonymization. Where possible, use techniques that protect individual privacy.

- Security. Protect data from breaches, unauthorized access, and misuse.

Common Pitfalls. Using sensitive data without proper safeguards. Assuming that anonymization alone is sufficient (re-identification risks exist). Ignoring data residency requirements.
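One way to make re-identification risk concrete is a k-anonymity check: count how many records share each combination of indirectly identifying fields. The sketch below uses hypothetical records and column names, and k-anonymity is only one of several possible privacy measures.

```python
from collections import Counter

def smallest_group_size(rows, quasi_identifiers):
    """Return k: the size of the smallest group sharing the same quasi-identifier values."""
    groups = Counter(tuple(row[col] for col in quasi_identifiers) for row in rows)
    return min(groups.values())

# Hypothetical records with names already removed.
rows = [
    {"zip": "94110", "age_band": "30-39", "diagnosis": "A"},
    {"zip": "94110", "age_band": "30-39", "diagnosis": "B"},
    {"zip": "94110", "age_band": "30-39", "diagnosis": "A"},
    {"zip": "94607", "age_band": "40-49", "diagnosis": "C"},
    {"zip": "94607", "age_band": "40-49", "diagnosis": "A"},
    {"zip": "94607", "age_band": "70-79", "diagnosis": "B"},
]

k_detailed = smallest_group_size(rows, ["zip", "age_band"])
k_generalized = smallest_group_size(rows, ["zip"])
print(k_detailed, k_generalized)
```

In this sample, one person is the only record in their zip code and age band, so removing names did not make that record anonymous. Dropping or coarsening a field raises k, at the cost of less detailed data.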

2.5 Safety and Robustness

The Principle. AI systems should be safe, reliable, and robust. They should perform as expected under normal conditions and degrade gracefully when faced with unexpected inputs.

What It Means in Practice. Safety and robustness require:

- Testing. Rigorous testing before deployment, including edge cases and adversarial inputs.

- Monitoring. Continuous monitoring of performance, drift, and anomalies.

- Fallbacks. Clear procedures for when AI fails or produces uncertain results.

- Security. Protection against adversarial attacks, manipulation, and tampering.

Common Pitfalls. Deploying AI without adequate testing. Assuming that performance in testing will match production. Ignoring model drift over time.
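Drift monitoring can start with a simple comparison between the data a model was trained on and the data it sees in production. The sketch below computes the Population Stability Index (PSI) over pre-binned counts. The bin counts are hypothetical, and the 0.25 alert level is a widely used rule of thumb rather than a standard, so treat both as assumptions.

```python
import math

def population_stability_index(expected_counts, actual_counts, eps=1e-6):
    """Compare two binned distributions; larger values mean a larger shift."""
    expected_total = sum(expected_counts)
    actual_total = sum(actual_counts)
    psi = 0.0
    for expected, actual in zip(expected_counts, actual_counts):
        # Floor each share at eps so empty bins do not cause log(0) or division by zero.
        expected_share = max(expected / expected_total, eps)
        actual_share = max(actual / actual_total, eps)
        psi += (actual_share - expected_share) * math.log(actual_share / expected_share)
    return psi

# Hypothetical counts of a model input, using the same five bins in both periods.
training_bins = [100, 200, 400, 200, 100]
production_bins = [50, 100, 300, 300, 250]

psi = population_stability_index(training_bins, production_bins)
ALERT_LEVEL = 0.25  # assumed rule of thumb for a significant shift
drift_alert = psi > ALERT_LEVEL
print(round(psi, 3), drift_alert)
```

A check like this runs on a schedule for each important input and for the model's output scores. An alert is a trigger for investigation and possible fallback, not proof that the model is wrong.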

2.6 Beneficence and Non-Maleficence (Do Good, Avoid Harm)

The Principle. AI systems should be designed to benefit people and society, and should avoid causing harm.

What It Means in Practice. This principle, drawn from medical ethics, requires:

- Positive impact. Consider the intended benefits of AI. Are they aligned with human flourishing?

- Harm prevention. Proactively identify potential harms—to individuals, communities, and society.

- Unintended consequences. Consider second-order effects. An AI that optimizes one metric may cause harm in another.

- Dual-use considerations. Could the technology be used for harmful purposes?

Common Pitfalls. Focusing only on intended benefits without considering potential harms. Optimizing narrow metrics without regard for broader impacts.

2.7 Human Autonomy and Agency

The Principle. AI should augment human capabilities, not replace human judgment in ways that diminish autonomy.

What It Means in Practice. Human autonomy requires:

- Meaningful human control. For critical decisions, humans should retain the ability to understand, oversee, and override.

- Choice. Where possible, individuals should have the ability to opt out of AI-based decisions or seek human review.

- Augmentation, not replacement. AI is most powerful when it augments human judgment rather than replacing it entirely.

Common Pitfalls. Automating decisions that should remain human. Designing systems that make it difficult or impossible for humans to intervene.

2.8 Sustainability

The Principle. AI systems should be developed and deployed in environmentally sustainable ways.

What It Means in Practice. AI’s environmental footprint is significant. Sustainability requires:

- Efficient architectures. Choose models and techniques that minimize energy consumption.

- Green computing. Use renewable energy for training and inference where possible.

- Consider necessity. Not every problem requires a massive AI model. Choose the right tool for the task.

- Lifecycle assessment. Consider environmental impact across the AI lifecycle.

Common Pitfalls. Training massive models for tasks that could be accomplished with simpler, more efficient approaches.
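A rough footprint estimate makes the "consider necessity" question easier to discuss. The arithmetic below multiplies hardware power by run time and a data-center overhead factor (PUE), then by the carbon intensity of the electricity. Every number in the example is a hypothetical placeholder, so substitute measured values from your own infrastructure.

```python
def training_footprint(gpu_count, gpu_power_kw, hours, pue, grid_kg_co2_per_kwh):
    """Estimate energy (kWh) and emissions (kg CO2) for one training run."""
    energy_kwh = gpu_count * gpu_power_kw * hours * pue
    emissions_kg = energy_kwh * grid_kg_co2_per_kwh
    return energy_kwh, emissions_kg

# Hypothetical comparison: a large model versus a smaller one for the same task.
large_energy, large_emissions = training_footprint(
    gpu_count=8, gpu_power_kw=0.4, hours=72, pue=1.2, grid_kg_co2_per_kwh=0.4
)
small_energy, small_emissions = training_footprint(
    gpu_count=1, gpu_power_kw=0.4, hours=6, pue=1.2, grid_kg_co2_per_kwh=0.4
)
print(round(large_energy, 1), round(large_emissions, 1))
print(round(small_energy, 1), round(small_emissions, 1))
```

An estimate like this covers training only. Inference at scale can exceed it over a system's lifetime, which is why a lifecycle view matters.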

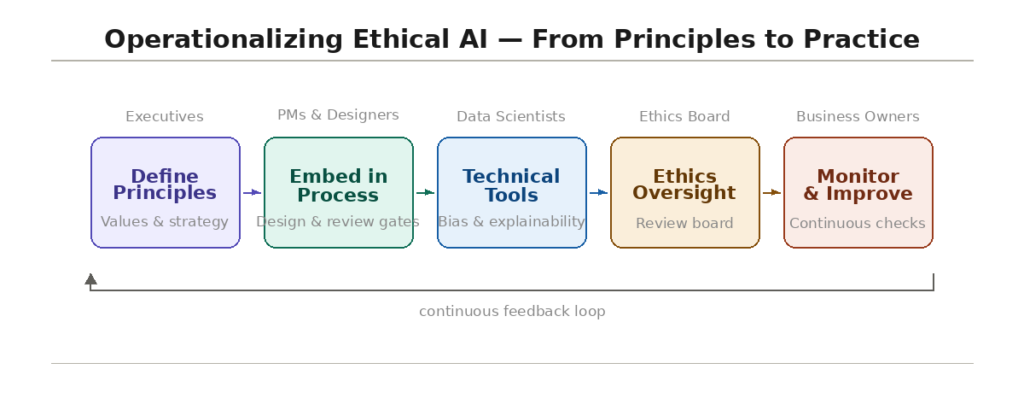

Section 3: Operationalizing Ethical Principles

3.1 From Principles to Practice

Ethical principles are meaningless if they remain abstract. Operationalizing ethics requires:

- Embedding ethics into development processes. Ethical considerations should be part of requirements, design reviews, testing, and deployment gates.

- Creating accountability. Assign responsibility for ethical outcomes. Make ethics part of job descriptions and performance reviews.

- Providing training. Everyone involved in AI—from executives to engineers—needs to understand ethical principles and how to apply them.

- Establishing oversight. A cross-functional ethics committee can review high-risk use cases, provide guidance, and escalate issues.

- Building technical tools. Develop or adopt tools for bias detection, explainability, and monitoring.

3.2 Ethical AI by Role

| Role | How to Operationalize Ethics |
|---|---|
| Executives | Set the tone from the top. Allocate resources for ethical AI. Hold teams accountable. |
| Product Managers | Define ethical requirements. Include ethics in user stories. Consider edge cases. |
| Data Scientists | Test for bias. Document data sources and decisions. Use explainable techniques where appropriate. |
| Engineers | Build monitoring and auditing capabilities. Implement security measures. Plan for fallbacks. |
| Legal and Compliance | Interpret regulations. Develop policies. Conduct risk assessments. |
| Business Owners | Own outcomes. Ensure AI is used responsibly. Escalate issues. |

3.3 Ethical AI Review Boards

Many organizations establish ethics review boards to:

- Review high-risk AI use cases before development

- Provide guidance on ethical trade-offs

- Escalate issues when principles conflict

- Monitor deployed systems for ethical issues

Review boards should include diverse perspectives—technical, legal, business, and, where relevant, external experts and community representatives.

Section 4: Applying Ethical Principles to Real-World AI

4.1 Hiring AI

Fairness. Ensure the AI does not discriminate based on race, gender, age, or disability. Test for disparate impact. Use anonymized data where possible.

Transparency. Candidates should know when AI is used in hiring decisions. They should have the ability to request human review.

Accountability. Clear ownership of outcomes. Mechanisms for candidates to challenge decisions.

Safety. Ensure the AI does not inadvertently reject qualified candidates due to flawed proxies.

4.2 Credit Scoring

Fairness. Test for discrimination. Understand whether proxies like zip code encode historical bias.

Explainability. Provide meaningful explanations to applicants. Counterfactuals (“if your debt-to-income ratio were lower, you would have been approved”) are particularly useful.

Accountability. Human review for denials. Clear appeals process.

Privacy. Use only relevant data. Protect sensitive information.
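The counterfactual explanation mentioned above can be generated by searching for the smallest change that flips a denial into an approval. The sketch below uses a deliberately simple, hypothetical approval rule (the 0.35 and 650 cutoffs are invented for illustration) and varies only the debt-to-income ratio. A real scoring model would need a more careful search.

```python
def approve(applicant):
    """Hypothetical approval rule used only for illustration."""
    return applicant["debt_to_income"] <= 0.35 and applicant["credit_score"] >= 650

def counterfactual_debt_to_income(applicant, step=0.01):
    """Return the highest debt-to-income ratio that would have led to approval.

    Returns None if the applicant was approved already, or if lowering the
    ratio alone can never change the outcome.
    """
    if approve(applicant):
        return None
    candidate = dict(applicant)
    steps = 0
    while True:
        steps += 1
        ratio = round(applicant["debt_to_income"] - steps * step, 4)
        if ratio < 0:
            return None  # no achievable ratio flips the decision
        candidate["debt_to_income"] = ratio
        if approve(candidate):
            return ratio

applicant = {"debt_to_income": 0.42, "credit_score": 700}
target = counterfactual_debt_to_income(applicant)
if target is not None:
    print(f"If your debt-to-income ratio were {target:.2f} or lower, "
          "you would have been approved.")
```

When the function returns None for a denied applicant, the honest explanation lies elsewhere (here, the credit score), and the message to the applicant should say so instead of suggesting a change that would not help.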

4.3 Healthcare AI

Beneficence. Prioritize patient outcomes. Validate models clinically.

Safety. Rigorous testing. Continuous monitoring. Fallback procedures.

Transparency. Clinicians must understand AI reasoning to trust it. Provide explanations appropriate to clinical context.

Human autonomy. AI augments clinical judgment; it does not replace it.

4.4 Customer Service Chatbots

Transparency. Customers should know they are interacting with AI.

Safety. Guardrails to prevent harmful outputs. Escalation to humans when needed.

Accountability. Clear ownership of chatbot responses. Mechanisms for customers to reach humans.

Privacy. Secure handling of customer data.

4.5 Content Moderation

Fairness. Ensure content moderation AI does not disproportionately flag certain communities.

Transparency. Clear policies. Ability to appeal decisions.

Human autonomy. Human review for high-stakes decisions (account bans, content removal).

Beneficence. Balance harm prevention with freedom of expression.

Section 5: Challenges in Ethical AI

5.1 Trade-Offs Between Principles

Ethical principles sometimes conflict. Fairness and accuracy can trade off. Transparency and privacy can conflict. Organizations must develop frameworks for navigating trade-offs transparently.

5.2 Measuring Fairness

There is no single definition of fairness. Different definitions (demographic parity, equal opportunity, individual fairness) can conflict. Organizations must choose definitions appropriate to their context and be transparent about their choices.
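The conflict between definitions is easy to show with numbers. In the hypothetical data below, a model selects 30% of each group, so demographic parity holds exactly, yet qualified people in group A are selected far less often than qualified people in group B, so equal opportunity fails. The groups, labels, and counts are invented for this sketch.

```python
def selection_rate(records, group):
    """Share of the group that received a positive prediction (demographic parity)."""
    predictions = [pred for g, _, pred in records if g == group]
    return sum(predictions) / len(predictions)

def true_positive_rate(records, group):
    """Share of qualified group members who were selected (equal opportunity)."""
    predictions = [pred for g, label, pred in records if g == group and label == 1]
    return sum(predictions) / len(predictions)

# Hypothetical records: (group, actually_qualified, model_selected)
records = (
    [("A", 1, 1)] * 30 + [("A", 1, 0)] * 20 + [("A", 0, 0)] * 50
    + [("B", 1, 1)] * 20 + [("B", 0, 1)] * 10 + [("B", 0, 0)] * 70
)

parity_gap = abs(selection_rate(records, "A") - selection_rate(records, "B"))
opportunity_gap = abs(true_positive_rate(records, "A") - true_positive_rate(records, "B"))
print(round(parity_gap, 2), round(opportunity_gap, 2))
```

Because the two groups have different shares of qualified members in this data, closing one gap tends to open the other. That is why the choice of definition has to be made deliberately and documented.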

5.3 Keeping Pace with Technology

AI evolves rapidly. New capabilities create new ethical challenges. Organizations must build processes that are adaptable—not rigid rules that become obsolete.

5.4 Cultural and Regional Differences

Ethical norms vary across cultures and regions. Organizations operating globally must navigate these differences while maintaining core principles.

5.5 Ethics-Washing

Some organizations adopt ethical principles as public relations exercises without meaningful implementation. Authentic ethics requires investment, accountability, and transparency.

Section 6: How MHTECHIN Helps with Ethical AI

Translating ethical principles into practice requires expertise in both AI technology and ethics implementation. MHTECHIN helps organizations embed ethics into their AI systems from design through deployment.

6.1 For Principle Definition and Strategy

MHTECHIN helps organizations:

- Define ethical principles. Tailored to your values, industry, and regulatory context.

- Establish governance structures. Ethics review boards, accountability frameworks.

- Develop policies. Translate principles into actionable policies and standards.

6.2 For Ethical AI Implementation

MHTECHIN provides technical capabilities for ethical AI:

- Bias detection. Tools and processes to test models for disparate impact.

- Explainability. SHAP, LIME, counterfactuals, and other techniques tailored to your audience.

- Fairness mitigation. Techniques to reduce bias while preserving accuracy.

- Monitoring. Continuous oversight for fairness drift and performance degradation.

6.3 For Training and Culture

MHTECHIN trains teams on ethical AI:

- Workshops. For executives, product managers, data scientists, and engineers.

- Case studies. Real-world examples of ethical successes and failures.

- Practical tools. How to test for bias, generate explanations, and build responsible AI.

6.4 For Regulatory Compliance

MHTECHIN helps organizations navigate the regulatory landscape:

- EU AI Act compliance. High-risk classification, documentation, transparency.

- GDPR and automated decision-making. Implement explainability for automated decisions, including the Article 22 provisions often described as a “right to explanation.”

- Sector-specific requirements. Financial services, healthcare, employment.

6.5 The MHTECHIN Approach

MHTECHIN’s ethical AI practice combines technical expertise with deep understanding of ethics and regulation. The team helps organizations move beyond principles to practice—embedding ethics into the way AI is built, deployed, and governed.

Section 7: Frequently Asked Questions

7.1 Q: What are the core ethical AI principles?

A: The core principles include fairness and non-discrimination, transparency and explainability, accountability and responsibility, privacy and data governance, safety and robustness, beneficence and non-maleficence, human autonomy and agency, and sustainability.

7.2 Q: Why is ethical AI important for businesses?

A: Ethical AI builds trust, reduces regulatory risk, prevents costly failures, enables innovation, and provides competitive advantage. Unethical AI leads to lawsuits, fines, reputational damage, and loss of customer confidence.

7.3 Q: How do you ensure AI is fair?

A: Fairness requires testing models for disparate impact across demographic groups, detecting bias in training data, mitigating bias when found, and monitoring continuously. It also requires understanding that fairness has multiple definitions—organizations must choose appropriate ones for their context.

7.4 Q: What is the difference between AI ethics and AI governance?

A: AI ethics defines the principles—what we should do. AI governance operationalizes those principles through policies, processes, and controls—how we ensure we do it. Ethics is the “what”; governance is the “how.”

7.5 Q: How do you make AI explainable?

A: Explainability can be intrinsic (using interpretable models like decision trees) or post-hoc (using techniques like SHAP, LIME, or counterfactuals). The explanation must be tailored to the audience—a SHAP plot is not useful for a loan applicant; a simple counterfactual explanation is.

7.6 Q: Can AI be ethical and still be profitable?

A: Yes. Ethical AI reduces risk, builds trust, and enables sustainable innovation. Organizations that cut corners on ethics often pay later in fines, lawsuits, and reputational damage. Ethical AI is not a cost—it is an investment.

7.7 Q: How do you handle trade-offs between ethical principles?

A: Ethical principles sometimes conflict. Organizations need frameworks for navigating trade-offs transparently. When fairness and accuracy conflict, for example, the choice should be documented and justified based on context and values.

7.8 Q: What is ethics-washing?

A: Ethics-washing is adopting ethical principles as a public relations exercise without meaningful implementation. Authentic ethics requires investment, accountability, and transparency—not just statements of principle.

7.9 Q: How do regulations like the EU AI Act affect ethical AI?

A: The EU AI Act codifies many ethical principles into law, requiring fairness, transparency, human oversight, and accountability for high-risk AI systems. Ethical AI is no longer just good practice—it is a legal requirement.

7.10 Q: How does MHTECHIN help with ethical AI?

A: MHTECHIN helps organizations define ethical principles, establish governance structures, implement technical capabilities (bias detection, explainability, monitoring), train teams, and ensure regulatory compliance. We translate principles into practice.

Section 8: Conclusion—Ethics as a Competitive Advantage

Ethical AI is not a constraint on innovation—it is a foundation for sustainable innovation. Organizations that build AI on a foundation of fairness, transparency, accountability, and respect for human autonomy are better positioned to earn trust, avoid costly failures, and create lasting value.

The principles outlined in this guide—fairness, transparency, accountability, privacy, safety, beneficence, human autonomy, and sustainability—provide a framework for responsible AI. But principles alone are not enough. They must be operationalized: embedded into processes, supported by technical tools, and reinforced by culture.

For organizations serious about AI, ethics is not an afterthought. It is a competitive advantage. In a world where AI failures make headlines, organizations that prioritize ethics stand out. They earn the trust of customers, regulators, and the public. And they build AI that truly serves people.

Ready to build AI that is both powerful and principled? Explore MHTECHIN’s ethical AI services at www.mhtechin.com. From principle definition through implementation, our team helps you build AI you can trust.

This guide is brought to you by MHTECHIN—helping organizations build AI systems that are ethical, responsible, and trustworthy. For personalized guidance on ethical AI strategy or implementation, reach out to the MHTECHIN team today.
