Introduction

Artificial intelligence is no longer a pilot project in most enterprises. It is embedded in customer service, credit decisions, hiring processes, supply chain optimization, and product development. But with this scale comes risk. A biased hiring algorithm. An unexplainable credit denial. A model that drifts into inaccuracy. A regulatory fine. Reputational damage.

Organizations are waking up to a hard truth: AI without governance is a liability.

AI governance is the framework of policies, processes, and controls that ensure AI systems are developed and deployed responsibly, ethically, and in compliance with regulations. It is not about slowing down innovation—it is about enabling innovation that is trustworthy, auditable, and sustainable.

This article explains what AI governance is, why it matters, what a robust framework includes, and how enterprises can implement governance that balances risk and innovation. Whether you are a C-suite leader, a compliance officer, a data scientist, or a business unit manager, this guide will help you understand how to govern AI effectively.

For a foundational understanding of how to make AI models transparent and accountable, you may find our guide on Explainable AI (XAI): Making Black-Box Models Transparent helpful as a starting point.

Throughout, we will highlight how MHTECHIN helps enterprises design and implement AI governance frameworks that enable responsible innovation at scale.

Section 1: What Is AI Governance?

1.1 A Simple Definition

AI governance is the system of policies, processes, roles, and controls that ensure AI systems are developed, deployed, and managed in a way that is responsible, ethical, compliant, and aligned with organizational values.

Think of it as the guardrails for AI innovation. Governance does not prevent organizations from using AI—it ensures they use AI safely, transparently, and accountably.

1.2 Why AI Governance Matters

AI governance has become a business imperative for several converging reasons:

Regulatory pressure. In 2026, AI regulation is no longer theoretical. The EU AI Act imposes binding requirements on high-risk AI systems. Sector-specific regulators in finance, healthcare, and employment are demanding transparency, explainability, and fairness. Non-compliance carries fines, legal liability, and reputational damage.

Reputational risk. A single AI failure—a biased hiring tool, a discriminatory loan algorithm, a safety-critical error—can destroy years of trust. Customers, investors, and the public hold organizations accountable for AI harms.

Operational risk. AI systems drift. They make mistakes. They can be manipulated. Without governance, organizations lack visibility into model performance, leading to costly failures.

Ethical responsibility. Beyond compliance, organizations have an ethical obligation to ensure that AI systems do not cause harm. Governance operationalizes ethical principles.

Competitive advantage. Organizations with strong AI governance can move faster. They have clear processes, documented controls, and stakeholder confidence. They are not constantly firefighting AI failures.

1.3 The Cost of Poor Governance

The consequences of inadequate AI governance are real:

- Regulatory fines. Under the EU AI Act, fines can reach €35 million or 7% of global annual turnover, whichever is higher, for prohibited practices; most other violations are capped at €15 million or 3%.

- Lawsuits. Discriminatory AI has led to class-action lawsuits and settlements.

- Operational losses. A model that drifts into inaccuracy can cause significant business losses.

- Reputational damage. Public AI failures erode trust and brand value.

- Wasted investment. AI projects that cannot pass governance reviews are abandoned after significant investment.

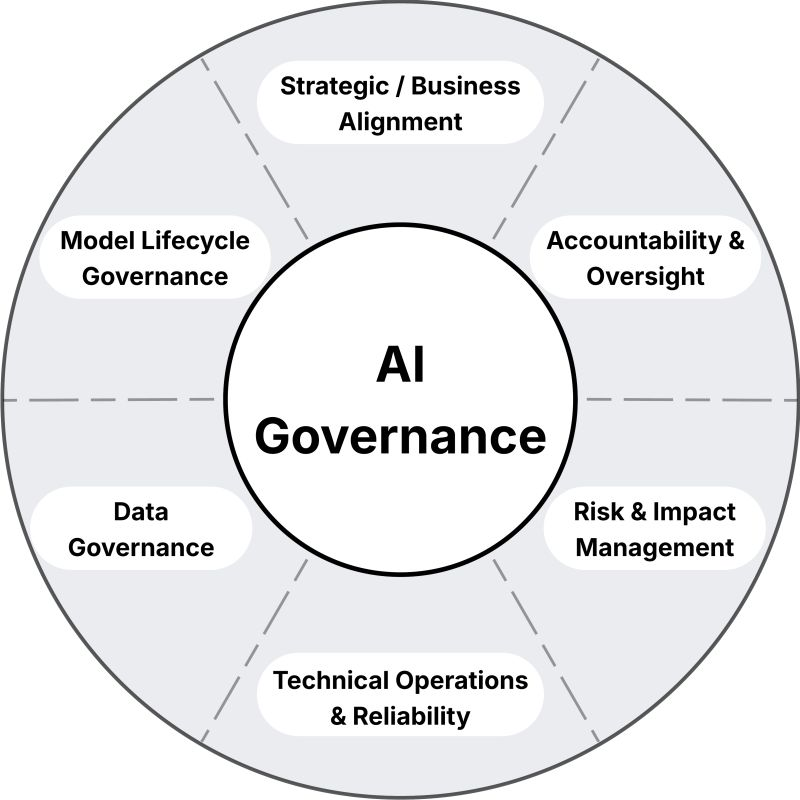

Section 2: Key Components of an AI Governance Framework

2.1 Governance Structure: Roles and Responsibilities

Effective governance starts with clear accountability. Who is responsible for what?

| Role | Responsibilities |
|---|---|
| Board of Directors | Oversight of AI strategy and risk; approval of high-risk AI use cases |
| AI Governance Committee | Cross-functional body (legal, compliance, tech, business) that reviews and approves AI initiatives |
| Chief AI Officer / AI Ethics Officer | Executive responsible for AI governance, strategy, and risk management |
| Model Risk Management | Technical team that validates models, monitors performance, and manages risk |
| Data Scientists / ML Engineers | Develop models in compliance with governance policies |
| Business Unit Owners | Accountable for AI outcomes within their domain |
| Internal Audit | Independent assessment of governance effectiveness |

2.2 AI Policy Framework

A comprehensive AI governance framework is built on documented policies that define:

- Acceptable use. What AI use cases are permitted? Which are prohibited?

- Risk classification. How are AI systems categorized by risk level? (e.g., low, medium, high, prohibited)

- Development standards. What technical standards must models meet? (explainability, fairness, robustness)

- Data governance. What data can be used? How is data privacy ensured?

- Vendor management. How are third-party AI systems vetted and monitored?

- Incident response. How are AI failures reported and remediated?

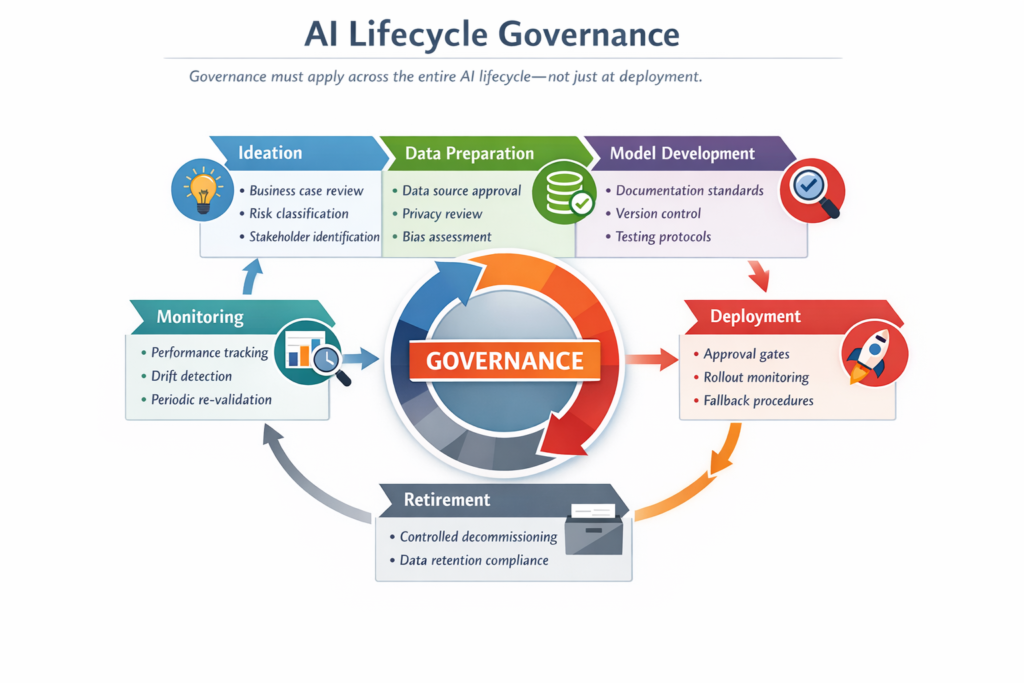

2.3 AI Lifecycle Governance

Governance must apply across the entire AI lifecycle—not just at deployment.

| Phase | Governance Activities |
|---|---|
| Ideation | Business case review; risk classification; stakeholder identification |
| Data Preparation | Data source approval; privacy review; bias assessment |
| Model Development | Documentation standards; version control; testing protocols |
| Validation | Independent model validation; fairness testing; explainability review |
| Deployment | Approval gates; rollout monitoring; fallback procedures |
| Monitoring | Performance tracking; drift detection; periodic re-validation |
| Retirement | Controlled decommissioning; data retention compliance |
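One way to make these lifecycle checkpoints enforceable is to treat each phase as a gate that opens only when its governance activities are complete. The sketch below is illustrative only: the phase and activity names are copied from the table, while the `LIFECYCLE` mapping and the `missing_activities` and `advance` helpers are hypothetical names introduced for this example.

```python
# Phases and activities mirror the lifecycle table; the gate logic itself is an assumption.
LIFECYCLE = {
    "Ideation": {"business case review", "risk classification", "stakeholder identification"},
    "Data Preparation": {"data source approval", "privacy review", "bias assessment"},
    "Model Development": {"documentation standards", "version control", "testing protocols"},
    "Validation": {"independent model validation", "fairness testing", "explainability review"},
    "Deployment": {"approval gates", "rollout monitoring", "fallback procedures"},
    "Monitoring": {"performance tracking", "drift detection", "periodic re-validation"},
    "Retirement": {"controlled decommissioning", "data retention compliance"},
}


def missing_activities(phase: str, completed: set[str]) -> set[str]:
    """Return the governance activities still outstanding for a phase."""
    return LIFECYCLE[phase] - completed


def advance(phase: str, completed: set[str]) -> str:
    """Return the next phase, but only if the current phase has nothing outstanding."""
    outstanding = missing_activities(phase, completed)
    if outstanding:
        raise ValueError(f"{phase} gate blocked; outstanding: {sorted(outstanding)}")
    phases = list(LIFECYCLE)  # dicts preserve insertion order
    position = phases.index(phase)
    if position == len(phases) - 1:
        raise ValueError(f"{phase} is the final phase")
    return phases[position + 1]
```

Calling `advance("Validation", {"fairness testing"})` raises an error that lists the two activities still open, which is the kind of message a reviewer or pipeline can act on.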

2.4 Risk Classification Framework

Not all AI systems require the same level of governance. A risk-based approach allocates scrutiny where it matters most.

| Risk Level | Description | Examples | Governance Requirements |
|---|---|---|---|
| Prohibited | Unacceptable risk; not permitted | Social scoring, real-time biometric surveillance | Not permitted |
| High Risk | Significant impact on safety, rights, opportunities | Credit scoring, hiring, medical diagnosis, critical infrastructure | Full governance: impact assessment, transparency, human oversight, post-market monitoring |
| Limited Risk | Moderate impact; transparency required | Customer service chatbots, recommendation engines | Transparency obligations; user informed of AI |
| Minimal Risk | Low impact | Spam filters, inventory optimization | Light governance; documented but not heavily scrutinized |
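A risk table like this can be encoded as a simple lookup so that every new use case receives a tier and a list of obligations at intake. The following is a minimal sketch, not a legal classification tool: the use-case tags are hypothetical identifiers derived from the examples in the table, and defaulting unknown use cases to high risk is an assumption made here so that unclassified systems get human review.

```python
from enum import Enum


class RiskLevel(Enum):
    PROHIBITED = "prohibited"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Hypothetical tags based on the example column of the risk table.
USE_CASE_LEVELS = {
    "social_scoring": RiskLevel.PROHIBITED,
    "realtime_biometric_surveillance": RiskLevel.PROHIBITED,
    "credit_scoring": RiskLevel.HIGH,
    "hiring": RiskLevel.HIGH,
    "medical_diagnosis": RiskLevel.HIGH,
    "critical_infrastructure": RiskLevel.HIGH,
    "customer_service_chatbot": RiskLevel.LIMITED,
    "recommendation_engine": RiskLevel.LIMITED,
    "spam_filter": RiskLevel.MINIMAL,
    "inventory_optimization": RiskLevel.MINIMAL,
}

# Obligations per tier, paraphrased from the governance requirements column.
REQUIREMENTS = {
    RiskLevel.PROHIBITED: ["not permitted"],
    RiskLevel.HIGH: [
        "impact assessment",
        "transparency",
        "human oversight",
        "post-market monitoring",
    ],
    RiskLevel.LIMITED: ["transparency obligations", "user informed of AI"],
    RiskLevel.MINIMAL: ["documentation"],
}


def classify(use_case: str) -> RiskLevel:
    """Look up the tier; unknown use cases default to HIGH (an assumption) pending review."""
    return USE_CASE_LEVELS.get(use_case, RiskLevel.HIGH)


def requirements_for(use_case: str) -> list[str]:
    """Return the governance obligations attached to a use case's tier."""
    return REQUIREMENTS[classify(use_case)]
```

In practice the lookup would be replaced by a questionnaire or committee decision; the point of the sketch is that the tier, once assigned, mechanically determines the obligations.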

2.5 Documentation and Auditability

Governance requires evidence. Organizations must maintain:

- Model inventory. A complete catalog of all AI systems in production, including purpose, risk classification, owner, and status.

- Development documentation. Records of data sources, preprocessing, model selection, training, and testing.

- Validation reports. Independent assessments of model performance, fairness, and robustness.

- Monitoring logs. Ongoing performance tracking, drift detection, and incident records.

- Audit trails. Who approved what, when, and why.
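As an illustration of what an inventory entry with an audit trail might look like in code, here is a minimal sketch. The `ModelRecord` and `AuditEntry` classes, their field names, and the `missing_fields` helper are all hypothetical; a real inventory would typically live in a database or a dedicated model registry.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditEntry:
    """One immutable line of the audit trail: who did what, when, and why."""
    actor: str
    action: str
    reason: str
    timestamp: datetime


@dataclass
class ModelRecord:
    """A single inventory entry covering purpose, risk classification, owner, and status."""
    name: str
    purpose: str
    risk_level: str
    owner: str
    status: str = "in_development"
    audit_trail: list[AuditEntry] = field(default_factory=list)

    def record(self, actor: str, action: str, reason: str) -> AuditEntry:
        """Append a timestamped entry to the audit trail and return it."""
        entry = AuditEntry(actor, action, reason, datetime.now(timezone.utc))
        self.audit_trail.append(entry)
        return entry


def missing_fields(record: ModelRecord) -> list[str]:
    """List the required inventory fields that were left blank."""
    required = ("purpose", "risk_level", "owner")
    return [name for name in required if not getattr(record, name).strip()]
```

Making `AuditEntry` frozen is a deliberate choice in this sketch: entries can be appended but not edited after the fact, which is what an auditor expects of a trail.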

2.6 Human Oversight and Accountability

For high-risk AI systems, human oversight is essential. Governance must define:

- Human-in-the-loop. Decisions reviewed by humans before action.

- Human-on-the-loop. Humans monitor system behavior and can intervene.

- Human-out-of-the-loop. Fully automated; reserved for low-risk applications.

Accountability also means clear ownership. Every AI system must have a designated business owner responsible for its outcomes.
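The three oversight modes differ mainly in when a human sees a decision relative to when it takes effect. The sketch below shows one possible way to express that difference; the `OversightMode` enum, the `dispatch` function, and the queue and log parameters are hypothetical names used only for illustration.

```python
from enum import Enum
from typing import Optional


class OversightMode(Enum):
    HUMAN_IN_THE_LOOP = "in"
    HUMAN_ON_THE_LOOP = "on"
    HUMAN_OUT_OF_THE_LOOP = "out"


def dispatch(
    decision: str,
    mode: OversightMode,
    review_queue: list[str],
    monitor_log: list[str],
) -> Optional[str]:
    """Return the decision if it may take effect now, or None if it awaits human review."""
    if mode is OversightMode.HUMAN_IN_THE_LOOP:
        # Nothing happens until a reviewer approves the queued decision.
        review_queue.append(decision)
        return None
    if mode is OversightMode.HUMAN_ON_THE_LOOP:
        # The decision takes effect, but is surfaced so a human can intervene.
        monitor_log.append(decision)
    return decision
```

A high-risk system such as credit scoring would typically be configured with `HUMAN_IN_THE_LOOP`, so `dispatch` returns `None` and the decision waits in the review queue.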

Section 3: Regulatory Landscape for AI Governance

3.1 EU AI Act

The EU AI Act is the world’s first comprehensive AI regulation. It classifies AI systems by risk and imposes requirements accordingly.

For high-risk AI systems, requirements include:

- Risk management system

- High-quality training data (relevant, sufficiently representative, and as free of errors as possible)

- Technical documentation and record-keeping

- Transparency and explainability

- Human oversight

- Accuracy, robustness, and cybersecurity

- Post-market monitoring

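Non-compliance with these high-risk obligations can result in fines of up to €15 million or 3% of global annual turnover, whichever is higher. Engaging in prohibited AI practices carries fines of up to €35 million or 7%.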

3.2 GDPR and the Right to Explanation

For decisions based solely on automated processing that have legal or similarly significant effects (Article 22), GDPR grants individuals the right to meaningful information about the logic involved. Organizations must therefore be able to explain how AI systems make decisions that affect individuals.

3.3 Sector-Specific Regulations

| Sector | Key Requirements |
|---|---|
| Financial Services | Model risk management (SR 11-7 in the US, similar frameworks globally); explainability; fairness testing |
| Healthcare | FDA oversight for AI-based medical devices; HIPAA compliance for data; clinical validation |
| Employment | EEOC guidance on algorithmic fairness; adverse impact analysis |
| Insurance | State-level regulations on algorithmic pricing; transparency requirements |

3.4 Emerging Standards

Industry standards are evolving rapidly:

- ISO/IEC 42001 – AI management system standard

- NIST AI Risk Management Framework – Voluntary framework for managing AI risks

- OECD AI Principles – International guidelines for responsible AI

Section 4: Implementing AI Governance in Practice

4.1 Start with a Risk Assessment

Before implementing governance, understand your current state:

- What AI systems are in use? (Often organizations discover shadow AI they did not know about.)

- What risk levels do they represent?

- What gaps exist in documentation, validation, or monitoring?

A comprehensive AI inventory is the foundation of governance.

4.2 Build Cross-Functional Governance Structures

AI governance cannot sit solely in IT or legal. Effective governance requires:

- Executive sponsorship. Governance must have leadership support.

- Cross-functional representation. Legal, compliance, risk, technology, business units.

- Clear decision rights. Who approves high-risk AI systems? Who escalates issues?

4.3 Establish Risk-Based Processes

Not every AI system needs the same level of scrutiny. Design processes that scale:

- Tiered approval. Low-risk systems may use self-assessment; high-risk systems require committee review.

- Standardized documentation. Templates for model cards, validation reports, and monitoring dashboards.

- Automated compliance checks. Where possible, automate governance checks to reduce friction.
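Tiered approval and automated checks can be combined in a small routine that decides the review path and reports missing documentation before a human ever looks at the submission. This is a sketch under stated assumptions: the artifact names per tier in `REQUIRED_ARTIFACTS` are hypothetical and would be set by your own policy, and routing limited-risk systems to self-assessment is a choice made here for illustration.

```python
# Hypothetical documentation requirements per risk tier; adjust to your own policy.
REQUIRED_ARTIFACTS = {
    "minimal": {"model_card"},
    "limited": {"model_card", "transparency_notice"},
    "high": {"model_card", "validation_report", "monitoring_dashboard"},
}


def review_path(risk_level: str) -> str:
    """High-risk systems go to committee review; lower tiers use self-assessment."""
    if risk_level not in REQUIRED_ARTIFACTS:
        raise ValueError(f"unknown risk level: {risk_level}")
    return "committee_review" if risk_level == "high" else "self_assessment"


def compliance_gaps(risk_level: str, artifacts: set[str]) -> list[str]:
    """Return the required artifacts that have not been submitted, in sorted order."""
    return sorted(REQUIRED_ARTIFACTS[risk_level] - artifacts)


def ready_for_review(risk_level: str, artifacts: set[str]) -> bool:
    """A submission is ready when no required artifact is missing."""
    return not compliance_gaps(risk_level, artifacts)
```

For example, `compliance_gaps("high", {"model_card"})` returns `["monitoring_dashboard", "validation_report"]`, which tells the submitting team exactly what the committee will ask for.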

4.4 Embed Governance into Development Workflows

Governance should not be a gate at the end of development—it should be embedded in the development lifecycle:

- Requirements. Governance requirements defined before development begins.

- Design reviews. Architecture and data choices reviewed for compliance.

- Testing. Fairness, explainability, and robustness testing integrated into CI/CD pipelines.

- Deployment gates. Approval required before production release.
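As one example of a fairness test that could run in a CI/CD pipeline, the sketch below computes an adverse impact ratio: the lowest group selection rate divided by the highest. The 0.8 threshold follows the "four-fifths" rule of thumb used in US employment selection analysis; treating it as a pass/fail gate is an assumption of this example, not a legal test, and the function names are hypothetical.

```python
def selection_rate(outcomes: list[int]) -> float:
    """Share of favorable outcomes (1 = favorable, 0 = unfavorable) in one group."""
    if not outcomes:
        raise ValueError("group has no outcomes")
    return sum(outcomes) / len(outcomes)


def adverse_impact_ratio(outcomes_by_group: dict[str, list[int]]) -> float:
    """Lowest group selection rate divided by the highest group selection rate."""
    rates = [selection_rate(outcomes) for outcomes in outcomes_by_group.values()]
    highest = max(rates)
    if highest == 0:
        return 1.0  # no group received a favorable outcome, so rates are equal
    return min(rates) / highest


def passes_fairness_gate(
    outcomes_by_group: dict[str, list[int]], threshold: float = 0.8
) -> bool:
    """True when the adverse impact ratio meets the chosen threshold."""
    return adverse_impact_ratio(outcomes_by_group) >= threshold
```

In a pipeline, a test would call `passes_fairness_gate` on held-out predictions grouped by a protected attribute and fail the build when it returns `False`. A single ratio is a coarse signal, so a failed gate should trigger investigation rather than serve as the final word on fairness.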

4.5 Monitor Continuously

Deployment is not the end of governance. Ongoing monitoring includes:

- Performance drift. Is model accuracy degrading?

- Data drift. Is input data distribution changing?

- Fairness drift. Are disparate impacts emerging?

- Incident tracking. How are errors or failures captured and addressed?
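Data drift can be quantified in several ways. One commonly used measure is the Population Stability Index (PSI), which compares the binned distribution of a feature at training time with its distribution in production. The sketch below is a minimal pure-Python version; the equal-width binning, the small floor that avoids division by zero, and the function name `population_stability_index` are choices made for this illustration.

```python
import math


def population_stability_index(
    expected: list[float], actual: list[float], bins: int = 10
) -> float:
    """PSI between a baseline sample (expected) and a recent sample (actual)."""
    if not expected or not actual:
        raise ValueError("both samples must be non-empty")
    low, high = min(expected), max(expected)
    width = (high - low) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def proportions(values: list[float]) -> list[float]:
        counts = [0] * bins
        for value in values:
            index = int((value - low) / width)
            counts[min(max(index, 0), bins - 1)] += 1  # clamp out-of-range values
        # A small floor keeps empty bins from producing log(0) or division by zero.
        return [max(count / len(values), 1e-6) for count in counts]

    baseline = proportions(expected)
    recent = proportions(actual)
    return sum((r - b) * math.log(r / b) for b, r in zip(baseline, recent))
```

A widely quoted rule of thumb treats a PSI below 0.1 as stable and above 0.25 as a significant shift, but these cutoffs are conventions rather than standards; alert thresholds should be tuned per feature and per model.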

4.6 Build a Culture of Responsible AI

Governance is not just about processes—it is about culture. Organizations should:

- Train employees. Data scientists, engineers, and business leaders need to understand governance requirements.

- Reward responsible practices. Incentivize transparency, testing, and compliance.

- Create psychological safety. Encourage reporting of issues without fear of blame.

Section 5: Challenges in AI Governance

5.1 Balancing Innovation and Control

Overly rigid governance can stifle innovation. Under-governed AI creates unacceptable risk. The challenge is designing governance that is scalable, proportionate, and agile.

5.2 Keeping Pace with Technology

AI evolves rapidly. New architectures, techniques, and use cases emerge constantly. Governance frameworks must be adaptable—principles-based rather than overly prescriptive.

5.3 Managing Shadow AI

Business units often deploy AI without involving IT or governance. This “shadow AI” creates significant risk. Organizations need mechanisms to discover, assess, and govern AI across the enterprise.

5.4 Vendor AI Governance

Many organizations use third-party AI systems—embedded in SaaS products, APIs, or outsourced models. Governance must extend to vendors, requiring transparency, auditability, and compliance.

5.5 Cross-Border Complexity

Organizations operating globally face multiple regulatory regimes. Governance frameworks must accommodate the highest applicable standards across jurisdictions.

Section 6: How MHTECHIN Helps with AI Governance

Implementing AI governance requires expertise in regulation, risk management, and AI technology. MHTECHIN helps enterprises design and implement governance frameworks that enable responsible innovation.

6.1 For Governance Strategy

MHTECHIN helps organizations:

- Assess current state. Inventory AI systems; evaluate gaps; benchmark against regulations.

- Define governance structure. Roles, responsibilities, decision rights.

- Develop policies. Acceptable use, risk classification, development standards.

- Establish processes. Approval workflows, documentation requirements, monitoring protocols.

6.2 For Regulatory Compliance

MHTECHIN helps organizations navigate the complex regulatory landscape:

- EU AI Act compliance. Risk classification, documentation, technical standards.

- GDPR and right to explanation. Explainability implementation, audit trails.

- Sector-specific requirements. Financial services model risk management, healthcare AI validation.

6.3 For Technical Implementation

Governance requires technical capabilities:

- Model inventory. Tools to catalog and track AI systems.

- Explainability. SHAP, LIME, and other techniques for transparency.

- Fairness testing. Bias detection and mitigation.

- Monitoring. Drift detection, performance tracking, alerting.

MHTECHIN implements these technical capabilities, integrating them into development workflows.

6.4 For Training and Culture

MHTECHIN trains teams on responsible AI:

- Governance fundamentals. Policies, processes, roles.

- Technical best practices. Explainability, fairness, robustness.

- Incident response. How to identify, report, and remediate issues.

6.5 The MHTECHIN Approach

MHTECHIN’s AI governance practice combines regulatory expertise with technical depth. The team understands that governance is not a one-time project—it is an ongoing capability that must scale with AI adoption. For enterprises serious about responsible AI, MHTECHIN provides the expertise to build governance that enables innovation while managing risk.

Section 7: Frequently Asked Questions

7.1 Q: What is AI governance in simple terms?

A: AI governance is the framework of policies, processes, and controls that ensure AI systems are developed and deployed responsibly, ethically, and in compliance with regulations. It is about making sure AI does what it should—and not what it should not.

7.2 Q: Why do enterprises need AI governance?

A: Enterprises need AI governance to manage risk, comply with regulations, build trust, and ensure accountability. Without governance, organizations face regulatory fines, reputational damage, operational failures, and legal liability.

7.3 Q: What are the key components of an AI governance framework?

A: Key components include governance structure (roles and responsibilities), policies, risk classification, lifecycle governance, documentation, human oversight, and monitoring.

7.4 Q: How does the EU AI Act affect AI governance?

A: The EU AI Act imposes binding requirements on high-risk AI systems, including risk management, data quality, documentation, transparency, human oversight, and post-market monitoring. Organizations that place such systems on the EU market or use them in the EU must comply.

7.5 Q: Who should be responsible for AI governance?

A: AI governance requires cross-functional collaboration. The board provides oversight. An AI governance committee reviews high-risk use cases. A Chief AI Officer or AI Ethics Officer leads strategy. Model risk management validates models. Business owners are accountable for outcomes.

7.6 Q: What is the difference between AI governance and AI ethics?

A: AI ethics defines the principles—fairness, transparency, accountability. AI governance operationalizes those principles through policies, processes, and controls. Ethics is the “what”; governance is the “how.”

7.7 Q: How do you classify AI risk?

A: Common frameworks classify AI by risk level: prohibited (unacceptable risk), high risk (significant impact on safety or rights), limited risk (transparency required), and minimal risk (low impact). Classification determines governance requirements.

7.8 Q: What is shadow AI and why is it a problem?

A: Shadow AI refers to AI systems deployed without IT or governance oversight. It creates significant risk because these systems may not comply with regulations, may be undocumented, and may not be monitored for performance or bias.

7.9 Q: How does AI governance work with third-party AI vendors?

A: Organizations must extend governance to third-party AI systems. This includes vendor due diligence, contractual requirements for transparency and auditability, and ongoing monitoring of vendor AI performance and compliance.

7.10 Q: How does MHTECHIN help with AI governance?

A: MHTECHIN helps enterprises design and implement AI governance frameworks—strategy, policy, processes, technical implementation, and training. We provide expertise in regulation, risk management, and AI technology to enable responsible innovation at scale.

Section 8: Conclusion—Governing AI for the Long Term

AI is no longer experimental. It is embedded in the core operations of enterprises across every industry. But with scale comes risk. A single AI failure can trigger regulatory fines, reputational damage, and operational disruption.

AI governance is not about slowing innovation. It is about enabling innovation that is responsible, transparent, and sustainable. Organizations with strong governance can move faster because they have clear processes, documented controls, and stakeholder confidence. They are not constantly firefighting AI failures.

For enterprises serious about AI, governance is not optional. It is a competitive imperative. The organizations that succeed in the AI era will be those that balance ambition with accountability—pursuing the benefits of AI while managing the risks.

Ready to build AI governance that enables responsible innovation? Explore MHTECHIN’s AI governance services at www.mhtechin.com. From strategy through implementation, our team helps you govern AI for the long term.

This guide is brought to you by MHTECHIN—helping enterprises build AI governance frameworks that balance innovation with accountability. For personalized guidance on AI governance strategy or implementation, reach out to the MHTECHIN team today.
