Introduction

Imagine a warehouse where robots don’t just follow pre-programmed paths but actively coordinate with each other, adapt to changing inventory, predict maintenance needs, and even negotiate with human workers about task priorities. Imagine manufacturing lines where robotic arms learn new assembly tasks by watching demonstrations, then optimize their movements for speed and precision. Imagine service robots that understand natural language instructions, navigate complex environments, and collaborate seamlessly with humans.

This is the reality of agentic AI in robotics in 2026. The convergence of large language models, multi-agent systems, and advanced robotics is creating a new generation of autonomous machines that can perceive, reason, plan, and act in the physical world—bridging the gap between digital intelligence and physical action.

According to recent industry data, the global market for AI-powered robotics is projected to reach $80 billion by 2028, with agentic architectures driving the next wave of innovation. From manufacturing and logistics to healthcare and service industries, autonomous robots are moving beyond isolated automation to become collaborative, adaptive team members.

In this comprehensive guide, you’ll learn:

- How agentic AI transforms robotics from programmed machines to autonomous agents

- The architecture of agentic robots—from perception to action

- Real-world applications across industries

- How multi-robot systems coordinate and collaborate

- The role of foundation models in robotic reasoning

- Safety, ethics, and human-robot collaboration

Part 1: The Evolution of Robotics

From Programmed Machines to Autonomous Agents

Figure 1: The evolution of robotics – from programmed machines to autonomous agents

| Era | Characteristics | Capabilities | Limitations |
|---|---|---|---|
| Industrial Robots | Pre-programmed, repetitive | High speed, precision | No adaptability |
| Collaborative Robots | Safe human interaction | Force sensing, safety features | Limited reasoning |
| AI-Enabled Robots | Computer vision, ML models | Object recognition, basic learning | Narrow capabilities |
| Agentic Robots | LLM reasoning, multi-agent coordination | Planning, adaptation, collaboration | Emerging technology |

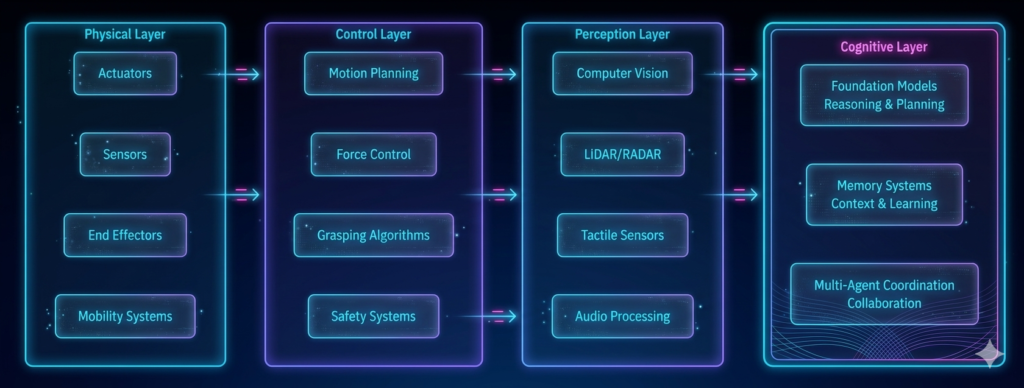

The Agentic Robotics Stack

Part 2: The Architecture of Agentic Robots

Core Capabilities

| Capability | Description | AI Component |
|---|---|---|
| Perception | Understanding environment through sensors | Computer vision, sensor fusion, LLM interpretation |
| Reasoning | Making decisions about actions | LLM-based planning, hierarchical task networks |
| Memory | Storing experiences and learning | Vector databases, episodic memory |
| Action | Executing physical movements | Motion planning, control algorithms |
| Coordination | Working with other agents | Multi-agent communication protocols |
| Adaptation | Learning from outcomes | Reinforcement learning, feedback loops |
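The Memory row above pairs vector storage with episodic recall. As a rough illustration of that idea, the sketch below stores past episodes alongside an embedding and recalls the most similar ones by cosine similarity. The `EpisodicMemory` class and its methods are hypothetical names, and the two-dimensional embeddings in the usage note are hand-made stand-ins for what an embedding model would produce.

```python
import math


def _cosine(a, b):
    """Cosine similarity between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class EpisodicMemory:
    """Hypothetical in-memory episode store; a vector database would replace the list."""

    def __init__(self):
        self._episodes = []  # list of (embedding, record) pairs

    def add(self, embedding, record):
        """Store one episode with its embedding."""
        self._episodes.append((list(embedding), record))

    def recall(self, query, k=1):
        """Return the k stored records whose embeddings are most similar to the query."""
        ranked = sorted(
            self._episodes,
            key=lambda episode: _cosine(query, episode[0]),
            reverse=True,
        )
        return [record for _, record in ranked[:k]]
```

For example, after `memory.add([1.0, 0.0], "grasp failed on wet surface")` and `memory.add([0.0, 1.0], "aisle 4 blocked")`, a query embedding close to the first vector recalls the grasp failure, which a planner could then include in its prompt.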

The Agentic Robot Loop

```python
import json


class AgenticRobot:
    """Core loop for agentic robot control.

    MemorySystem, WorldModel, and the helpers not shown here
    (_describe_visual, _get_robot_state, _learn, _coordinate)
    are assumed to be defined elsewhere.
    """

    def __init__(self, robot_hardware, llm_model):
        self.hardware = robot_hardware
        self.llm = llm_model
        self.memory = MemorySystem()
        self.world_model = WorldModel()

    def run_loop(self):
        """Main agentic loop for robot."""
        while True:
            # 1. PERCEIVE - Gather sensor data
            perception = self._perceive()
            # 2. UNDERSTAND - Interpret environment
            understanding = self._understand(perception)
            # 3. REASON - Plan actions
            plan = self._reason(understanding)
            # 4. ACT - Execute physical actions
            results = self._act(plan)
            # 5. LEARN - Update from outcomes
            self._learn(results)
            # 6. COORDINATE - Communicate with other agents
            self._coordinate()

    def _perceive(self) -> dict:
        """Gather and fuse sensor data."""
        return {
            "vision": self.hardware.camera.get_frame(),
            "lidar": self.hardware.lidar.get_point_cloud(),
            "force": self.hardware.force_sensor.get_readings(),
            "proprioception": self.hardware.get_joint_states(),
        }

    def _understand(self, perception: dict) -> dict:
        """Interpret sensor data into semantic understanding."""
        prompt = f"""
        Analyze this robot perception:
        Visual: {self._describe_visual(perception['vision'])}
        Objects detected: {perception.get('objects', [])}
        Current state: {self._get_robot_state()}
        Task context: {self.memory.get_current_task()}

        Return a JSON object with:
        - Scene understanding
        - Object relationships
        - Obstacles and hazards
        - Current progress
        """
        understanding = self.llm.generate(prompt)
        # The response is parsed as JSON, so the prompt asks for JSON explicitly
        return json.loads(understanding)

    def _reason(self, understanding: dict) -> list:
        """Plan sequence of physical actions."""
        prompt = f"""
        Based on this understanding, plan the next actions:
        Understanding: {understanding}
        Available actions: {self.hardware.get_available_actions()}
        Task goal: {self.memory.get_goal()}

        Return JSON list of actions with parameters.
        """
        plan = self.llm.generate(prompt)
        return json.loads(plan)

    def _act(self, plan: list) -> dict:
        """Execute planned physical actions."""
        results = []
        for action in plan:
            if action["type"] == "move_to":
                result = self.hardware.move_to(
                    target=action["position"],
                    speed=action.get("speed", 0.5),
                )
            elif action["type"] == "grasp":
                result = self.hardware.grasp(
                    object_id=action["object"],
                    force=action.get("force", 0.5),
                )
            elif action["type"] == "place":
                result = self.hardware.place(
                    location=action["location"],
                )
            else:
                # Unknown action type: record a failure rather than
                # reusing the previous (or an undefined) result
                result = {"success": False, "error": f"unknown action type: {action['type']}"}
            results.append({
                "action": action,
                "result": result,
                "success": result.get("success", False),
            })
        return {"actions": results, "overall_success": all(r["success"] for r in results)}
```
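Both `_understand` and `_reason` above hand raw model output straight to `json.loads`, which raises an exception if the model wraps its answer in prose or returns an unexpected shape. The following is a minimal sketch of a defensive parser, not part of the original design: the function name `parse_plan`, the `ALLOWED_ACTIONS` schema (inferred from the three action types handled in `_act`), and the choice to return an empty plan on any problem are all illustrative assumptions.

```python
import json

# Hypothetical schema: action type -> required parameter names,
# mirroring the three action types handled in _act.
ALLOWED_ACTIONS = {
    "move_to": {"position"},
    "grasp": {"object"},
    "place": {"location"},
}


def parse_plan(raw: str) -> list:
    """Parse LLM output into a validated action list; return [] on any problem."""
    # Models often surround JSON with prose, so take the outermost [...] span.
    start, end = raw.find("["), raw.rfind("]")
    if start == -1 or end <= start:
        return []
    try:
        plan = json.loads(raw[start:end + 1])
    except json.JSONDecodeError:
        return []
    if not isinstance(plan, list):
        return []
    for action in plan:
        if not isinstance(action, dict):
            return []
        action_type = action.get("type")
        if not isinstance(action_type, str) or action_type not in ALLOWED_ACTIONS:
            return []
        # Every required parameter for this action type must be present.
        if not ALLOWED_ACTIONS[action_type].issubset(action.keys()):
            return []
    return plan
```

Returning an empty plan means the robot does nothing on a malformed response, which is usually a safer default for physical hardware than executing a partially valid plan.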

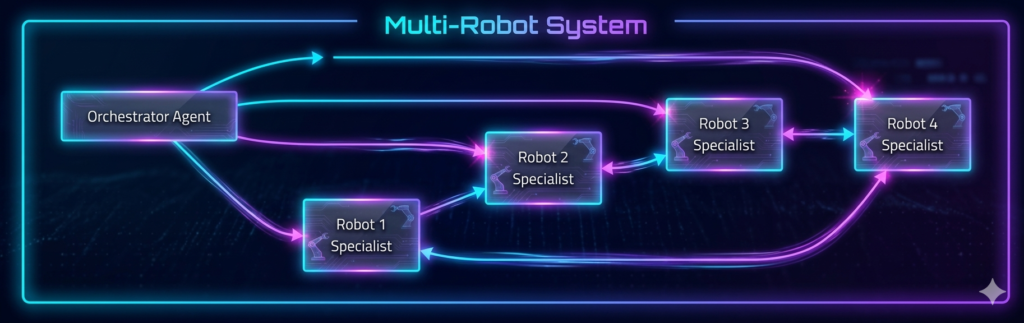

Part 3: Multi-Robot Agent Systems

Robot Swarms and Teams

*Figure 2: Multi-robot agent coordination architecture*

Robot Coordination Patterns

| Pattern | Description | Example | Use Case |
|---|---|---|---|
| Leader-Follower | One robot directs others | Warehouse lead robot coordinates pickers | Logistics |
| Swarm | Decentralized, emergent behavior | Drone swarm for search and rescue | Exploration |
| Hierarchical | Layered decision-making | Factory line with supervisory robot | Manufacturing |
| Collaborative | Equal partners sharing tasks | Two robots assembling large object | Assembly |

Implementation: Multi-Robot Coordination

```python
import json


class MultiRobotCoordinator:
    """Coordinate multiple robots as agent team.

    RobotCommunicationNetwork, TaskAllocator, and the helpers not shown here
    (_analyze_task, _can_perform, _assign_single, _decompose_task)
    are assumed to be defined elsewhere.
    """

    def __init__(self, llm_model=None):
        self.robots = {}
        self.llm = llm_model  # used by handle_conflict
        self.communication = RobotCommunicationNetwork()
        self.task_allocator = TaskAllocator()

    def add_robot(self, robot_id, capabilities):
        """Register robot with system."""
        self.robots[robot_id] = {
            "id": robot_id,
            "capabilities": capabilities,
            "status": "idle",
            "position": None,
            "battery": 100,
        }

    def assign_task(self, task):
        """Assign task to appropriate robot(s)."""
        # Analyze task requirements
        requirements = self._analyze_task(task)

        # Find capable robots
        capable_robots = [
            robot for robot in self.robots.values()
            if self._can_perform(robot, requirements)
        ]

        # Allocate task
        if not capable_robots:
            # No robot can do this task; avoids a division by zero in _assign_team
            return {}
        if len(capable_robots) == 1:
            return self._assign_single(capable_robots[0], task)
        return self._assign_team(capable_robots, task)

    def _assign_team(self, robots, task):
        """Assign task to multiple robots."""
        # Decompose task into subtasks
        subtasks = self._decompose_task(task)

        # Allocate subtasks to robots in round-robin order
        assignments = {}
        for i, subtask in enumerate(subtasks):
            robot = robots[i % len(robots)]
            assignments.setdefault(robot["id"], []).append(subtask)

        # Send coordination messages
        for robot_id, robot_subtasks in assignments.items():
            self.communication.send(robot_id, {
                "type": "team_assignment",
                "subtasks": robot_subtasks,
                "coordinator": True,
            })
        return assignments

    def handle_conflict(self, conflict):
        """Resolve conflicts between robots."""
        prompt = f"""
        Resolve this robot conflict:
        Robots involved: {conflict['robots']}
        Conflict type: {conflict['type']}
        Resources: {conflict['resources']}

        Return resolution strategy as JSON.
        """
        resolution = self.llm.generate(prompt)
        return json.loads(resolution)
```
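The round-robin allocation in `_assign_team` ignores the `position`, `battery`, and `status` fields that `add_robot` records. As one possible refinement, the sketch below picks the nearest idle robot that has the required capabilities and enough charge. The function name `pick_robot`, the `min_battery` threshold of 20, and the assumption that capabilities are stored as a set and positions as coordinate tuples are all illustrative, not taken from the original code.

```python
import math


def pick_robot(robots: dict, required: set, target: tuple, min_battery: int = 20):
    """Return the id of the nearest eligible robot, or None if none qualifies.

    robots maps robot_id -> record shaped like the ones add_robot creates,
    with "capabilities" assumed to be a set and "position" a coordinate tuple.
    """
    best_id, best_dist = None, math.inf
    for robot_id, robot in robots.items():
        if robot["status"] != "idle" or robot["battery"] < min_battery:
            continue
        if not required.issubset(robot["capabilities"]):
            continue
        if robot["position"] is None:
            continue  # position unknown: skip rather than guess
        dist = math.dist(robot["position"], target)
        if dist < best_dist:
            best_id, best_dist = robot_id, dist
    return best_id
```

Straight-line distance is a simplification; in a real facility the cost would more plausibly come from the path planner.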

Part 4: Real-World Applications

Application 1: Autonomous Warehousing

| Task | Traditional Approach | Agentic Approach |
|---|---|---|
| Navigation | Pre-programmed paths | Dynamic path planning with real-time adaptation |
| Picking | Barcode scanning | Vision-based object recognition, adaptive grasping |
| Inventory | Scheduled counts | Continuous monitoring, predictive replenishment |
| Coordination | Centralized control | Distributed negotiation between robots |
| Maintenance | Scheduled service | Predictive maintenance based on usage patterns |
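The "distributed negotiation" entry in the table above can be approximated with an auction, one common approach to multi-robot task allocation: each robot bids its estimated cost for a task and the lowest bid wins. The sketch below simulates this in a single process for clarity; in an actual distributed deployment each robot would compute and broadcast its own bid. The function name `run_auction`, the Manhattan-distance bid, and the grid coordinates are assumptions made for illustration.

```python
def run_auction(tasks: dict, robot_positions: dict) -> dict:
    """Sequential single-item auction: each task goes to the lowest bidder.

    tasks maps task_id -> (x, y); robot_positions maps robot_id -> (x, y).
    Returns task_id -> winning robot_id.
    """
    if not robot_positions:
        return {}
    positions = dict(robot_positions)  # copy, since winners move to their task
    assignments = {}
    for task_id, location in tasks.items():
        # Each robot's bid is its Manhattan travel distance to the task.
        bids = {
            robot_id: abs(pos[0] - location[0]) + abs(pos[1] - location[1])
            for robot_id, pos in positions.items()
        }
        winner = min(bids, key=bids.get)
        assignments[task_id] = winner
        # The winner is assumed to end up at the task location,
        # which changes its bids for later tasks.
        positions[winner] = location
    return assignments
```

Auctioning tasks one at a time is simple but order-dependent; it does not guarantee the globally cheapest assignment.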

Case Study: A major e-commerce warehouse deployed agentic robots that reduced picking time by 40%, increased storage density by 25%, and achieved 99.5% order accuracy.

Application 2: Manufacturing and Assembly

```python
class ManufacturingAgent:
    """Agentic robot for flexible manufacturing.

    The underscore-prefixed helpers are assumed to be defined elsewhere.
    """

    def __init__(self, assembly_cell):
        self.cell = assembly_cell
        self.skill_library = self._load_skills()

    def learn_assembly(self, demonstration):
        """Learn new assembly task from demonstration."""
        # Watch demonstration
        trajectory = self._capture_demonstration(demonstration)
        # Extract key steps
        steps = self._extract_steps(trajectory)
        # Generate skill program
        skill_program = self._generate_skill(steps)
        # Simulate and validate
        validated = self._validate_skill(skill_program)
        # Add to skill library
        self.skill_library.append(validated)
        return validated

    def execute_assembly(self, task_spec):
        """Execute assembly task with adaptation."""
        # Retrieve relevant skills
        skills = self._select_skills(task_spec)
        # Plan execution sequence
        plan = self._plan_sequence(skills, task_spec)

        # Execute with feedback
        results = []
        for step in plan:
            result = self._execute_step(step)
            # Adapt if needed: retry once with an adapted step
            if not result["success"]:
                adapted = self._adapt_plan(step, result)
                result = self._execute_step(adapted)
            # Record the final outcome for this step, so a successful
            # retry counts as a success
            results.append(result)
        return {"success": all(r["success"] for r in results)}
```

Application 3: Healthcare and Service Robotics

| Application | Agentic Capabilities | Impact |
|---|---|---|
| Surgical Assistance | Adaptive instrument control, tissue recognition | Reduced complication rates |
| Patient Monitoring | Vital sign tracking, fall detection, communication | Improved response times |
| Rehabilitation | Personalized exercise coaching, progress tracking | Better patient outcomes |
| Hospital Logistics | Autonomous delivery, navigation, coordination | Reduced staff workload |

Application 4: Search and Rescue

```python
class SearchRescueSwarm:
    """Multi-robot swarm for search and rescue.

    MeshNetwork, Drone, and the underscore-prefixed helpers
    are assumed to be defined elsewhere.
    """

    def __init__(self):
        self.drones = []
        self.ground_robots = []
        self.communications = MeshNetwork()

    def deploy(self, search_area):
        """Deploy swarm for search mission."""
        # Divide area into zones
        zones = self._partition_area(search_area)

        # Assign robots to zones. Keep the robot objects (not just their ids)
        # so that deploy() can be called on them below.
        assignments = []
        for zone in zones:
            robot = self._select_robot(zone)
            assignments.append((robot, zone))

        # Deploy with coordination
        for robot, zone in assignments:
            robot.deploy(zone, {
                "coverage_pattern": "lawnmower",
                "altitude": 30 if isinstance(robot, Drone) else 0,
                "communication_relay": self._get_relay_robot(),
            })

    def detect_victim(self, robot_id, location, sensor_data):
        """Handle victim detection."""
        # Verify detection
        verified = self._verify_detection(sensor_data)
        if verified:
            # Mark location
            self._mark_location(location)
            # Redirect nearby robots
            self._redirect_robots(location)
            # Notify command center
            self._notify_center({
                "type": "victim_found",
                "location": location,
                "confidence": verified["confidence"],
            })

    def coordinate_rescue(self, victims):
        """Coordinate multi-robot rescue operations."""
        # Prioritize victims
        priorities = self._prioritize_victims(victims)

        # Assign rescue resources
        for victim in priorities:
            # Find closest robot with rescue capability
            robot = self._find_closest_rescue_robot(victim.location)
            # Guide robot to victim
            robot.navigate_to(victim.location)
            # Provide medical guidance
            robot.provide_assistance(victim.condition)
```
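The `deploy` method above requests a `"lawnmower"` coverage pattern without showing what that means. A lawnmower (boustrophedon) sweep covers a rectangle in parallel rows, reversing direction on each row. The sketch below generates the waypoints for one rectangular zone; the function name `lawnmower_waypoints` and the axis-aligned rectangle representation are assumptions, since the original does not define how zones are represented.

```python
def lawnmower_waypoints(x_min, x_max, y_min, y_max, spacing):
    """Generate back-and-forth sweep waypoints over an axis-aligned rectangle.

    Rows run along x and are separated by `spacing` along y.
    """
    if spacing <= 0:
        raise ValueError("spacing must be positive")
    # Number of row gaps that fit inside the rectangle.
    row_count = int((y_max - y_min) // spacing)
    waypoints = []
    for i in range(row_count + 1):
        y = y_min + i * spacing
        row = [(x_min, y), (x_max, y)]
        if i % 2 == 1:
            row.reverse()  # odd rows run right to left
        waypoints.extend(row)
    return waypoints
```

In practice the row spacing would be chosen from the sensor footprint, for example somewhat less than the camera's ground coverage width at the flight altitude, so that adjacent rows overlap.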

Part 5: Foundation Models for Robotics

LLMs as Robotic Brains

Large language models are becoming the cognitive core for agentic robots, enabling:

| Capability | How LLMs Enable It |
|---|---|
| Natural Language Instruction | Understand complex commands like “pick up the red cube and place it next to the blue box” |
| Task Decomposition | Break “clean the room” into “pick up objects, vacuum floor, organize furniture” |
| Common Sense Reasoning | Know that a cup should be placed upright, not upside down |
| Error Recovery | Understand why a grasp failed and try alternative approach |
| Human-Robot Communication | Explain actions, ask clarifying questions |

Vision-Language-Action Models

```python
class VisionLanguageActionModel:
    """Multimodal model for robotic control.

    CLIPVisionModel, LLM, and DiffusionPolicy stand in for a vision encoder,
    a language encoder, and an action decoder; they and the
    underscore-prefixed helpers are assumed to be defined elsewhere.
    """

    def __init__(self):
        self.vision_encoder = CLIPVisionModel()
        self.language_encoder = LLM()
        self.action_decoder = DiffusionPolicy()
        # Filled by _store_demonstration, consumed by learn_from_demonstration
        self.demonstration_dataset = []

    def predict_action(self, image, instruction):
        """Predict next action from visual and language input."""
        # Encode image
        visual_features = self.vision_encoder(image)
        # Encode instruction
        language_features = self.language_encoder.encode(instruction)
        # Combine modalities
        combined = self._fuse_features(visual_features, language_features)
        # Decode action
        action = self.action_decoder(combined)
        return {
            "type": action["type"],
            "parameters": action["params"],
            "confidence": action["confidence"],
        }

    def learn_from_demonstration(self, demonstrations):
        """Fine-tune model on robot demonstrations."""
        for demo in demonstrations:
            for step in demo.steps:
                # Store demonstration
                self._store_demonstration(step.image, step.instruction, step.action)
        # Update action decoder once all demonstrations are stored
        self.action_decoder.fine_tune(self.demonstration_dataset)
```

Part 6: Safety and Human-Robot Collaboration

Safety Framework for Agentic Robots

Safety Implementation

```python
import time


class RobotSafetySystem:
    """Multi-layer safety for agentic robots.

    EmergencyStop, CollisionDetector, RiskAssessor, and the
    underscore-prefixed helpers are assumed to be defined elsewhere.
    """

    def __init__(self, robot):
        self.robot = robot
        self.emergency_stop = EmergencyStop()
        self.collision_avoidance = CollisionDetector()
        self.risk_assessor = RiskAssessor()

    def validate_action(self, action):
        """Validate action before execution. Returns (ok, reason)."""
        # Check hardware limits
        if not self._within_limits(action):
            return False, "Hardware limit exceeded"
        # Check collision risk
        if self.collision_avoidance.would_collide(action):
            return False, "Collision risk detected"
        # Assess risk level
        risk = self.risk_assessor.assess(action)
        if risk > 0.8:
            return False, f"Unacceptable risk level: {risk}"
        return True, "Action validated"

    def monitor_operation(self):
        """Continuous safety monitoring."""
        while self.robot.operating:
            # Check for human presence
            if self._human_too_close():
                self.robot.reduce_speed()
            # Check for anomalies
            if self._detect_anomaly():
                self.emergency_stop.activate()
            # Check system health
            if self._system_degraded():
                self.robot.enter_safe_mode()
            # Poll interval (an assumed value) so the loop does not spin the CPU
            time.sleep(0.01)
```
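The monitoring loop above calls `reduce_speed()` when a human is too close, but leaves open how much to slow down. One simple policy is to scale the allowed speed with the measured separation distance: full speed beyond an outer threshold, a full stop inside an inner threshold, and a linear ramp in between. The sketch below illustrates that policy only; the function name `allowed_speed` and every threshold value are placeholders and are not taken from any safety standard. Real limits would come from a risk assessment of the specific installation.

```python
def allowed_speed(distance_m, stop_dist=0.5, slow_dist=2.0, max_speed=1.0):
    """Return the speed limit for a given human-robot separation distance.

    Below stop_dist the robot must stop; above slow_dist it may run at
    max_speed; in between the limit ramps up linearly.
    All default values are illustrative placeholders.
    """
    if slow_dist <= stop_dist:
        raise ValueError("slow_dist must be greater than stop_dist")
    if distance_m <= stop_dist:
        return 0.0
    if distance_m >= slow_dist:
        return max_speed
    return max_speed * (distance_m - stop_dist) / (slow_dist - stop_dist)
```

A continuous ramp avoids the abrupt speed changes that a single "too close" threshold would cause when a person hovers near the boundary.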

Human-Robot Collaboration Patterns

| Pattern | Description | Example |
|---|---|---|
| Co-Working | Humans and robots share space safely | Assembly line with collaborative robots |
| Sequential | Handoff between human and robot | Robot prepares parts, human assembles |
| Assisted | Robot augments human capability | Exoskeleton, surgical assistance |
| Supervised | Human monitors multiple robots | Warehouse control room |
| Collaborative | Joint problem-solving | Robot and human co-design |

Part 7: MHTECHIN’s Expertise in Agentic Robotics

At MHTECHIN, we specialize in building agentic robotic systems that bridge digital intelligence and physical action. Our expertise includes:

- Autonomous Robot Development: Custom agentic robots for manufacturing, logistics, and service

- Multi-Robot Coordination: Swarm intelligence, task allocation, conflict resolution

- Foundation Model Integration: LLM-based reasoning for robotic control

- Safety Systems: Multi-layer safety, human-robot collaboration

- Simulation to Reality: Transfer learning from simulation to physical robots

MHTECHIN helps organizations deploy intelligent, autonomous robots that work safely alongside humans.

Conclusion

Agentic AI is transforming robotics from programmed machines to autonomous agents capable of reasoning, planning, and adapting. By bridging digital intelligence with physical action, agentic robots are unlocking new capabilities across industries.

Key Takeaways:

- Agentic robots perceive, reason, plan, and act in physical environments

- Multi-robot systems coordinate through distributed intelligence

- Foundation models provide reasoning, common sense, and natural language understanding

- Safety frameworks are essential for human-robot collaboration

- Real-world applications span manufacturing, logistics, healthcare, and search and rescue

The future of robotics is agentic—machines that don’t just follow programs but understand goals, adapt to situations, and collaborate with humans as teammates.

Frequently Asked Questions (FAQ)

Q1: What is agentic AI in robotics?

Agentic AI in robotics refers to robots that use AI agents for perception, reasoning, planning, and action, enabling them to operate autonomously, adapt to new situations, and collaborate with other agents.

Q2: How do agentic robots differ from traditional robots?

Traditional robots follow pre-programmed instructions. Agentic robots perceive their environment, reason about goals, plan actions, and learn from outcomes.

Q3: Can agentic robots work with humans safely?

Yes. Modern agentic robots incorporate multi-layer safety systems, force limiting, collision detection, and risk assessment to enable safe human-robot collaboration.

Q4: How do multiple robots coordinate?

Multi-robot systems use communication protocols, task allocation algorithms, and distributed reasoning to coordinate actions, either under a coordinating robot (leader-follower and hierarchical patterns) or without central control (swarm patterns).

Q5: What role do LLMs play in robotics?

LLMs provide common sense reasoning, natural language understanding, task decomposition, and error recovery, acting as the cognitive layer for robots.

Q6: Can robots learn new tasks?

Yes. Agentic robots can learn from demonstration, simulation, reinforcement learning, and human feedback to acquire new skills.

Q7: What industries are adopting agentic robotics?

Manufacturing, logistics, healthcare, agriculture, search and rescue, and service industries are leading adopters.

Q8: How do I get started with agentic robotics?

Start with simulation environments like Gazebo or NVIDIA Isaac Sim, integrate foundation models for reasoning, and gradually deploy to physical robots with safety systems.
