Introduction

Imagine a development team where AI agents do more than suggest code: they write it, test it, debug it, review it, and deploy it. A developer describes a feature in natural language, and a coordinated team of specialized agents plans the architecture, writes the implementation, creates tests, identifies bugs, and prepares the pull request. This isn’t science fiction. It’s the reality of agentic AI for software development in 2026.

The software development landscape is undergoing a seismic shift. According to recent industry data, AI-assisted development has increased developer productivity by 30-50%, with autonomous agents now handling tasks that previously required entire teams. Tools like Cursor 2.0 run up to 8 parallel coding agents, Claude Code enables 10+ simultaneous instances for coordinated development, and enterprises are deploying multi-agent systems that write, review, and deploy code with minimal human oversight.

In this comprehensive guide, you’ll learn:

- How agentic AI is transforming every phase of the software development lifecycle

- The architecture of coding agents—from planning to execution to review

- Real-world implementation patterns with frameworks like LangGraph, AutoGen, and CrewAI

- Best practices for integrating AI agents into existing development workflows

- Security, quality, and governance considerations for autonomous coding

Part 1: The Evolution of AI in Software Development

From Autocomplete to Autonomous Development

Figure 1: The evolution of AI in software development – from autocomplete to autonomous agents

| Era | Capability | Human Role | Tools |
|---|---|---|---|
| Autocomplete | Single-line suggestions | Developer writes most code | TabNine, Kite |
| Code Generation | Function-level generation | Developer prompts, reviews | GitHub Copilot, ChatGPT |
| Agent Assistance | Multi-step workflows, debugging | Developer orchestrates | Cursor, Windsurf, Claude Code |
| Autonomous Agents | End-to-end feature development | Developer specifies intent | Multi-agent systems, AutoGen |

The Productivity Impact

| Metric | Without AI | With AI Assistance | With Agentic AI |
|---|---|---|---|
| Feature Development Time | 5-10 days | 2-4 days | 1-2 days |
| Bug Detection Rate | 60-70% | 80-85% | 90-95% |
| Code Review Time | 2-4 hours | 1-2 hours | 15-30 minutes |
| Developer Satisfaction | Baseline | +30% | +50% |

Part 2: The Architecture of Coding Agents

The Multi-Agent Development Team

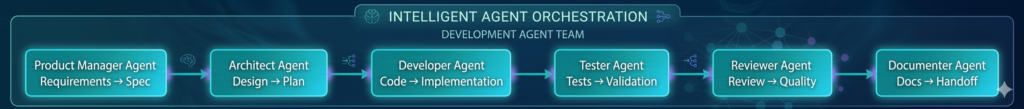

Modern agentic development systems use a coordinated team of specialized agents:

*Figure 2: Multi-agent architecture for autonomous software development*

Agent Roles and Responsibilities

| Agent | Role | Key Functions | Outputs |
|---|---|---|---|
| Product Manager | Requirements analysis | Parse specifications, identify edge cases | User stories, acceptance criteria |
| Architect | System design | Plan structure, select patterns, define interfaces | Architecture diagram, component spec |
| Developer | Code implementation | Write code, refactor, optimize | Source code, unit tests |
| Tester | Quality assurance | Write tests, execute, report bugs | Test suite, bug reports |
| Reviewer | Code review | Analyze code quality, suggest improvements | Review comments, quality score |
| Documenter | Documentation | Generate docs, update README | API docs, user guides |
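To make the hand-offs in the table concrete, here is a minimal sketch that models each role as one step in a sequential pipeline. The `AgentRole` dataclass, the `run_pipeline` function, and the lambda handlers are hypothetical stand-ins chosen for illustration; in a real system each handler would call a model rather than format a string.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class AgentRole:
    name: str        # role name from the table, e.g. "Architect"
    output_key: str  # artifact this role contributes
    handler: Callable[[Dict[str, str]], str]  # reads artifacts so far, returns a new one


def run_pipeline(roles: List[AgentRole], requirement: str) -> Dict[str, str]:
    """Pass a growing dict of artifacts through each role in order."""
    artifacts = {"requirement": requirement}
    for role in roles:
        artifacts[role.output_key] = role.handler(artifacts)
    return artifacts


# Hypothetical handlers: each one only formats a string
roles = [
    AgentRole("Product Manager", "user_stories",
              lambda a: f"stories for: {a['requirement']}"),
    AgentRole("Architect", "component_spec",
              lambda a: f"design based on {a['user_stories']}"),
    AgentRole("Developer", "source_code",
              lambda a: f"code implementing {a['component_spec']}"),
]

artifacts = run_pipeline(roles, "login endpoint")
print(list(artifacts))
```

Keeping every artifact in one dictionary means later roles (Tester, Reviewer, Documenter) can read anything produced upstream without extra wiring.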

Part 3: Implementation Patterns

Pattern 1: The Planner-Developer Loop

```python
from typing import List, TypedDict

from langgraph.graph import StateGraph, END

# Assumption: `llm` is a model client exposing generate(prompt) -> str.
# It is not defined in this guide; plug in your own wrapper.


class DevelopmentState(TypedDict):
    requirement: str
    plan: List[str]
    current_step: int
    code: str
    test_results: str
    status: str


# Planner agent creates the implementation plan
def planner(state: DevelopmentState):
    prompt = f"""
    Create a detailed implementation plan for: {state['requirement']}
    Break it down into sequential steps.
    """
    # Drop blank lines so every plan entry is an actual step
    plan = [s.strip() for s in llm.generate(prompt).split("\n") if s.strip()]
    return {"plan": plan, "current_step": 0}


# Developer agent implements the current step
def developer(state: DevelopmentState):
    step = state['plan'][state['current_step']]
    prompt = f"""
    Implement this step: {step}
    Based on requirement: {state['requirement']}
    Existing code: {state.get('code', '')}
    """
    code = llm.generate(prompt)
    return {"code": state.get('code', '') + "\n" + code}


# Tester agent validates the implementation
def tester(state: DevelopmentState):
    prompt = f"""
    Write tests for this code and report the outcome.
    Start your reply with PASS or FAIL.
    {state['code']}
    """
    test_results = llm.generate(prompt)
    passing = test_results.strip().upper().startswith("PASS")
    return {
        "test_results": test_results,
        "status": "passing" if passing else "failing",
        # Move to the next plan step only once the current one passes
        "current_step": state['current_step'] + (1 if passing else 0),
    }


# Orchestration
workflow = StateGraph(DevelopmentState)
workflow.add_node("planner", planner)
workflow.add_node("developer", developer)
workflow.add_node("tester", tester)

workflow.set_entry_point("planner")
workflow.add_edge("planner", "developer")
workflow.add_edge("developer", "tester")


def should_continue(state: DevelopmentState):
    if state['status'] == "passing" and state['current_step'] >= len(state['plan']):
        return END  # every step is implemented and passing
    return "developer"  # retry the failing step or start the next one


workflow.add_conditional_edges("tester", should_continue)
app = workflow.compile()
```
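The patterns in this guide call an `llm` object that is never defined. One way to dry-run the control flow without a model provider is a small stub. The `StubLLM` class and its canned replies below are assumptions made for illustration; only the method names `generate` and `generate_json` come from the snippets in this guide.

```python
import json
from typing import Any, Dict


class StubLLM:
    """Offline stand-in for a model client; returns canned replies."""

    def __init__(self, replies: Dict[str, str], default: str = ""):
        # Maps a keyword expected in the prompt to the reply to return
        self.replies = replies
        self.default = default

    def generate(self, prompt: str) -> str:
        # First keyword found in the prompt wins; otherwise use the default
        for keyword, reply in self.replies.items():
            if keyword in prompt:
                return reply
        return self.default

    def generate_json(self, prompt: str) -> Any:
        # Parse the canned reply as JSON, mirroring a structured-output call
        return json.loads(self.generate(prompt))


llm = StubLLM(
    replies={
        "implementation plan": "1. Define schema\n2. Write handler",
        "Review this code": '{"score": 0.95, "critiques": []}',
    },
    default="PASS",
)

plan = llm.generate("Create a detailed implementation plan for: login").split("\n")
review = llm.generate_json("Review this code for: ...")
```

Swapping the stub for a real client later only requires that the replacement exposes the same two methods.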

Pattern 2: Parallel Code Generation with Cursor-Style Agents

Modern tools like Cursor 2.0 use parallel agent execution:

```python
from concurrent.futures import ThreadPoolExecutor

# Assumptions: `llm` exposes generate_json(prompt); _create_agent,
# _merge_results and _run_integration_tests are project-specific helpers
# that are not shown here.


class ParallelCodingAgents:
    """Run multiple coding agents in parallel for complex features."""

    def __init__(self, num_agents=8):
        self.num_agents = num_agents
        self.agents = [self._create_agent() for _ in range(num_agents)]

    def implement_feature(self, specification: str) -> dict:
        """Distribute implementation across parallel agents."""
        # Break spec into independent modules
        modules = self._decompose_spec(specification)

        # Run agents in parallel; modules are assigned round-robin
        with ThreadPoolExecutor(max_workers=self.num_agents) as executor:
            futures = [
                executor.submit(self.agents[i % self.num_agents].implement, module)
                for i, module in enumerate(modules)
            ]
            # Collect results in submission order
            results = [f.result() for f in futures]

        # Merge and integrate
        integrated_code = self._merge_results(results)

        # Run integration tests
        test_results = self._run_integration_tests(integrated_code)

        return {
            "code": integrated_code,
            "modules": results,
            "tests": test_results,
        }

    def _decompose_spec(self, specification: str) -> list:
        """Break specification into parallelizable modules."""
        # Use architect agent to identify independent components
        prompt = f"""
        Decompose this specification into independent modules that can be developed in parallel:
        {specification}
        Return as JSON list with module names and responsibilities.
        """
        return llm.generate_json(prompt)
```
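The fan-out step can be tried in isolation. The sketch below assumes a hypothetical `fake_implement` function that sleeps instead of calling a model, and shows that reading the futures in submission order keeps results aligned with their modules even when agents finish out of order.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def fake_implement(module: dict) -> str:
    """Hypothetical agent call: sleeps to simulate uneven latency."""
    time.sleep(module["delay"])
    return f"# code for {module['name']}"


modules = [
    {"name": "auth", "delay": 0.03},
    {"name": "billing", "delay": 0.01},
    {"name": "search", "delay": 0.02},
]

with ThreadPoolExecutor(max_workers=3) as executor:
    futures = [executor.submit(fake_implement, m) for m in modules]
    # Iterating the futures list (not as_completed) preserves module order
    results = [f.result() for f in futures]

print(results)
```

Threads suit this workload because each agent call spends most of its time waiting on network I/O rather than on CPU.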

Pattern 3: Review-Critique-Revise Loop

The “Editor + Critic” pattern improves code quality through iteration:

```python
# Assumption: `llm` exposes generate(prompt) -> str and
# generate_json(prompt) -> dict; it is not defined in this guide.


class ReviewCritiqueRevise:
    """Iterative code improvement through review and revision."""

    def __init__(self, max_iterations=5):
        self.max_iterations = max_iterations

    def develop(self, requirement: str) -> dict:
        """Develop code with iterative refinement."""
        code = None
        critiques = []
        quality_score = 0.0  # defined up front so the return works with zero iterations
        revision_history = []

        for i in range(self.max_iterations):
            # Generate or revise code
            if i == 0:
                code = self._generate_initial_code(requirement)
            else:
                code = self._revise_code(code, critiques)

            # Review code
            review = self._review_code(code)
            critiques = review.get("critiques", [])
            quality_score = review.get("score", 0)

            revision_history.append({
                "iteration": i,
                "code": code,
                "review": review,
            })

            # Check if done
            if quality_score >= 0.9 or not critiques:
                break

        return {
            "final_code": code,
            "revision_history": revision_history,
            "quality_score": quality_score,
        }

    def _generate_initial_code(self, requirement: str) -> str:
        """Generate initial implementation."""
        prompt = f"Implement: {requirement}"
        return llm.generate(prompt)

    def _review_code(self, code: str) -> dict:
        """Review code for quality, bugs, and style."""
        prompt = f"""
        Review this code for:
        1. Correctness - does it meet requirements?
        2. Style - follows best practices?
        3. Edge cases - handles all scenarios?
        4. Performance - efficient?

        Code:
        {code}

        Return JSON with score (0-1) and list of critiques.
        """
        return llm.generate_json(prompt)

    def _revise_code(self, code: str, critiques: list) -> str:
        """Revise code based on critiques."""
        prompt = f"""
        Revise this code based on critiques:

        Original code:
        {code}

        Critiques:
        {critiques}

        Return improved code.
        """
        return llm.generate(prompt)
```
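The stopping rule in this loop depends on the reviewer returning a well-formed score and critique list, which a model will not always do. A defensive sketch follows; `normalize_review` is a hypothetical helper, not part of any library, and the fallback values are assumptions.

```python
from typing import Any, Dict


def normalize_review(raw: Any) -> Dict[str, Any]:
    """Coerce a model's review payload into {'score': float, 'critiques': list}."""
    if not isinstance(raw, dict):
        return {"score": 0.0, "critiques": ["Review was not a JSON object"]}
    try:
        score = float(raw.get("score", 0))
    except (TypeError, ValueError):
        score = 0.0  # missing or non-numeric score counts as the worst case
    score = min(1.0, max(0.0, score))  # clamp into the 0-1 range
    critiques = raw.get("critiques") or []
    if isinstance(critiques, str):
        critiques = [critiques]  # a single critique returned as plain text
    return {"score": score, "critiques": list(critiques)}


print(normalize_review({"score": "1.4", "critiques": "function too long"}))
```

Treating a malformed review as a score of zero keeps the loop iterating instead of accepting code on the strength of a broken response.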

Part 4: Real-World Implementation Examples

Example 1: Autonomous Feature Development with AutoGen

```python
import os

from autogen import AssistantAgent, UserProxyAgent, GroupChat, GroupChatManager

# Written against the AutoGen 0.2-style API; newer releases may differ.
# Assumption: the API key is supplied through the OPENAI_API_KEY variable.

# Configure LLM
llm_config = {
    "config_list": [
        {"model": "gpt-4o", "api_key": os.environ["OPENAI_API_KEY"]}
    ],
    "temperature": 0.2,
}

# Create specialized agents
product_manager = AssistantAgent(
    name="ProductManager",
    system_message="You analyze requirements and create detailed specifications.",
    llm_config=llm_config,
)

architect = AssistantAgent(
    name="Architect",
    system_message="You design system architecture and create implementation plans.",
    llm_config=llm_config,
)

developer = AssistantAgent(
    name="Developer",
    system_message="You write high-quality, tested Python code.",
    llm_config=llm_config,
    # use_docker=False runs generated code directly on the host machine
    code_execution_config={"work_dir": "coding", "use_docker": False},
)

reviewer = AssistantAgent(
    name="Reviewer",
    system_message="You review code for quality, security, and best practices.",
    llm_config=llm_config,
)

# User proxy for execution
user_proxy = UserProxyAgent(
    name="User",
    code_execution_config={"work_dir": "coding", "use_docker": False},
    human_input_mode="TERMINATE",
)

# Create group chat
groupchat = GroupChat(
    agents=[product_manager, architect, developer, reviewer, user_proxy],
    messages=[],
    max_round=15,
)

manager = GroupChatManager(groupchat=groupchat, llm_config=llm_config)

# Start development
task = """
Develop a REST API endpoint for user authentication with:
- Email/password login
- JWT token generation
- Password reset functionality
- Rate limiting (5 attempts per 15 minutes)
- Input validation and sanitization
- Unit tests with pytest
"""

response = user_proxy.initiate_chat(
    manager,
    message=f"Develop this feature:\n{task}",
)
```

Example 2: Bug Fixing Agent with LangGraph

```python
from typing import TypedDict

from langgraph.graph import StateGraph, END

# Assumption: `llm` exposes generate(prompt) -> str; it is not defined here.


class BugFixState(TypedDict):
    bug_report: str
    codebase: str
    diagnosis: str
    fix_proposal: str
    fix_implementation: str
    verification: str
    status: str


def diagnoser(state: BugFixState):
    """Analyze bug report and codebase to identify root cause."""
    prompt = f"""
    Analyze this bug report against the codebase:
    Bug Report: {state['bug_report']}
    Codebase: {state['codebase']}
    Identify root cause and affected components.
    """
    diagnosis = llm.generate(prompt)
    return {"diagnosis": diagnosis}


def fix_proposer(state: BugFixState):
    """Propose fix based on diagnosis."""
    prompt = f"""
    Based on this diagnosis:
    {state['diagnosis']}
    Propose a fix that addresses the root cause without introducing new issues.
    """
    proposal = llm.generate(prompt)
    return {"fix_proposal": proposal}


def fix_implementer(state: BugFixState):
    """Implement the proposed fix."""
    prompt = f"""
    Implement this fix:
    {state['fix_proposal']}
    Original code: {state['codebase']}
    Return the complete updated code.
    """
    implementation = llm.generate(prompt)
    return {"fix_implementation": implementation}


def verifier(state: BugFixState):
    """Verify fix addresses the bug."""
    prompt = f"""
    Verify that this fix addresses the original bug:
    Bug: {state['bug_report']}
    Fix: {state['fix_implementation']}
    Check for:
    1. Bug resolved?
    2. No new issues introduced?
    3. Edge cases handled?
    Start your answer with RESOLVED or UNRESOLVED.
    """
    verification = llm.generate(prompt)
    return {"verification": verification}


# Build workflow
workflow = StateGraph(BugFixState)
workflow.add_node("diagnoser", diagnoser)
workflow.add_node("proposer", fix_proposer)
workflow.add_node("implementer", fix_implementer)
workflow.add_node("verifier", verifier)

workflow.set_entry_point("diagnoser")
workflow.add_edge("diagnoser", "proposer")
workflow.add_edge("proposer", "implementer")
workflow.add_edge("implementer", "verifier")


def after_verification(state: BugFixState):
    # A substring check for "resolved" would also match "unresolved" or
    # "not resolved", so test the leading verdict word instead
    if state['verification'].strip().upper().startswith("RESOLVED"):
        return END
    return "diagnoser"  # Re-diagnose if not fixed


workflow.add_conditional_edges("verifier", after_verification)
app = workflow.compile()
```
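The edge from the verifier back to the diagnoser has no exit of its own if a fix never verifies. One possible guard is a bounded router, sketched here under several assumptions: an extra `attempts` counter in the state (not part of the original `BugFixState`), a hypothetical `MAX_ATTEMPTS` limit, and a verifier prompted to begin its reply with RESOLVED or UNRESOLVED. A local `END` constant stands in for LangGraph's so the snippet runs without the library.

```python
from typing import TypedDict

END = "__end__"   # stand-in for langgraph.graph.END so this runs without LangGraph
MAX_ATTEMPTS = 3  # hypothetical limit; tune for your workflow


class BoundedBugFixState(TypedDict):
    verification: str
    attempts: int  # assumed extra field, incremented by the verifier node


def after_verification(state: BoundedBugFixState) -> str:
    """Route to END on success or exhaustion, otherwise back to the diagnoser."""
    verdict = state["verification"].strip().upper()
    if verdict.startswith("RESOLVED"):
        return END
    if state["attempts"] >= MAX_ATTEMPTS:
        return END  # stop and escalate to a human instead of looping forever
    return "diagnoser"


print(after_verification({"verification": "UNRESOLVED: still fails", "attempts": 1}))
```

In a full graph, the verifier node would return `attempts` incremented by one alongside its `verification` text.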

Example 3: Test Generation Agent

```python
from typing import Optional

# Assumptions: `llm` exposes generate and generate_json;
# _generate_integration_tests and _execute_tests are not shown here.


class TestGenerator:
    """Autonomous test generation and execution."""

    def __init__(self, framework="pytest"):
        self.framework = framework

    def generate_tests(self, code: str, function_name: Optional[str] = None) -> dict:
        """Generate comprehensive test suite for code."""
        # Step 1: Analyze code to understand requirements
        analysis = self._analyze_code(code)

        # Step 2: Generate unit tests
        unit_tests = self._generate_unit_tests(code, analysis)

        # Step 3: Generate edge case tests
        edge_tests = self._generate_edge_cases(code, analysis)

        # Step 4: Generate integration tests
        integration_tests = self._generate_integration_tests(code)

        # Step 5: Execute tests (joined with blank lines so the last line
        # of one suite does not run into the first line of the next)
        all_tests = "\n\n".join([unit_tests, edge_tests, integration_tests])
        results = self._execute_tests(all_tests)

        return {
            "tests": {
                "unit": unit_tests,
                "edge": edge_tests,
                "integration": integration_tests,
            },
            "results": results,
            "coverage": results.get("coverage", 0),
            "passing": results.get("passing", False),
        }

    def _analyze_code(self, code: str) -> dict:
        """Analyze code structure and dependencies."""
        prompt = f"""
        Analyze this code and return:
        1. Input parameters and types
        2. Expected outputs
        3. Dependencies
        4. Edge cases to test
        5. Potential failure modes

        Code:
        {code}
        """
        return llm.generate_json(prompt)

    def _generate_unit_tests(self, code: str, analysis: dict) -> str:
        """Generate unit tests for core functionality."""
        prompt = f"""
        Generate {self.framework} unit tests for this code:

        Code:
        {code}

        Analysis:
        {analysis}

        Include:
        - Happy path tests
        - Parameter validation tests
        - Mock external dependencies
        """
        return llm.generate(prompt)

    def _generate_edge_cases(self, code: str, analysis: dict) -> str:
        """Generate tests for edge cases."""
        prompt = f"""
        Generate tests for these edge cases:
        {analysis.get('edge_cases', [])}

        Code:
        {code}
        """
        return llm.generate(prompt)
```
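The `_analyze_code` step asks the model for input parameters and types. For Python sources, part of that information can be extracted deterministically with the standard-library `ast` module and passed to the model as context. The `list_functions` helper below is a hypothetical illustration of that idea and requires Python 3.9 or later for `ast.unparse`.

```python
import ast
from typing import Dict, List


def list_functions(source: str) -> List[Dict[str, object]]:
    """Return name, parameter names and return annotation of each function."""
    tree = ast.parse(source)
    functions = []
    for node in ast.walk(tree):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            functions.append({
                "name": node.name,
                # Positional parameters only; *args and keyword-only are omitted
                "params": [arg.arg for arg in node.args.args],
                "returns": ast.unparse(node.returns) if node.returns else None,
            })
    return functions


sample = "def add(a: int, b: int) -> int:\n    return a + b\n"
print(list_functions(sample))
```

Grounding the prompt in parsed signatures reduces the chance that generated tests call functions with the wrong arguments.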

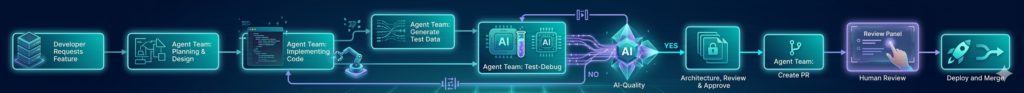

Part 5: Development Workflows with Agentic AI

The Modern Developer’s Workflow

Integration with Development Tools

| Tool | Integration | Purpose |
|---|---|---|
| GitHub | PR creation, review comments, issue tracking | Version control |
| Jira | Ticket creation, status updates, assignment | Project management |
| CI/CD | Pipeline triggering, test execution, deployment | Automation |
| Slack | Notifications, approvals, status updates | Communication |
| VS Code | In-editor agent assistance, code completion | Development |

Example: GitHub PR Agent

```python
import uuid

from github import Github  # PyGithub

# Assumptions: `llm` exposes generate(prompt) -> str; _generate_code,
# _generate_tests, _create_branch, _commit_files, _extract_title and
# _suggest_reviewers are project-specific helpers that are not shown here.


class GitHubPRAgent:
    """Agent that creates and manages pull requests."""

    def __init__(self, repo_owner: str, repo_name: str, github_token: str):
        self.repo = f"{repo_owner}/{repo_name}"
        self.github = Github(github_token)

    def create_pr(self, feature_description: str) -> dict:
        """Create a complete PR with code, tests, and description."""
        # Step 1: Generate code and tests
        code = self._generate_code(feature_description)
        tests = self._generate_tests(code)

        # Step 2: Create branch
        branch_name = f"feature/agent-{uuid.uuid4().hex[:8]}"
        self._create_branch(branch_name)

        # Step 3: Commit changes
        self._commit_files(branch_name, {
            "src/feature.py": code,
            "tests/test_feature.py": tests,
        })

        # Step 4: Create PR
        pr_title = f"Agent: {self._extract_title(feature_description)}"
        pr_body = self._generate_pr_description(feature_description, code, tests)

        pr = self.github.get_repo(self.repo).create_pull(
            title=pr_title,
            body=pr_body,
            head=branch_name,
            base="main",
        )

        # Step 5: Request reviewers
        pr.create_review_request(reviewers=self._suggest_reviewers(code))

        # Step 6: Add labels (PyGithub takes labels as separate arguments)
        pr.add_to_labels("ai-generated", "needs-review")

        return {
            "pr_url": pr.html_url,
            "pr_number": pr.number,
            "branch": branch_name,
        }

    def _generate_pr_description(self, feature_description: str, code: str, tests: str) -> str:
        """Generate detailed PR description."""
        prompt = f"""
        Create a PR description for:
        Feature: {feature_description}

        Include:
        1. Summary of changes
        2. Testing performed
        3. Screenshots (if UI)
        4. Checklist
        """
        return llm.generate(prompt)
```
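The agent above relies on a title helper that is not shown. As one possible shape, the sketch below derives a title from the first line of the feature description and builds a readable branch name from it. Both `extract_title` and `make_branch_name` are hypothetical functions with assumed length limits, offered as a starting point rather than the original implementation.

```python
import re
import uuid


def extract_title(feature_description: str, max_length: int = 60) -> str:
    """Use the first non-empty line of the description as the PR title."""
    for line in feature_description.splitlines():
        line = line.strip()
        if line:
            # Truncate long lines and mark the cut with an ellipsis
            return line if len(line) <= max_length else line[: max_length - 3] + "..."
    return "Untitled change"


def make_branch_name(title: str) -> str:
    """Build a branch name from a slug of the title plus a short random suffix."""
    slug = re.sub(r"[^a-z0-9]+", "-", title.lower()).strip("-")[:30].rstrip("-")
    return f"feature/agent-{slug}-{uuid.uuid4().hex[:8]}"


title = extract_title("\n  Add login endpoint \nwith JWT support")
print(title, make_branch_name(title))
```

The random suffix keeps branch names unique when several agents work on similarly titled features at the same time.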

Part 6: Quality and Security Considerations

Code Quality Metrics for AI-Generated Code

| Metric | Target | How to Enforce |
|---|---|---|
| Test Coverage | >80% | Automated coverage reporting |
| Linting Score | 10/10 | ESLint, Pylint in CI |
| Cyclomatic Complexity | <10 per function | Static analysis |
| Security Vulnerabilities | 0 critical | Snyk, Dependabot |
| Code Duplication | <5% | Duplication detection |
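These targets can be enforced as a single gate in CI. The sketch below takes its thresholds from the table; the function name `check_quality_gate` and the metric key names are assumptions, and collecting the metric values from your coverage, lint, and scanning tools is left out.

```python
from typing import Dict, List


def check_quality_gate(metrics: Dict[str, float]) -> List[str]:
    """Return human-readable failures; an empty list means the gate passes."""
    failures = []
    if metrics["test_coverage_pct"] <= 80:
        failures.append("Test coverage must be above 80%")
    if metrics["lint_score"] < 10:
        failures.append("Linting score must be 10/10")
    if metrics["max_cyclomatic_complexity"] >= 10:
        failures.append("Cyclomatic complexity must be below 10 per function")
    if metrics["critical_vulnerabilities"] > 0:
        failures.append("No critical vulnerabilities allowed")
    if metrics["duplication_pct"] >= 5:
        failures.append("Code duplication must be below 5%")
    return failures


report = check_quality_gate({
    "test_coverage_pct": 76.0,
    "lint_score": 10,
    "max_cyclomatic_complexity": 7,
    "critical_vulnerabilities": 0,
    "duplication_pct": 6.2,
})
print(report)
```

Returning every failure at once, rather than stopping at the first, gives an agent the full list to address in its next revision.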

Security Best Practices for AI-Generated Code

```python
import re


class SecurityValidator:
    """Validate AI-generated code for security issues."""

    def __init__(self):
        # Heuristic patterns only; they complement dedicated scanners
        # and will produce both false positives and false negatives
        self.vulnerability_patterns = {
            "sql_injection": r"(?i)execute\(.*\$\{.*\}",
            "hardcoded_secrets": r"(?i)(password|secret|token|key)\s*=\s*['\"][^'\"]+['\"]",
            "command_injection": r"(?i)os\.system\(|subprocess\.call\(.*input",
            "path_traversal": r"(?i)\.\.\/\.\.\/",
        }

    def validate(self, code: str) -> dict:
        """Validate code for security vulnerabilities."""
        issues = []

        for pattern_name, pattern in self.vulnerability_patterns.items():
            if re.search(pattern, code):
                issues.append({
                    "type": pattern_name,
                    "severity": "high",
                    "description": f"Potential {pattern_name} vulnerability detected",
                })

        # Check for unsafe deserialization of untrusted data
        if "import pickle" in code and "untrusted" in code.lower():
            issues.append({
                "type": "insecure_deserialization",
                "severity": "critical",
                "description": "Pickle with untrusted data is dangerous",
            })

        # risk_score is the share of critical issues among all issues found
        critical_count = len([i for i in issues if i["severity"] == "critical"])
        return {
            "valid": len(issues) == 0,
            "issues": issues,
            "risk_score": critical_count / max(1, len(issues)),
        }
```
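Regex checks like the ones above match raw text, so they can fire on comments and string literals. For Python code, a complementary approach is to walk the syntax tree and flag actual call sites. The sketch below is an assumption-laden illustration: `find_dangerous_calls` is a hypothetical helper, the `DANGEROUS_CALLS` set is deliberately short and not exhaustive, and `ast.unparse` requires Python 3.9 or later.

```python
import ast
from typing import List

# Illustrative list only; extend it for your own codebase
DANGEROUS_CALLS = {"eval", "exec", "os.system", "pickle.loads"}


def find_dangerous_calls(source: str) -> List[str]:
    """Return sorted dotted names of calls in `source` listed in DANGEROUS_CALLS."""
    found = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Call):
            name = ast.unparse(node.func)  # e.g. "os.system"
            if name in DANGEROUS_CALLS:
                found.append(name)
    return sorted(found)


snippet = (
    "import os\n"
    "os.system('ls')\n"
    "x = eval('1 + 1')\n"
    "# os.system in a comment is ignored\n"
)
print(find_dangerous_calls(snippet))
```

This only catches direct calls by their literal name; aliased imports such as `from os import system` would need extra handling.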

Part 7: MHTECHIN’s Expertise in Agentic Development

At MHTECHIN, we specialize in building and deploying agentic AI systems for software development. Our expertise includes:

- Custom Agent Teams: Building specialized agent architectures for your development workflow

- Integration Services: Connecting AI agents to GitHub, Jira, CI/CD, and other tools

- Quality Assurance: Ensuring AI-generated code meets enterprise standards

- Security Validation: Preventing vulnerabilities in AI-generated code

- Developer Training: Helping teams work effectively with AI agents

MHTECHIN helps development teams leverage agentic AI to ship better code, faster, with higher quality and security.

Conclusion

Agentic AI is fundamentally transforming software development. What began as simple code autocompletion has evolved into autonomous teams of specialized agents that can plan, implement, test, review, and deploy features with minimal human oversight.

Key Takeaways:

- Multi-agent systems with specialized roles (Architect, Developer, Tester, Reviewer) outperform single-agent approaches

- Parallel execution (like Cursor’s 8 agents) dramatically reduces development time

- Iterative refinement through review-critique-revise loops improves code quality

- Integration with development tools (GitHub, Jira, CI/CD) creates seamless workflows

- Security and quality validation remain essential for AI-generated code

The developer’s role is evolving from code writer to code orchestrator. Those who embrace agentic AI will ship faster, with higher quality, while focusing on the strategic work that machines can’t do.

Frequently Asked Questions (FAQ)

Q1: What is agentic AI for software development?

Agentic AI for software development uses autonomous AI agents to perform coding tasks, from planning and design to implementation, testing, and deployment, with minimal human intervention.

Q2: How do multi-agent coding systems work?

They use specialized agents (Product Manager, Architect, Developer, Tester, Reviewer) that coordinate through structured workflows, each focusing on its own area of expertise.

Q3: What tools support agentic development?

Leading tools include Cursor 2.0 (8 parallel agents), Claude Code (10+ instances), AutoGen, LangGraph, and CrewAI for custom agent teams.

Q4: Can AI agents write production-ready code?

Yes, when combined with proper testing, review, and validation. AI-generated code can achieve high quality, but human review remains important for complex business logic.

Q5: How do I integrate AI agents with my existing workflow?

Use agents that integrate with GitHub (PR creation), Jira (ticket updates), CI/CD (pipeline triggers), and Slack (notifications).

Q6: What are the security risks of AI-generated code?

Risks include hardcoded secrets, injection vulnerabilities, and insecure patterns. Implement security validation as a mandatory step before deployment.

Q7: How do I measure the impact of agentic AI?

Track metrics like feature development time (30-50% reduction), bug detection rate (10-20% improvement), and developer satisfaction.

Q8: Will AI agents replace developers?

No. They augment developers. The role shifts from writing code to orchestrating agents, reviewing outputs, and focusing on higher-level architecture and strategy.
