Introduction

Imagine building a team of AI specialists. One handles research, another writes code, a third reviews outputs for quality, and a coordinator ensures everything runs smoothly. Now imagine you need to orchestrate this entire team: managing their conversations, tracking their state, handling failures, and ensuring they work together efficiently. This is exactly what orchestration frameworks for agentic AI do.

In the early days of AI, building agents meant writing long, complex prompts and hoping for the best. Today, sophisticated frameworks provide the infrastructure needed to build reliable, scalable, production-ready AI agents. As LangChain’s team noted in early 2026, “We’ve seen three generations of agents in three years: what started as RAG became agentic workflows, which evolved into more autonomous tool-calling-in-a-loop agents”.

The ecosystem has matured significantly. According to Databricks’ State of AI Agents report, multi-agent workflows grew by 327% between June and October 2025, with technology companies building multi-agent systems at 4× the rate of other industries. With over 126,000 GitHub stars across major frameworks, the orchestration layer has become as critical as the underlying models.

In this comprehensive guide, you’ll learn:

- The architecture and capabilities of LangChain, AutoGen (now part of Microsoft Agent Framework), and CrewAI

- How these frameworks compare on performance, cost, and production readiness

- Real-world use cases and implementation patterns

- Best practices for choosing the right framework for your needs

- How MHTECHIN leverages these frameworks for enterprise AI solutions

Part 1: The Evolution of Agentic Frameworks

From Prompts to Production Systems

The journey of AI agent frameworks reflects the maturing of the field. As the LangChain team explains, “The biggest knock against frameworks is that the AI space evolves too quickly for standards to form. There’s truth to that. But we also believe that sitting out of the AI game waiting for things to settle is a losing strategy. Frameworks help you dive in, build faster, and increase your odds of success”.

The Three Generations of Agent Frameworks:

Why Orchestration Matters

When building production AI systems, orchestration frameworks address several critical needs:

Part 2: Framework Deep-Dive – LangChain & LangGraph

Overview and Architecture

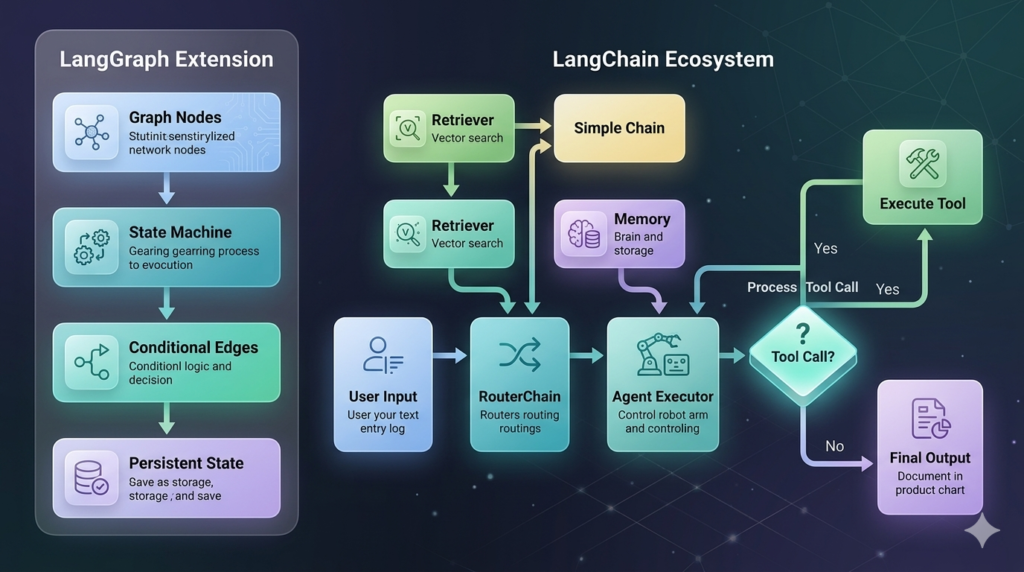

LangChain remains the most widely adopted agentic framework, with over 126,000 GitHub stars and 20,000 forks as of 2026. It provides a comprehensive ecosystem for building LLM applications through modular components: chains, agents, memory, retrievers, and tools.

LangGraph, built on LangChain’s runtime, introduced a lower-level, more flexible architecture for stateful, multi-step agent systems. As LangChain’s documentation explains, “LangGraph was lower level and more flexible. It included a runtime that supported durability and statefulness, which turned out to be important for human-agent and agent-agent collaboration”.
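To make "durability and statefulness" concrete, here is a minimal, framework-free sketch of a stateful graph that saves a snapshot after every node. It does not use the LangGraph API; `TinyGraph`, the node functions, and the checkpoint format are all hypothetical names chosen for illustration.

```python
import json
from typing import Callable, Dict, List, Optional

State = Dict[str, object]
Node = Callable[[State], State]


class TinyGraph:
    """Framework-free stand-in for a stateful agent graph (not the LangGraph API)."""

    def __init__(self) -> None:
        self.nodes: Dict[str, Node] = {}
        self.edges: Dict[str, Optional[str]] = {}

    def add_node(self, name: str, fn: Node, next_node: Optional[str] = None) -> None:
        self.nodes[name] = fn
        self.edges[name] = next_node

    def run(self, start: str, state: State, checkpoints: List[str]) -> State:
        current: Optional[str] = start
        while current is not None:
            # Each node returns a partial update that is merged into the shared state.
            state = {**state, **self.nodes[current](state)}
            # Save a serialized snapshot after every step so a run could be resumed.
            checkpoints.append(json.dumps({"after": current, "state": state}))
            current = self.edges[current]
        return state


def research(state: State) -> State:
    return {"notes": f"notes on {state['topic']}"}


def write(state: State) -> State:
    return {"draft": f"Report based on {state['notes']}"}


graph = TinyGraph()
graph.add_node("research", research, next_node="write")
graph.add_node("write", write)

saved: List[str] = []
final_state = graph.run("research", {"topic": "quantum computing"}, saved)
print(final_state["draft"])
```

Because each snapshot is plain JSON, a paused run (for example, one waiting on human approval) could in principle be reloaded from the last entry in `saved` and continued from the next node.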

Key Components

Performance Characteristics

According to benchmark tests across 2,000 runs, LangChain demonstrates distinct performance profiles:

In Task 2 (Comparative Revenue Analysis), LangChain was “the fastest and most cost-effective framework,” completing the task in 5-6 steps without detours: Load → Filter → Calculate → Filter → Calculate → Output.
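The six-step path above can be pictured as a plain sequential chain in which each step receives the state and returns an extended copy. The sketch below is framework-free and uses made-up revenue rows, since the benchmark's actual dataset and prompts are not given here; every function name is an assumption.

```python
from functools import reduce

# Hypothetical revenue rows; the benchmark's real data is not reproduced here.
ROWS = [
    {"region": "EU", "year": 2024, "revenue": 120.0},
    {"region": "EU", "year": 2025, "revenue": 150.0},
    {"region": "US", "year": 2024, "revenue": 200.0},
    {"region": "US", "year": 2025, "revenue": 210.0},
]


def load(state):
    return {**state, "rows": list(ROWS)}


def filter_year(year, key):
    def step(state):
        return {**state, key: [r for r in state["rows"] if r["year"] == year]}
    return step


def total(src, dst):
    def step(state):
        return {**state, dst: sum(r["revenue"] for r in state[src])}
    return step


def output(state):
    growth = (state["total_2025"] - state["total_2024"]) / state["total_2024"]
    return {**state, "answer": f"Revenue grew {growth:.1%} from 2024 to 2025"}


# Load -> Filter -> Calculate -> Filter -> Calculate -> Output
PIPELINE = [
    load,
    filter_year(2024, "rows_2024"),
    total("rows_2024", "total_2024"),
    filter_year(2025, "rows_2025"),
    total("rows_2025", "total_2025"),
    output,
]


def run_chain(steps, state=None):
    # State flows straight through the steps; there is no branching or retry logic.
    return reduce(lambda acc, step: step(acc), steps, state or {})


result = run_chain(PIPELINE)
print(result["answer"])  # Revenue grew 12.5% from 2024 to 2025
```

A linear chain like this carries almost no bookkeeping, which is one plausible reading of why a simple state model keeps overhead low on this kind of task.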

The DeepAgents Evolution

In late 2025, LangChain introduced deepagents, a “batteries-included agent harness that’s more performant and more flexible. It supports planning for long-horizon tasks, tool-calling-in-a-loop, context offloading to a filesystem, and subagent orchestration”.

Key innovations in deepagents:

- Filesystem-based memory using Markdown and JSON files

- Subagent orchestration for complex task decomposition

- Planning capabilities for long-horizon tasks

- Model-agnostic design (similar to Claude Agent SDK but works with any LLM)
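As a rough illustration of filesystem-based memory and context offloading, the sketch below writes a Markdown plan and a JSON to-do record to disk and keeps only short file references in the prompt context. It is not the deepagents API; `FileMemory` and its methods are hypothetical helpers written with the standard library only.

```python
import json
import tempfile
from pathlib import Path


class FileMemory:
    """Hypothetical helper: keeps bulky context on disk and returns short references."""

    def __init__(self, root: Path) -> None:
        self.root = root
        self.root.mkdir(parents=True, exist_ok=True)

    def write_note(self, name: str, markdown: str) -> str:
        path = self.root / f"{name}.md"
        path.write_text(markdown, encoding="utf-8")
        return path.name

    def write_record(self, name: str, data: dict) -> str:
        path = self.root / f"{name}.json"
        path.write_text(json.dumps(data, indent=2), encoding="utf-8")
        return path.name

    def read(self, filename: str) -> str:
        return (self.root / filename).read_text(encoding="utf-8")


workdir = Path(tempfile.mkdtemp())
memory = FileMemory(workdir / "agent_memory")

plan_ref = memory.write_note("plan", "# Plan\n\n1. Gather sources\n2. Draft summary\n")
todo_ref = memory.write_record("todo", {"done": ["Gather sources"], "next": "Draft summary"})

# Only the short references stay in the prompt; the content is reloaded on demand.
context_window = [f"See {plan_ref}", f"See {todo_ref}"]
next_step = json.loads(memory.read(todo_ref))["next"]
print(context_window, next_step)
```

The design choice being illustrated is that long-horizon tasks stay within the model's context budget because the agent reads files back only when a step needs them.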

Part 3: Framework Deep-Dive – AutoGen and Microsoft Agent Framework

The AutoGen Legacy

AutoGen was introduced by Microsoft Research in late 2023 and quickly became the default choice for multi-agent systems. Its revolutionary insight was simple yet powerful: treat agents as participants in a conversation, not just links in a chain.

The classic AutoGen pattern:

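python

import os

from autogen import AssistantAgent, UserProxyAgent

# Model settings for the assistant; the model name and env var are placeholders
llm_config = {"config_list": [{"model": "gpt-4o", "api_key": os.environ["OPENAI_API_KEY"]}]}

# The assistant proposes plans and code; the user proxy executes that code locally
assistant = AssistantAgent(name="assistant", llm_config=llm_config)
user_proxy = UserProxyAgent(name="user", code_execution_config={"work_dir": "coding"})

# Start the conversation; the two agents then alternate until termination
user_proxy.initiate_chat(assistant, message="Write a Python class for data analysis...")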

This minimal code creates a complete loop of planning, code execution, error retry, and termination, all without a central controller.
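The loop itself is easier to see without an LLM in the way. The following sketch replaces the assistant with a scripted stand-in whose first attempt is deliberately broken, so the execute, report error, retry, terminate cycle runs deterministically. None of these names come from AutoGen; `scripted_assistant`, `execute`, and `chat` are illustrative assumptions.

```python
import contextlib
import io
from typing import List


def scripted_assistant(history: List[str]) -> str:
    """Stand-in for an LLM: the first reply has a bug, the second one fixes it."""
    last = history[-1]
    if last.startswith("ERROR"):
        return "print(sum([1, 2, 3]))"
    if last.startswith("OUTPUT"):
        return "TERMINATE"
    return "print(sum([1, 2, '3']))"  # deliberately broken first attempt


def execute(code: str) -> str:
    """Run the code and report either its stdout or the error it raised."""
    buffer = io.StringIO()
    try:
        with contextlib.redirect_stdout(buffer):
            exec(code, {})  # a real system would sandbox this call
    except Exception as exc:
        return f"ERROR: {exc!r}"
    return f"OUTPUT: {buffer.getvalue().strip()}"


def chat(task: str, max_turns: int = 6) -> List[str]:
    history = [task]
    for _ in range(max_turns):
        reply = scripted_assistant(history)
        history.append(reply)
        if reply == "TERMINATE":
            break
        # The executor's result becomes the next message the assistant sees.
        history.append(execute(reply))
    return history


transcript = chat("Sum the numbers 1, 2 and 3.")
for line in transcript:
    print(line)
```

No component here decides the overall plan: the conversation history is the only shared state, and termination is just another message.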

Architecture of AutoGen v0.4

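AutoGen v0.4 (released in early 2025) introduced a significant redesign with three layers: Core (event-driven messaging between agents), AgentChat (the high-level conversational API), and Extensions (model clients and tool integrations).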

Group Chat – The Signature Pattern

AutoGen’s GroupChat became the most influential pattern in multi-agent AI:

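python

from autogen import AssistantAgent, GroupChat, GroupChatManager

# llm_config and user_proxy are the objects from the two-agent example above
researcher = AssistantAgent(name="Researcher", system_message="Find latest information...", llm_config=llm_config)
critic = AssistantAgent(name="Critic", system_message="Be skeptical...", llm_config=llm_config)
writer = AssistantAgent(name="Writer", system_message="Write in engaging style...", llm_config=llm_config)

# The manager selects the next speaker each round, for at most max_round turns
groupchat = GroupChat(agents=[researcher, critic, writer], messages=[], max_round=12)
manager = GroupChatManager(groupchat=groupchat, llm_config=llm_config)

user_proxy.initiate_chat(manager, message="Write about quantum computing...")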

In 2025–2026, real-world projects commonly use 5–12 agents: Planner → Researcher → Coder → Tester → Reviewer → Documenter → Human Approver.
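A group chat boils down to a shared message history, a rule for picking the next speaker, and a round limit. The sketch below shows that skeleton with round-robin selection and scripted participants. It is a simplification under stated assumptions, not AutoGen's implementation: `Participant`, `run_group_chat`, and the "APPROVED" stop word are hypothetical.

```python
from dataclasses import dataclass
from itertools import cycle
from typing import Callable, List, Tuple

Message = Tuple[str, str]  # (speaker, content)


@dataclass
class Participant:
    name: str
    respond: Callable[[List[Message]], str]


def run_group_chat(agents: List[Participant], task: str, max_round: int) -> List[Message]:
    history: List[Message] = [("user", task)]
    for agent in cycle(agents):  # round-robin speaker selection
        if len(history) - 1 >= max_round:
            break
        reply = agent.respond(history)
        history.append((agent.name, reply))
        if "APPROVED" in reply:
            break
    return history


def researcher(history: List[Message]) -> str:
    return "Finding: qubits exploit superposition."


def writer(history: List[Message]) -> str:
    version = sum(1 for speaker, _ in history if speaker == "Writer") + 1
    return f"Draft v{version}"


def critic(history: List[Message]) -> str:
    # Scripted reviewer: rejects the first draft and approves the second.
    return "APPROVED" if history[-1][1] == "Draft v2" else "Needs a clearer introduction."


team = [
    Participant("Researcher", researcher),
    Participant("Writer", writer),
    Participant("Critic", critic),
]
history = run_group_chat(team, "Write about quantum computing", max_round=12)
for speaker, content in history:
    print(f"{speaker}: {content}")
```

Swapping the round-robin `cycle` for a function that inspects the history and returns the next speaker would give the manager-driven selection that larger teams tend to need.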

AutoGen Performance Profile

The Transition to Microsoft Agent Framework (MAF)

In late 2025, Microsoft announced that AutoGen would merge with Semantic Kernel to form the Microsoft Agent Framework (MAF). As one analysis explains, “AutoGen brought conversational multi-agent orchestration, emergent team behaviors, and research-oriented flexibility. Semantic Kernel contributed enterprise fundamentals: type safety, middleware, observability, plugins/connectors, and production stability”.

MAF provides:

For new projects in 2026, Microsoft recommends starting with MAF. However, classic AutoGen code (the v0.2-style API shown above, which predates the v0.4 redesign) remains widely used and functional for prototyping.

Part 4: Framework Deep-Dive – CrewAI

Overview and Design Philosophy

CrewAI takes a fundamentally different approach from LangChain and AutoGen. Instead of focusing on low-level orchestration primitives, CrewAI emphasizes role-based collaboration, mirroring how human teams work together. With over 43,000 GitHub stars, it has become the go-to choice for teams prioritizing clarity and rapid prototyping.

The core mental model is simple: define agents with specific roles, goals, and tools, then coordinate them through structured task execution.

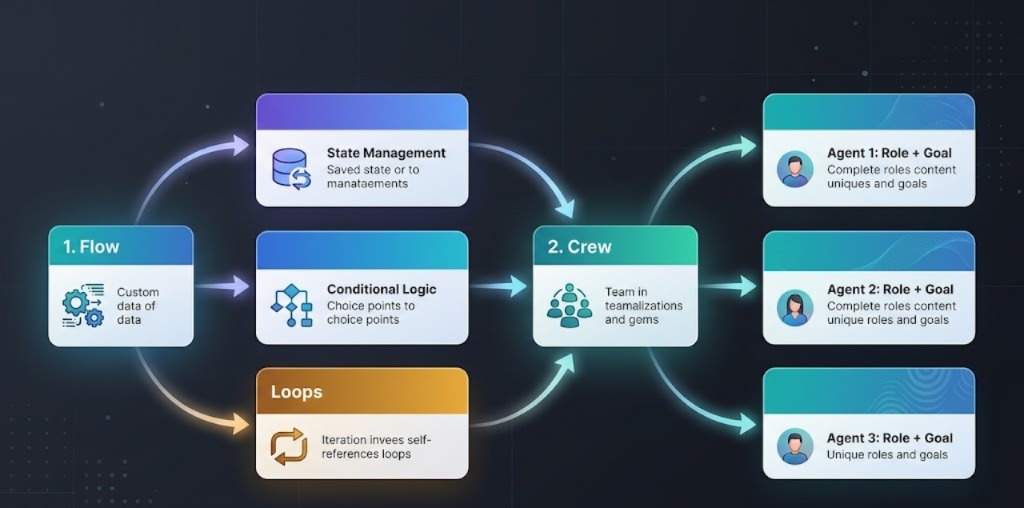

Dual-Layer Architecture: Flows and Crews

CrewAI’s architecture separates two concerns:

| Layer | Purpose | Characteristics |
|---|---|---|
| Flows | Deterministic process control | Logic, state management, loops, conditional paths |
| Crews | Agent collaboration | Role-based tasks, tool access, reasoning |

As CrewAI’s documentation explains, “By separating predictable process control (the Flow) from the reasoning tasks handled by agents (the Crew) and any ad hoc LLM calls, developers can build systems that are both intelligent and reliable”.
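The split between predictable control and agent reasoning can be sketched without CrewAI at all. In the example below, the loop, attempt counter, and quality gate live in ordinary code (the "flow"), while the part that would call LLMs is an injected function (the "crew"), stubbed here so the run is deterministic. `FlowState`, `run_flow`, and `stub_crew` are hypothetical names, not CrewAI classes.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class FlowState:
    topic: str
    draft: str = ""
    attempts: int = 0
    log: List[str] = field(default_factory=list)


def stub_crew(topic: str, attempt: int) -> str:
    """Stand-in for agent reasoning; a real crew would call LLMs here."""
    return "too short" if attempt == 1 else f"A detailed report about {topic}."


def run_flow(state: FlowState, crew: Callable[[str, int], str], max_attempts: int = 3) -> FlowState:
    # Deterministic control: the loop, counter, and quality gate are plain code.
    while state.attempts < max_attempts:
        state.attempts += 1
        state.draft = crew(state.topic, state.attempts)
        if len(state.draft) >= 20:
            state.log.append(f"attempt {state.attempts}: accepted")
            break
        state.log.append(f"attempt {state.attempts}: rejected")
    return state


final = run_flow(FlowState(topic="AI agents"), stub_crew)
print(final.draft)
print(final.log)
```

Because the crew is passed in as a function, the control logic can be unit-tested with a stub like this one and later paired with a real crew without changing the flow.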

State Management and Memory

CrewAI provides sophisticated state management for long-running agents:

Performance Characteristics

Based on benchmark tests, CrewAI exhibits unique performance trade-offs:

The CrewAI + NVIDIA NemoClaw Integration

In early 2026, CrewAI announced integration with NVIDIA’s NemoClaw stack, creating a powerful combination for secure enterprise deployment:

| Component | Role |
|---|---|
| CrewAI | High-level orchestration, agent roles, workflows |
| NVIDIA NemoClaw | Secure runtime, policy enforcement, privacy controls |
| NVIDIA OpenShell Runtime | Sandboxing, live policy updates, audit trails |

A key innovation is infrastructure-level policy enforcement: “Every action is enforced at the infrastructure level, not within the agent’s own code. This means that even if an agent’s internal logic changes or behaves unexpectedly, the runtime will still block any action that violates defined security policies”.
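The idea of enforcing policy outside the agent can be shown with a small wrapper that owns both the tools and the allow-list, so agent code can only reach tools through it. This is a conceptual sketch only and does not reflect the NemoClaw or OpenShell APIs; `SandboxRuntime`, `PolicyViolation`, and the tool names are invented for illustration.

```python
from typing import Callable, Dict, FrozenSet, List, Tuple


class PolicyViolation(Exception):
    """Raised when a tool call is not permitted by the runtime policy."""


class SandboxRuntime:
    """Hypothetical runtime: the allow-list lives outside the agent's own code."""

    def __init__(self, tools: Dict[str, Callable[[str], str]], allowed: FrozenSet[str]) -> None:
        self._tools = tools
        self._allowed = allowed
        self.audit_log: List[Tuple[str, str, str]] = []

    def call(self, agent: str, tool: str, arg: str) -> str:
        permitted = tool in self._allowed
        # Every attempt is recorded, whether or not it is allowed.
        self.audit_log.append((agent, tool, "allowed" if permitted else "blocked"))
        if not permitted:
            raise PolicyViolation(f"{agent} may not call {tool}")
        return self._tools[tool](arg)


tools = {
    "search": lambda query: f"results for {query}",
    "delete_records": lambda table: f"deleted {table}",
}
runtime = SandboxRuntime(tools, allowed=frozenset({"search"}))


def misbehaving_agent(rt: SandboxRuntime) -> str:
    rt.call("agent-1", "search", "pricing")
    try:
        # The agent's logic has gone wrong; the runtime still refuses the call.
        return rt.call("agent-1", "delete_records", "customers")
    except PolicyViolation as exc:
        return f"blocked: {exc}"


outcome = misbehaving_agent(runtime)
print(outcome)
print(runtime.audit_log)
```

The agent never holds a direct reference to `delete_records`, which is the property the quoted passage describes: changing the agent's behavior cannot widen what it is able to do.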

Part 5: Framework Comparison – Side by Side

At-a-Glance Comparison Table

Performance Benchmark Results

A comprehensive benchmark across 2,000 runs (5 tasks × 100 runs each × 4 frameworks) revealed significant differences:

Key Performance Insights

Task 2: Comparative Revenue Analysis (State Management)

- LangChain: “Completes the task in 5-6 steps without any detours. Since its state management is very simple, the overhead is nearly zero”.

- LangGraph: “The most stable framework thanks to its graph-based architecture. State is carried very cleanly throughout the run”.

- AutoGen: “Matches LangGraph nearly exactly in both token use and latency. When it encounters an error, it immediately updates its reasoning”.

- CrewAI: “Consumed nearly twice the tokens and took over three times as long. The multi-step verification process offers thorough but resource-intensive approach”.

Task 4: Error Resilience

- LangGraph & AutoGen: “Found alternative solutions autonomously. When the tool returned a rate limit warning, they decided to abandon the failing tool entirely and find an alternative path”.

- CrewAI: “Showed the lowest token usage but highest latency. When it received the 10-second wait warning, it spent more time in the ‘strategy planning’ phase”.

- LangChain: “Requires configuration for error resilience. Once properly configured, it reached the correct result using the same alternative path approach as LangGraph”.
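The "alternative path" behavior described in these results amounts to trying the next available tool when one reports a rate limit. A minimal sketch of that fallback logic follows. The tools, the `RateLimited` exception, and `call_with_fallback` are hypothetical and do not reproduce the benchmark's setup.

```python
from typing import Callable, List, Tuple


class RateLimited(Exception):
    """Signals that a tool asked the caller to wait before retrying."""


def primary_search(query: str) -> str:
    raise RateLimited("retry after 10 seconds")


def cached_search(query: str) -> str:
    return f"cached results for {query}"


def call_with_fallback(
    tools: List[Tuple[str, Callable[[str], str]]], query: str
) -> Tuple[str, str, List[str]]:
    errors: List[str] = []
    for name, tool in tools:
        try:
            return name, tool(query), errors
        except RateLimited as exc:
            # Abandon the failing tool and move on instead of waiting.
            errors.append(f"{name}: {exc}")
    raise RuntimeError("all tools failed: " + "; ".join(errors))


used, answer, errors = call_with_fallback(
    [("primary", primary_search), ("cache", cached_search)], "Q3 revenue"
)
print(used, answer, errors)
```

Whether the agent finds this path on its own or needs it configured up front is the difference the benchmark quotes draw between the frameworks.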

Part 6: Use Cases and Selection Guide

When to Choose LangChain/LangGraph

Example Use Cases:

- Capital One: Governance-focused agent deployments

- Coinbase: Automated regulated workflows

- Remote: Code execution agents for payroll data

When to Choose Microsoft Agent Framework (Formerly AutoGen)

Example Use Cases:

- Academic research: 94% task completion in multi-agent studies

- Complex reasoning: Coding + reviewing + execution teams

- Customer support: Tier-1 + escalation agents

When to Choose CrewAI

Example Use Cases:

- Shopify prototypes

- Research agents: AI-Q blueprint with Orchestrator, Planner, Researcher roles

- Continuous workflows: Self-evolving agents with safety controls

Part 7: Implementation Examples

LangChain – Basic Agent with Tools

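python

from langchain import hub
from langchain.agents import AgentExecutor, create_react_agent
from langchain.tools import tool
from langchain_openai import ChatOpenAI


@tool
def search(query: str) -> str:
    """Search for information online."""
    # Placeholder implementation; swap in a real search backend
    return f"Results for: {query}"


tools = [search]
model = ChatOpenAI(model="gpt-4o")

# Classic (pre-1.0) LangChain API: a ReAct prompt plus an executor that runs the tool loop
prompt = hub.pull("hwchase17/react")
agent = create_react_agent(model, tools, prompt)
executor = AgentExecutor(agent=agent, tools=tools)

result = executor.invoke({"input": "Find information about quantum computing"})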

AutoGen (Classic) – Two-Agent Support System

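python

import os

from autogen import AssistantAgent

# Placeholder model settings; adjust the model name and key source to your setup
llm_config = {"config_list": [{"model": "gpt-4o", "api_key": os.environ["OPENAI_API_KEY"]}]}

support = AssistantAgent(
    name="SupportAgent",
    system_message="Answer concisely. If complex, emit [ESCALATE] + reason.",
    llm_config=llm_config,
)

escalation = AssistantAgent(
    name="EscalationAgent",
    system_message="Produce handoff: 'Escalated to human: <summary>'",
    llm_config=llm_config,
)


# Router logic: generate_reply expects a list of chat messages, not a bare string
def handle_query(query):
    response = support.generate_reply(messages=[{"role": "user", "content": query}])
    if "[ESCALATE]" in str(response):
        handoff = [{"role": "user", "content": f"Handle: {response}"}]
        return escalation.generate_reply(messages=handoff)
    return response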

CrewAI – Research Crew

python

from crewai import Agent, Task, Crew
from crewai_tools import SerperDevTool

# Each agent needs a role, a goal, and a backstory; the backstories here are placeholders
researcher = Agent(
    role="Researcher",
    goal="Find latest information on {topic}",
    backstory="An analyst who tracks developments in {topic}.",
    tools=[SerperDevTool()],
    verbose=True,
)

writer = Agent(
    role="Writer",
    goal="Synthesize findings into clear report",
    backstory="A technical writer who turns research notes into reports.",
    verbose=True,
)

research_task = Task(
    description="Research {topic} thoroughly",
    agent=researcher,
    expected_output="Key findings",
)

write_task = Task(
    description="Write report based on research",
    agent=writer,
    expected_output="Final report",
)

# Tasks run in list order; {topic} is filled in from the kickoff inputs
crew = Crew(agents=[researcher, writer], tasks=[research_task, write_task])

result = crew.kickoff(inputs={"topic": "AI agents"})

Part 8: MHTECHIN’s Expertise in Agentic Frameworks

At MHTECHIN, we specialize in building enterprise-grade AI agents using the leading orchestration frameworks. Our expertise spans:

- Custom Agent Development: Tailored solutions using LangChain, LangGraph, CrewAI, and Microsoft Agent Framework

- Multi-Agent Orchestration: Complex workflows with 5-12 specialized agents collaborating on tasks

- Enterprise Integration: Secure connections to SAP, Salesforce, ServiceNow, and custom APIs

- Production Deployment: Scalable, observable agent systems with comprehensive monitoring

MHTECHIN’s solutions leverage best practices from frameworks with 126,000+ GitHub stars and proven enterprise adoption. Whether you need rapid prototyping with CrewAI or production-scale governance with LangChain, we deliver reliable, cost-effective agentic systems.

Conclusion

The landscape of agentic AI frameworks has matured significantly in 2026. LangChain remains the production-ready choice for enterprises, with 500+ integrations and robust governance features. Microsoft Agent Framework (formerly AutoGen) provides unparalleled flexibility for multi-agent research and experimentation. CrewAI offers the fastest path to role-based, collaborative agents with clear mental models.

Key Takeaways:

- LangChain/LangGraph leads in production readiness, token efficiency, and enterprise governance

- AutoGen/MAF excels in multi-agent conversation patterns and emergent behaviors

- CrewAI provides the fastest prototyping and most intuitive role-based collaboration

- Performance differences are significant: in the state-management benchmark, CrewAI used nearly twice the tokens and took over three times as long, though it matches other frameworks in complex scenarios

- Error resilience varies dramatically—LangGraph and AutoGen automatically find alternative paths; LangChain requires configuration

The choice of framework depends on your specific needs. As the LangChain team wisely noted, “Good frameworks encode best practices into the framework itself, reduce boilerplate code, make it easier to reach a higher level of quality, create standards and readability across large teams, and pave a cleaner path to production”.

Frequently Asked Questions (FAQ)

Q1: What is the best AI agent framework in 2026?

There is no single “best” framework; it depends on your needs. LangChain is best for production enterprises, AutoGen/MAF for research and multi-agent experiments, and CrewAI for rapid prototyping with role-based teams.

Q2: How do LangChain and AutoGen differ?

LangChain focuses on chain-based orchestration with modular components. AutoGen (now Microsoft Agent Framework) specializes in conversational multi-agent systems where agents communicate like team members.

Q3: Is CrewAI production-ready?

Yes. CrewAI powers roughly 2 billion agentic executions and is used by more than 60% of Fortune 500 companies.

Q4: What happened to AutoGen in 2025?

AutoGen merged with Semantic Kernel to form Microsoft Agent Framework (MAF), combining AutoGen’s multi-agent capabilities with Semantic Kernel’s enterprise features.

Q5: Which framework has the lowest cost?

CrewAI has the lowest cost at $0.12-0.15 per query. LangChain averages $0.18, and AutoGen averages $0.35.

Q6: Which framework is fastest?

LangChain and LangGraph have the lowest latency for simple tasks. LangGraph’s state machine architecture provides exceptional stability for complex workflows.

Q7: Which framework is best for multi-agent systems?

AutoGen (now MAF) pioneered multi-agent collaboration with its GroupChat pattern, and CrewAI excels at role-based multi-agent teams.

Q8: How do I get started with these frameworks?

LangChain offers extensive documentation and LangSmith for observability. AutoGen’s classic API remains great for learning. CrewAI’s intuitive API lets you build a working crew in under 3 hours.
