Introduction

When most people think of artificial intelligence, they imagine massive data centers—rows of servers consuming enormous amounts of electricity, processing data from millions of users in the cloud. And for many AI applications, that is exactly how it works. But a quieter revolution is underway: AI is moving from the cloud to the edge.

Edge AI refers to artificial intelligence that runs directly on devices—smartphones, cameras, sensors, cars, industrial equipment—rather than sending data to the cloud for processing. Your phone unlocking with facial recognition? That is Edge AI. Your car warning you when you drift out of your lane? Edge AI. A factory robot inspecting products on an assembly line in real time? Edge AI.

Edge AI is transforming what is possible with artificial intelligence. It enables applications that require privacy, low latency, offline operation, and real-time decision-making, all of which cloud-based AI struggles to deliver on its own.

This article explains what Edge AI is, why it matters, how it works, and where it is being deployed in 2026. Whether you are a business leader evaluating AI investments, a product manager designing intelligent devices, or someone building foundational AI literacy, this guide will help you understand the shift from cloud to edge.

For a foundational understanding of how AI systems learn and process information, you may find our guide on Supervised vs Unsupervised vs Reinforcement Learning helpful as a starting point.

Throughout, we will highlight how MHTECHIN helps organizations design and deploy Edge AI solutions that balance performance, privacy, and cost.

Section 1: What Is Edge AI?

1.1 A Simple Definition

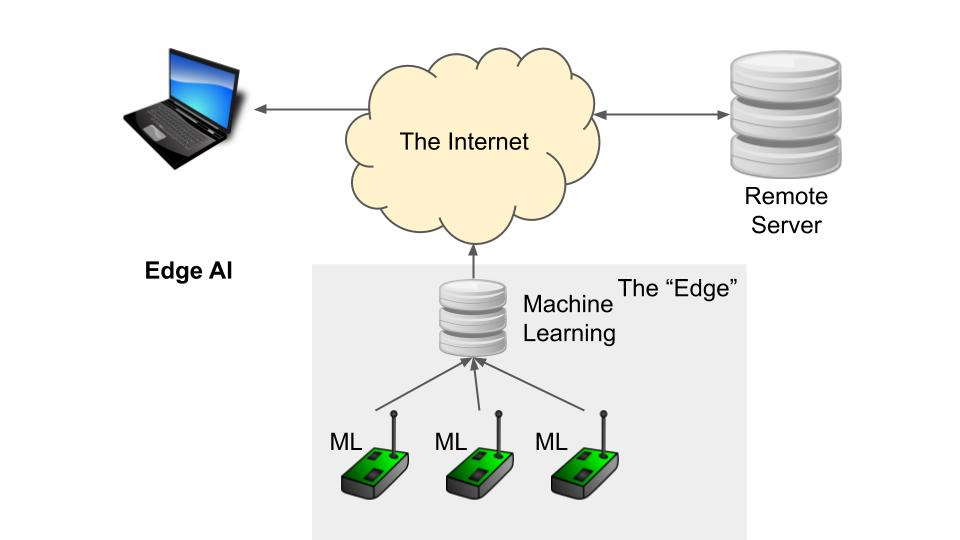

Edge AI refers to artificial intelligence that runs on devices at the “edge” of the network—where data is generated—rather than in centralized cloud data centers. The AI model resides on the device itself, processing data locally without sending it to the cloud.

The “edge” can be any device: a smartphone, a security camera, a wearable watch, a car, a factory sensor, a medical device, or a smart home speaker. Edge AI brings intelligence directly to these devices.

1.2 Edge AI vs. Cloud AI

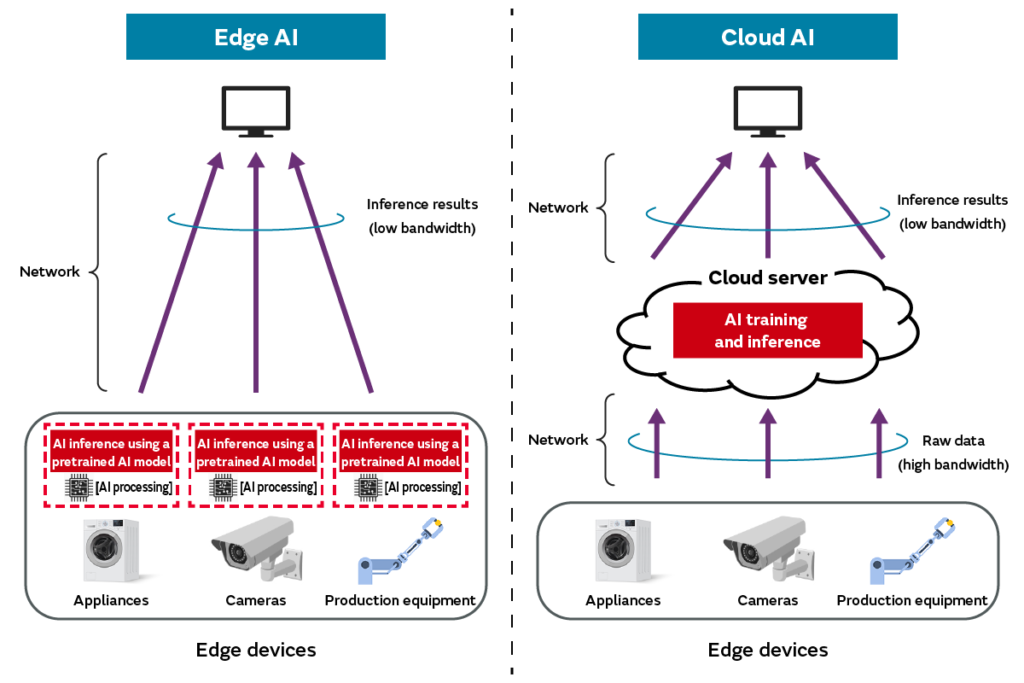

The traditional AI deployment model is cloud AI: data is sent from devices to centralized servers, where powerful models process it, and results are sent back. This works well for many applications but has limitations.

Edge AI flips the model: the AI runs on the device itself. Data stays local. Processing happens in real time. Results are immediate. No network connection is required.

| Dimension | Cloud AI | Edge AI |
|---|---|---|
| Where processing happens | Centralized data centers | On the device itself |
| Latency | Milliseconds to seconds (depends on network) | Milliseconds (immediate) |
| Network dependency | Required | Optional or none |
| Privacy | Data leaves the device | Data stays on the device |
| Cost | Ongoing cloud compute and bandwidth costs | Upfront device cost; no ongoing cloud fees |
| Scalability | Scales with cloud resources | Scales with number of devices |
| Updates | Centralized model updates | Distributed updates; more complex |

1.3 Why Edge AI Matters

Edge AI is not just a technical curiosity. It enables entirely new classes of applications:

Privacy. Sensitive data—medical information, biometrics, personal conversations—never leaves the device. Facial recognition on your phone works without sending your face to the cloud.

Latency. Some decisions cannot wait for a round trip to the cloud. A self-driving car must brake in milliseconds. An industrial robot must stop immediately when a hand enters a danger zone.

Bandwidth. Sending continuous video streams to the cloud is expensive. Edge AI processes video locally, sending only alerts or metadata.

Offline operation. Edge AI can run inference with no internet connection. That suits agricultural sensors in remote fields, medical devices in rural clinics, and military applications in disconnected environments.

Cost. Eliminating cloud processing costs can dramatically reduce total cost of ownership for large-scale deployments.

Section 2: How Edge AI Works

2.1 From Cloud Training to Edge Deployment

Edge AI follows a two-phase process:

Training (in the cloud or data center). The AI model is trained on powerful infrastructure using large datasets. This is where the model learns its capabilities.

Deployment (on the edge). The trained model is optimized for the target device—compressed, quantized, and packaged—then deployed to run locally on edge hardware.

The training phase requires significant compute. The deployment phase requires efficiency—the model must run on devices with limited processing power, memory, and battery.

2.2 Model Optimization for Edge Devices

Cloud models are often too large and too slow for edge devices. Optimization techniques shrink models while preserving accuracy:

Quantization. Reducing the precision of model weights from 32-bit floating point to 8-bit integers or lower. Going from 32 bits to 8 bits per weight shrinks the weights to roughly a quarter of their original size (about 75% smaller), often with only a small loss in accuracy.

Pruning. Removing neural network connections that contribute little to accuracy. This reduces model size and inference time.

Knowledge distillation. Training a smaller “student” model to mimic a larger “teacher” model. The result is a compact model with comparable performance.

Hardware acceleration. Edge devices increasingly include specialized AI processors—neural processing units (NPUs), tensor processing units (TPUs), or graphics processing units (GPUs)—designed specifically for efficient AI inference.
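The quantization step described above can be illustrated with a minimal sketch. It assumes symmetric per-tensor scaling, which is only one of several quantization schemes, and the weight values are made up for illustration. Real toolchains also quantize activations and calibrate on sample data, which this sketch leaves out.

```python
def quantize_int8(weights):
    """Map float weights to integers in [-127, 127] with one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from the integer values."""
    return [v * scale for v in quantized]


# Hypothetical weights, chosen only for illustration.
weights = [0.82, -1.27, 0.05, 0.0, 0.64, -0.31]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

# Worst-case rounding error is bounded by half a quantization step.
max_error = max(abs(a - b) for a, b in zip(weights, restored))

# Storage: 4 bytes per float32 weight versus 1 byte per int8 weight.
fp32_bytes = len(weights) * 4
int8_bytes = len(weights) * 1
size_reduction = 1 - int8_bytes / fp32_bytes

print("quantized:", q)
print("max rounding error:", max_error)
print("size reduction:", size_reduction)
```

The size reduction comes purely from the narrower data type. The accuracy impact depends on the model and data, so it has to be measured rather than assumed.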

2.3 Types of Edge Devices

Edge AI runs on a wide spectrum of devices:

| Device Type | Processing Capability | Examples |
|---|---|---|
| Smartphones | High (dedicated NPUs) | Face unlock, camera enhancements, voice assistants |
| Wearables | Low to moderate | Fitness tracking, heart rate analysis, fall detection |
| Cameras | Moderate (edge AI chips) | Security cameras with person detection, retail analytics |
| Automotive | High (dedicated AI accelerators) | Lane keeping, driver monitoring, obstacle detection |
| Industrial sensors | Low to moderate | Predictive maintenance, vibration analysis, quality inspection |
| Medical devices | Moderate to high | Continuous glucose monitors, portable ultrasound AI |
| Smart home devices | Low to moderate | Voice wake word detection, occupancy sensing |

Section 3: Why Edge AI Is Exploding in 2026

3.1 Hardware Advances

The biggest enabler of Edge AI is hardware. In 2026, even modest devices contain dedicated AI processors. Qualcomm, Apple, Google, and others have integrated neural processing units (NPUs) into their mobile chips. These NPUs can run complex AI models while consuming minimal power—often under one watt.

Specialized edge AI chips from companies like NVIDIA (Jetson), Google (Coral), and startups are making it possible to deploy AI on cameras, sensors, and industrial equipment with unprecedented efficiency.

3.2 Model Efficiency

Models themselves are becoming more efficient. Techniques like quantization and pruning, efficient architectures (MobileNet, EfficientNet), and the TinyML practice of running models on microcontrollers allow sophisticated AI to run on devices with limited memory and compute.

Large language models are even being compressed to run on smartphones—something unimaginable just a few years ago.

3.3 Privacy and Regulatory Pressures

Privacy regulations like GDPR, HIPAA, and emerging AI regulations place growing conditions on how sensitive data is transferred and processed. Edge AI offers one answer: when raw data stays on the device, both the compliance burden and the privacy risk shrink.

3.4 Network Constraints

5G and advanced Wi-Fi help, but they do not eliminate latency. For real-time applications—autonomous vehicles, industrial control, surgical robotics—the delay inherent in cloud processing is unacceptable. Edge AI provides the immediacy these applications require.

Section 4: Real-World Edge AI Applications

4.1 Smartphones: The Most Common Edge AI

Every modern smartphone is an Edge AI device. Facial recognition runs entirely on the device—your face never leaves your phone. Camera enhancements—scene detection, portrait mode, night mode—are processed locally. Voice assistants use on-device wake word detection, only sending audio to the cloud after you say “Hey Siri” or “Okay Google.” Live translation features increasingly run on-device, enabling real-time conversation without cloud dependency.

4.2 Automotive: Safety-Critical Edge AI

Self-driving and driver-assist systems are quintessential Edge AI applications. Lane-keeping systems process camera data locally to keep the vehicle centered. Driver monitoring detects drowsiness or distraction and alerts the driver immediately. Obstacle detection identifies pedestrians, vehicles, and obstacles in milliseconds. These decisions cannot wait for cloud round trips—Edge AI is essential for safety.

4.3 Industrial IoT and Manufacturing

Factories are deploying Edge AI for real-time quality inspection. Cameras on assembly lines use computer vision to detect defects—scratches, misalignments, missing components—with superhuman speed. Predictive maintenance sensors analyze vibration and temperature data locally, flagging anomalies before equipment fails. These systems operate reliably even in environments with limited or no network connectivity.
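As a rough illustration of local anomaly flagging, the sketch below uses a rolling z-score over recent readings. This is a simple statistical stand-in, not a description of any particular product, and the class name, window size, threshold, and sample readings are all assumptions made for the example.

```python
from collections import deque
from statistics import mean, stdev


class VibrationMonitor:
    """Flags readings that deviate strongly from recent history (hypothetical helper)."""

    def __init__(self, window=20, threshold=3.0, min_history=5):
        self.readings = deque(maxlen=window)
        self.threshold = threshold
        self.min_history = min_history

    def update(self, value):
        """Return True if the value looks anomalous relative to the window."""
        is_anomaly = False
        if len(self.readings) >= self.min_history:
            mu = mean(self.readings)
            sigma = stdev(self.readings)
            if sigma > 0 and abs(value - mu) / sigma > self.threshold:
                is_anomaly = True
        # Keep anomalies out of the baseline so one spike does not mask the next.
        if not is_anomaly:
            self.readings.append(value)
        return is_anomaly


monitor = VibrationMonitor(window=20, threshold=3.0)
normal = [1.00, 1.02, 0.98, 1.01, 0.99, 1.03, 0.97, 1.00]  # made-up amplitudes
flags = [monitor.update(v) for v in normal + [1.80]]
print(flags)
```

Only the flag needs to leave the device, which is why this pattern tolerates poor connectivity. A production system would more likely use a trained model and would need tuning against real sensor data.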

4.4 Healthcare and Medical Devices

Edge AI is transforming medical devices. Continuous glucose monitors analyze sensor data locally, alerting patients to dangerous trends without sending sensitive health data to the cloud. Portable ultrasound devices use AI to guide users to the correct imaging plane and highlight potential abnormalities. Wearable ECG monitors detect arrhythmias in real time, providing immediate alerts.

4.5 Retail and Smart Spaces

Retailers use Edge AI for inventory management. Cameras on shelves detect out-of-stock items and alert staff. Customer analytics—traffic patterns, dwell times, demographic estimates—run on edge cameras, sending only aggregated statistics to the cloud. This preserves customer privacy while providing valuable insights.

4.6 Agriculture

Farmers deploy Edge AI on drones and field sensors. Drone-based crop health analysis identifies disease or nutrient deficiencies in real time, enabling targeted interventions. Livestock monitoring systems track animal health and behavior, alerting farmers to issues without requiring constant human observation.

4.7 Security and Surveillance

Edge AI cameras can detect faces, vehicles, and unusual behavior without streaming video to the cloud. A camera might send an alert only when a person enters a restricted area, dramatically reducing bandwidth and cloud storage costs. Privacy is also enhanced—video is processed locally and never stored or transmitted unnecessarily.

Section 5: Benefits of Edge AI

5.1 Privacy and Security

Data stays on the device. For sensitive applications such as healthcare, biometrics, and personal conversations, this is a fundamental advantage. Because raw data is not transmitted or stored centrally, Edge AI greatly reduces exposure to data breaches in transit or in the cloud, although the device itself still has to be secured. It can also simplify compliance with regulations like GDPR and HIPAA.

5.2 Low Latency

Edge AI responds in milliseconds. For autonomous vehicles, industrial control, and real-time safety systems, this is non-negotiable. Cloud AI introduces variable latency—network congestion, server load, geographic distance—that can be fatal in safety-critical applications.
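To put latency in physical terms, the sketch below computes how far a vehicle travels while waiting for a decision. The speed and the two delay figures are illustrative assumptions, not measurements of any real system.

```python
def distance_during_delay(speed_kmh, delay_ms):
    """Metres travelled at a constant speed during a given delay."""
    speed_m_per_s = speed_kmh * 1000 / 3600
    return speed_m_per_s * delay_ms / 1000


SPEED_KMH = 100  # assumed highway speed

# Assumed delays: values chosen for illustration only.
scenarios = [
    ("on-device inference", 10),
    ("cloud round trip", 100),
]

for label, delay_ms in scenarios:
    metres = distance_during_delay(SPEED_KMH, delay_ms)
    print(f"{label}: {delay_ms} ms -> {metres:.2f} m travelled")
```

Under these assumptions, a 100 ms round trip corresponds to roughly 2.8 m of travel at 100 km/h, compared with under 0.3 m for a 10 ms local decision.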

5.3 Bandwidth and Cost Efficiency

Transmitting data to the cloud consumes bandwidth and incurs costs. Edge AI processes data locally, sending only results, which are often tiny compared to the raw data. A security camera might send an alert (“person detected at 3:15 PM”) rather than streaming video continuously. Across thousands of devices, these savings are enormous.
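A back-of-the-envelope calculation makes the difference concrete. The bitrate, alert count, and alert size below are assumed values for a single hypothetical camera. Real figures vary widely with resolution, codec, and scene activity.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def daily_stream_bytes(bitrate_mbps):
    """Bytes uploaded per day by a continuous video stream."""
    return bitrate_mbps * 1_000_000 / 8 * SECONDS_PER_DAY


def daily_alert_bytes(alerts_per_day, bytes_per_alert):
    """Bytes uploaded per day when only alerts are sent."""
    return alerts_per_day * bytes_per_alert


# Assumed values for one camera.
stream = daily_stream_bytes(bitrate_mbps=2)
alerts = daily_alert_bytes(alerts_per_day=50, bytes_per_alert=200)

print(f"continuous stream: {stream / 1e9:.1f} GB per day")
print(f"alerts only:       {alerts / 1e3:.1f} KB per day")
print(f"ratio:             {stream / alerts:,.0f}x")
```

With these assumptions, the stream uploads about 21.6 GB per day while the alerts total about 10 KB.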

5.4 Offline Operation

Edge AI works anywhere. Remote agricultural sensors, offshore oil rigs, rural clinics, and military applications cannot rely on consistent internet connectivity. Edge AI enables intelligent systems to function in disconnected environments.

5.5 Scalability

Cloud AI costs scale with usage—more devices, more data, more compute. Edge AI costs scale with devices—an upfront hardware cost but no ongoing cloud fees. For large-scale deployments with thousands or millions of devices, Edge AI can be dramatically more cost-effective.
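The trade-off can be framed as a break-even calculation. The sketch below is a simplified model with hypothetical prices. It also includes a small per-device upkeep term for the edge option (for example, update delivery and monitoring), which is an assumption added here and can be set to zero.

```python
def cumulative_cost_cloud(devices, months, cloud_fee_per_device_month):
    """Total cloud spend: a recurring fee per device per month."""
    return devices * months * cloud_fee_per_device_month


def cumulative_cost_edge(devices, months, extra_hardware_per_device,
                         upkeep_per_device_month=0.0):
    """Total edge spend: upfront hardware plus optional recurring upkeep."""
    return devices * (extra_hardware_per_device + months * upkeep_per_device_month)


def break_even_months(extra_hardware_per_device, cloud_fee_per_device_month,
                      upkeep_per_device_month=0.0):
    """Months until edge becomes cheaper, or None if it never does."""
    monthly_saving = cloud_fee_per_device_month - upkeep_per_device_month
    if monthly_saving <= 0:
        return None
    return extra_hardware_per_device / monthly_saving


# Hypothetical prices, for illustration only.
months = break_even_months(extra_hardware_per_device=30.0,
                           cloud_fee_per_device_month=2.5,
                           upkeep_per_device_month=0.5)
print("break-even after", months, "months")
```

With these numbers the edge option pays for itself after 15 months. Devices with shorter lifetimes, or workloads with lower cloud fees, shift the answer toward the cloud.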

Section 6: Challenges of Edge AI

6.1 Hardware Constraints

Edge devices have limited processing power, memory, and battery life. Running sophisticated AI models within these constraints requires careful optimization. Not every AI application can be compressed to run on edge hardware—some tasks still require cloud-scale compute.

6.2 Model Management

Updating AI models on thousands or millions of edge devices is complex. Unlike cloud AI, where a single model update serves all users, edge AI requires distributed updates. Ensuring all devices run the correct version, handling failed updates, and managing version fragmentation are significant operational challenges.
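One piece of this, deciding on the device whether a downloaded model should be installed, can be sketched as follows. The manifest fields and function names are hypothetical. A checksum only detects corruption, so a real deployment would also verify a cryptographic signature to confirm who published the update.

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    """Hex digest of the payload, used to detect corrupted downloads."""
    return hashlib.sha256(data).hexdigest()


def parse_version(version: str):
    """Turn '1.10.0' into (1, 10, 0) so versions compare numerically."""
    return tuple(int(part) for part in version.split("."))


def should_install(current_version: str, manifest: dict, payload: bytes):
    """Return (decision, reason) for a downloaded model update."""
    if parse_version(manifest["version"]) <= parse_version(current_version):
        return False, "not newer"
    if sha256_hex(payload) != manifest["sha256"]:
        return False, "checksum mismatch"
    return True, "ok"


# Hypothetical update: the bytes stand in for a packaged model file.
payload = b"model-bytes-v1.3.0"
manifest = {"version": "1.3.0", "sha256": sha256_hex(payload)}

print(should_install("1.2.9", manifest, payload))
print(should_install("1.2.9", manifest, payload + b"corrupted"))
print(should_install("1.3.0", manifest, payload))
```

Rejecting a bad download while keeping the current model running is what lets a fleet recover from failed updates. Tracking which version each device reports back is a separate, server-side concern.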

6.3 Security and Tampering

Edge devices are physically accessible. A malicious actor could potentially extract models, reverse-engineer them, or tamper with device behavior. Securing edge AI requires hardware-level security measures—secure enclaves, encrypted storage, and tamper detection.

6.4 Testing Complexity

Testing edge AI is more complex than cloud AI. Devices operate in diverse environments—different lighting, network conditions, hardware variations. Ensuring consistent performance across all conditions requires extensive field testing.

6.5 Development Complexity

Developing for edge AI requires expertise in both AI and embedded systems. Teams must understand model optimization, hardware constraints, and deployment pipelines—a combination of skills that is still relatively rare.

Section 7: How MHTECHIN Helps with Edge AI

Edge AI requires specialized expertise—in model optimization, hardware selection, and distributed deployment. MHTECHIN helps organizations design and deploy edge AI solutions that balance performance, privacy, and cost.

7.1 For Strategy and Architecture

MHTECHIN helps organizations determine whether edge AI is the right approach. For applications requiring privacy, low latency, offline operation, or cost efficiency at scale, edge AI may be ideal. For applications where cloud-scale compute is needed or where device constraints are prohibitive, cloud AI may be appropriate. Often, the optimal solution is hybrid—edge for real-time decisions, cloud for training and complex analytics.

7.2 For Model Optimization

MHTECHIN optimizes AI models for edge deployment—quantization, pruning, knowledge distillation, and hardware-specific acceleration. The goal is to preserve accuracy while ensuring models run efficiently on target devices.

7.3 For Hardware Selection

MHTECHIN helps organizations select the right edge hardware for their use case. Options range from smartphones and wearables to specialized edge AI cameras, industrial gateways, and automotive-grade systems. The choice depends on processing requirements, power constraints, environmental conditions, and cost.

7.4 For Deployment and Management

MHTECHIN designs deployment pipelines for edge AI—over-the-air updates, device management, monitoring, and security. The goal is to ensure that edge devices remain current, secure, and performant over their lifetime.

7.5 The MHTECHIN Approach

MHTECHIN’s edge AI practice combines AI expertise with embedded systems engineering. The team understands both the capabilities of modern edge hardware and the constraints of real-world deployment. For organizations building intelligent devices, MHTECHIN provides the expertise to deliver edge AI that works reliably at scale.

Section 8: Frequently Asked Questions

8.1 Q: What is Edge AI in simple terms?

A: Edge AI is artificial intelligence that runs directly on devices—smartphones, cameras, cars, sensors—rather than sending data to the cloud for processing. It enables privacy, low latency, offline operation, and reduced bandwidth costs.

8.2 Q: How is Edge AI different from cloud AI?

A: Cloud AI processes data in centralized data centers; Edge AI processes data on the device itself. Edge AI offers lower latency, better privacy, offline capability, and lower ongoing costs. Cloud AI offers greater compute power and easier model updates.

8.3 Q: What are examples of Edge AI I use every day?

A: Face unlock on your phone, camera scene optimization, voice assistant wake word detection, lane-keeping assistance in your car, and fitness tracking on your smartwatch are all examples of Edge AI.

8.4 Q: Can large language models run on edge devices?

A: Increasingly, yes. Through techniques like quantization, pruning, and efficient architectures, smaller versions of LLMs can run on high-end smartphones and dedicated edge AI hardware. Full-scale models still require cloud compute, but compressed versions are becoming capable for many applications.
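A quick estimate shows why compression matters here. The sketch below computes the memory needed for model weights alone at different precisions. The 3-billion-parameter size is an assumed example, and the figures exclude activations, caches, and runtime overhead.

```python
def weight_memory_gb(num_parameters, bits_per_weight):
    """Approximate storage for model weights, in gigabytes (10^9 bytes)."""
    return num_parameters * bits_per_weight / 8 / 1e9


PARAMS = 3_000_000_000  # assumed model size, for illustration

for bits in (16, 8, 4):
    print(f"{bits}-bit weights: {weight_memory_gb(PARAMS, bits):.1f} GB")
```

Under these assumptions the weights drop from 6 GB at 16 bits to 1.5 GB at 4 bits, which is the difference between not fitting and fitting in the memory of many phones.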

8.5 Q: Is Edge AI more private than cloud AI?

A: Generally, yes. With Edge AI, raw data can stay on the device, which avoids the risks associated with data transmission and cloud storage. For sensitive applications such as healthcare, biometrics, and personal data, Edge AI offers significant privacy advantages, provided the device itself is secured.

8.6 Q: What hardware does Edge AI require?

A: Many modern devices include dedicated AI processors—neural processing units (NPUs) or tensor processing units (TPUs)—designed for efficient AI inference. For custom deployments, options include NVIDIA Jetson, Google Coral, and specialized edge AI chips from various vendors.

8.7 Q: How do you update Edge AI models?

A: Edge AI models are updated through over-the-air (OTA) updates. Models are optimized, packaged, and pushed to devices. Update strategies must handle devices with limited connectivity, ensure update integrity, and manage version fragmentation across large device fleets.

8.8 Q: Is Edge AI cheaper than cloud AI?

A: For large-scale deployments, often yes. Edge AI has upfront hardware costs but avoids most ongoing cloud compute and bandwidth fees. For small-scale or intermittent use, cloud AI may be more cost-effective. A hybrid approach is often optimal.

8.9 Q: Can Edge AI work without internet?

A: Yes. Edge AI runs entirely on the device, requiring no internet connection for inference. This is essential for remote applications, mobile devices, and safety-critical systems that must operate reliably regardless of connectivity.

8.10 Q: How does MHTECHIN help with Edge AI?

A: MHTECHIN helps organizations design, optimize, and deploy Edge AI solutions. We provide strategy, model optimization, hardware selection, deployment pipelines, and ongoing management—ensuring edge AI delivers performance, privacy, and reliability at scale.

Section 9: Conclusion—The Shift to the Edge

Edge AI represents a fundamental shift in how artificial intelligence is deployed. For years, the dominant model was cloud-centric: send data to powerful servers, process it, return results. That model works—but it has limitations that Edge AI addresses.

Edge AI enables applications that require privacy, low latency, offline operation, and cost efficiency at scale. It powers facial recognition on your phone, lane-keeping in your car, defect detection in factories, and health monitoring on your wrist. It is not replacing cloud AI; it is complementing it, handling the workloads that cloud AI cannot.

In 2026, the trend is clear: AI is moving to the edge. Hardware is becoming more powerful and efficient. Models are becoming smaller without sacrificing accuracy. And organizations are discovering that for many applications, the best place for AI is not in a distant data center—it is on the device itself.

For organizations building intelligent systems, the question is no longer “cloud or edge?” but “how do we combine them effectively?” The future is hybrid—edge for real-time, private, low-latency decisions; cloud for training, complex analysis, and coordination at scale.

Ready to bring AI to the edge? Explore MHTECHIN’s Edge AI solutions at www.mhtechin.com. From strategy through deployment, our team helps you design and deploy intelligent systems that run anywhere.

This guide is brought to you by MHTECHIN—helping organizations deploy AI at the edge, from smartphones to industrial systems. For personalized guidance on Edge AI strategy or implementation, reach out to the MHTECHIN team today.
