Introduction

You ask an AI assistant a straightforward question: “Who won the Nobel Prize in Physics in 1956?” It confidently responds with a name, a discovery, and a detailed biography. The only problem? The answer is completely wrong. The person never existed. The discovery never happened.

This is an AI hallucination—when an artificial intelligence system generates information that is confident, plausible-sounding, and entirely false. It is one of the most significant challenges facing AI deployment today. Hallucinations undermine trust, create risk, and limit the use of AI in high-stakes applications like healthcare, finance, and law.

In 2026, as AI systems become more capable and more embedded in critical workflows, understanding hallucinations—why they happen, how to detect them, and how to prevent them—is essential for anyone using or deploying AI.

This article explains what AI hallucinations are, why they occur, real-world examples of their impact, and practical strategies to prevent them. Whether you are a business leader deploying AI, a professional using AI tools, or someone building foundational AI literacy, this guide will help you navigate this critical challenge.

For a foundational understanding of how generative AI works, you may find our guide on Generative AI vs Predictive AI: Which One Do You Need? helpful as a starting point.

Throughout, we will highlight how MHTECHIN helps organizations deploy AI responsibly—with strategies to detect, mitigate, and prevent hallucinations.

Section 1: What Are AI Hallucinations?

1.1 A Simple Definition

An AI hallucination occurs when an AI system generates output that is factually incorrect, nonsensical, or not grounded in its training data or in the sources it was given, yet presents it with confidence and authority.

The term “hallucination” comes from the human experience: seeing or hearing something that is not actually there. Similarly, an AI hallucination is a fabrication presented as truth.

Hallucinations are not the same as intentional deception. The AI is not lying—it has no concept of truth or falsehood. It is generating text based on patterns it has learned, and sometimes those patterns produce outputs that do not correspond to reality.

1.2 Hallucinations vs. Errors vs. Misinformation

| Term | Definition | Example |
|---|---|---|
| Hallucination | AI confidently generates fabricated information | AI cites a court case or research paper that does not exist |
| Factual Error | AI retrieves or infers incorrect information | AI misstates a well-known fact, such as the year of a real historical event, due to gaps in its training data |
| Misinformation | AI repeats false information from its training data | AI repeats a conspiracy theory that appears in its training corpus |
| Adversarial Output | AI is deliberately manipulated to produce harmful content | Prompt injection tricks AI into ignoring its safety guidelines |

All are problematic, but hallucinations are particularly challenging because the output is often original fabrication, not just a repetition of bad data.

1.3 Why Hallucinations Matter

Hallucinations are not just academic curiosities. They have real-world consequences:

- Erosion of trust. If an AI assistant gives wrong answers, users stop relying on it.

- Operational risk. In business applications, hallucinations can lead to wrong decisions, incorrect documentation, or misdirected resources.

- Legal and compliance risk. In regulated industries, AI-generated falsehoods can violate compliance requirements or create liability.

- Safety risk. In healthcare, a hallucinated medical fact could have serious consequences if not caught.

- Reputational damage. Public-facing AI tools that hallucinate undermine organizational credibility.

Section 2: Why Do AI Hallucinations Happen?

2.1 The Fundamental Cause: AI Does Not Understand

The root cause of hallucinations is simple: AI systems do not understand what they are saying.

Large language models like ChatGPT are trained to predict the next token (a word or word fragment) in a sequence. They learn patterns, including grammar, facts, and reasoning structures, from trillions of words of text. But they have no internal representation of truth, no grounding in reality, and no mechanism to verify facts.

When you ask a question, the model generates a response by repeatedly predicting a likely next token based on its training. It is not retrieving facts from a database. It is not “knowing” anything. It is performing statistical prediction.

Sometimes, the most statistically likely completion is a factually accurate statement. Sometimes, it is a plausible-sounding fabrication. The model has no way to tell the difference.
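To make this concrete, here is a deliberately tiny sketch. It is a bigram word counter rather than a neural network, and the four-sentence corpus is invented for illustration, but it shares the property described above: it always picks the most frequent continuation and has no step that checks the result against anything. Asked to continue “bohr”, it produces a fluent sentence that contradicts its own training data, because “1921” follows “in” more often than “1922” does.

```python
from collections import Counter, defaultdict

# Invented toy corpus. Note that it explicitly says bohr won "in 1922".
corpus = [
    "einstein won the prize in 1921",
    "einstein is famous for relativity",
    "the prize in 1921 went to einstein",
    "bohr won the prize in 1922",
]

def train_bigrams(sentences):
    """Count how often each word follows each other word; no facts are stored."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split()
        for prev, nxt in zip(words, words[1:]):
            counts[prev][nxt] += 1
    return counts

def generate(counts, start, max_words):
    """Greedy decoding: repeatedly append the most frequent follower of the last word."""
    words = [start]
    for _ in range(max_words):
        followers = counts.get(words[-1])
        if not followers:
            break  # no known continuation
        words.append(followers.most_common(1)[0][0])
    return " ".join(words)

model = train_bigrams(corpus)
print(generate(model, "bohr", max_words=5))  # prints: bohr won the prize in 1921
```

Real language models are vastly more sophisticated, but this shows in miniature how a statistically likely completion can be false.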

2.2 Specific Causes of Hallucinations

| Cause | Explanation |
|---|---|
| Training data gaps | The model lacks information about a topic, so it fills gaps with plausible-sounding text |
| Ambiguous prompts | Vague or poorly framed prompts lead the model to guess intent and produce wrong answers |
| Overconfidence in patterns | The model has learned patterns that sometimes lead to wrong but statistically common completions |
| No fact-checking mechanism | The model has no built-in ability to verify outputs against authoritative sources |
| Temperature settings | Higher “temperature” (randomness) settings increase creativity but also increase hallucination risk |
| Out-of-distribution inputs | The model is asked about something far from its training data and generalizes poorly |
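The temperature entry can be made concrete. In common sampling setups, the model's raw scores (logits) for candidate next tokens are divided by the temperature before a softmax turns them into probabilities. The sketch below uses made-up scores for three candidate tokens; it illustrates the general technique, not any particular vendor's implementation.

```python
import math

def softmax_with_temperature(logits, temperature):
    """Convert raw scores to probabilities; lower temperature sharpens, higher flattens."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical scores for three candidate next tokens.
candidates = ["1922", "1921", "1923"]
logits = [3.0, 2.0, 0.5]

for t in (0.5, 1.0, 2.0):
    probs = softmax_with_temperature(logits, t)
    print(t, [f"{c}: {p:.2f}" for c, p in zip(candidates, probs)])
```

At temperature 0.5 the top candidate receives most of the probability; at 2.0 the lower-ranked candidates become noticeably more likely to be sampled, which is one way a plausible but wrong token can slip into an answer.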

2.3 The Confidence Problem

One of the most challenging aspects of hallucinations is that AI models often express them with high confidence. A model does not know when it is wrong. It has no reliable internal signal of factual accuracy: the probabilities it assigns to words reflect how likely a phrasing is, not whether a claim is true. It simply generates text, correct or not, with the same fluency and authority.

This means you cannot rely on the AI’s tone to detect errors. A hallucination sounds just as confident as a correct answer.

2.4 Hallucinations Are Not Bugs—They Are Features

This is a crucial insight: hallucinations are not a bug that can be fixed with a simple patch. They are inherent to how large language models work.

Language models are designed to generate plausible text. Sometimes, the plausible text does not match reality. Unless the model is explicitly constrained (with retrieval mechanisms, grounding, or fact-checking), hallucinations will occur.

Leading AI companies acknowledge this. OpenAI, Google, and Anthropic all warn users that their models can make mistakes and that important information should be double-checked. Language models are text generators, not truth engines. Responsible use requires understanding this limitation.

Section 3: Real-World Examples of AI Hallucinations

3.1 Legal: Fake Citations in Legal Briefs

In a well-documented 2023 case, Mata v. Avianca, a lawyer used ChatGPT to prepare a legal brief. The AI generated citations to court cases that did not exist, complete with case numbers, judges, and plausible-sounding details. The brief was filed without the citations being verified. Opposing counsel and the court could not find the cases, and the lawyers involved were sanctioned.

This case illustrates the risk: hallucinations in high-stakes domains can have serious professional and legal consequences.

3.2 Healthcare: Fictional Medical Literature

A researcher asked an AI to summarize recent studies on a rare condition. The AI generated summaries of several papers—authors, journals, publication dates, findings. None of the papers existed. The researcher, who did not verify, nearly included fabricated sources in a grant application.

In healthcare, hallucinations could lead to misdiagnosis, incorrect treatment recommendations, or flawed research.

3.3 Journalism: Fabricated Quotes and Events

Newsrooms experimenting with AI-assisted writing have found that AI models sometimes invent quotes from real people, fabricate details about events, or create entirely fictional scenarios. These hallucinations, if published, erode trust and create liability.

3.4 Customer Service: Wrong Policies and Procedures

A company deployed an AI chatbot to answer customer questions about return policies. The AI, trained on a mix of current and outdated policy documents, sometimes generated answers that combined policies incorrectly—offering refund terms that did not exist. Customers acted on these answers, leading to confusion and frustration.

3.5 Software Development: Vulnerable or Non-Functional Code

AI code assistants sometimes generate code that looks correct but contains security vulnerabilities or does not function as described. A developer who deploys unverified AI-generated code could introduce bugs or security flaws.

3.6 Finance: Fictional Company Data

A financial analyst asked an AI to summarize a company’s quarterly earnings. The AI generated a summary with figures that were plausible but incorrect—mixing data from different quarters, inventing numbers, or misrepresenting performance.

Section 4: How to Detect Hallucinations

4.1 The First Line of Defense: Human Verification

The most effective way to detect hallucinations is human verification. AI outputs should be treated as drafts, not final answers. Humans who understand the domain can spot inconsistencies, implausible claims, and factual errors.

For high-stakes applications, AI-generated content should be reviewed by a subject-matter expert before use or publication.

4.2 Red Flags That May Indicate Hallucinations

| Red Flag | What to Look For |
|---|---|
| Overly specific details | Unusually precise dates, names, or numbers that seem too convenient |
| No sources | Claims without citations or references that can be verified |
| Plausible but unusual | Information that sounds correct but you have never heard before |
| Internal inconsistencies | The response contradicts itself or known facts |
| Confidence without evidence | Very assertive tone without supporting detail |
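Some of these red flags can be partly automated to decide which passages a human should check first. The sketch below is a crude heuristic, not a hallucination detector: the regular expressions are assumptions that approximate two rows of the table (“overly specific details” and “no sources”), and the bracketed-number and URL patterns stand in for whatever citation format your tool actually uses.

```python
import re

# Assumed patterns: four-digit years, percentages, and dollar amounts count as "specific".
SPECIFIC_DETAIL = re.compile(r"\b(1[0-9]{3}|20[0-9]{2})\b|\b\d+(\.\d+)?%|\$\d")
# Assumed citation formats: [1]-style markers, "(source:" notes, or URLs.
CITATION_MARKER = re.compile(r"\[\d+\]|\(source:|https?://", re.IGNORECASE)

def flag_sentences(text):
    """Return sentences that contain precise figures but no visible citation."""
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    return [
        s for s in sentences
        if SPECIFIC_DETAIL.search(s) and not CITATION_MARKER.search(s)
    ]

answer = ("Revenue grew 14.2% in the third quarter. "
          "The company was founded in 1987 [1]. "
          "Analysts were broadly positive.")
print(flag_sentences(answer))
```

Expect false positives (a correct but uncited figure is still flagged) and false negatives (a fabricated claim without numbers passes). The output is a review list, not a verdict.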

4.3 Cross-Verification Strategies

- Check against authoritative sources. If the AI provides a fact, verify it against a trusted source (official website, verified database, peer-reviewed publication).

- Use multiple AI tools. Ask the same question to different models. If they disagree, investigate further.

- Ask for citations. Some AI tools can provide sources. Always verify those sources actually exist and support the claim.

- Use retrieval-augmented generation (RAG). Systems that retrieve information from trusted sources before generating reduce hallucinations.
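The “use multiple AI tools” strategy can be sketched as a simple agreement check. The answers below are hypothetical placeholders, not output from real models, and the normalization is deliberately crude; free-form answers would need a looser comparison in practice.

```python
from collections import Counter

def normalize(answer):
    """Crude normalization so trivially different phrasings compare equal."""
    return " ".join(answer.lower().strip().rstrip(".").split())

def agreement(answers):
    """Return the most common normalized answer and the share of models that gave it."""
    if not answers:
        raise ValueError("need at least one answer")
    counts = Counter(normalize(a) for a in answers)
    top, n = counts.most_common(1)[0]
    return top, n / len(answers)

# Hypothetical answers from three models to: who won the 1956 Nobel Prize in Physics?
answers = {
    "model_a": "William Shockley, John Bardeen and Walter Brattain",
    "model_b": "william shockley, john bardeen and walter brattain.",
    "model_c": "Enrico Fermi",
}
top, share = agreement(list(answers.values()))
if share < 1.0:
    print(f"Models disagree (top answer share {share:.0%}); verify against a primary source.")
```

Disagreement is a useful signal to investigate, but agreement is not proof: models trained on similar data can share the same mistake, so critical facts still need an authoritative source.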

4.4 Prompting to Reduce Hallucinations

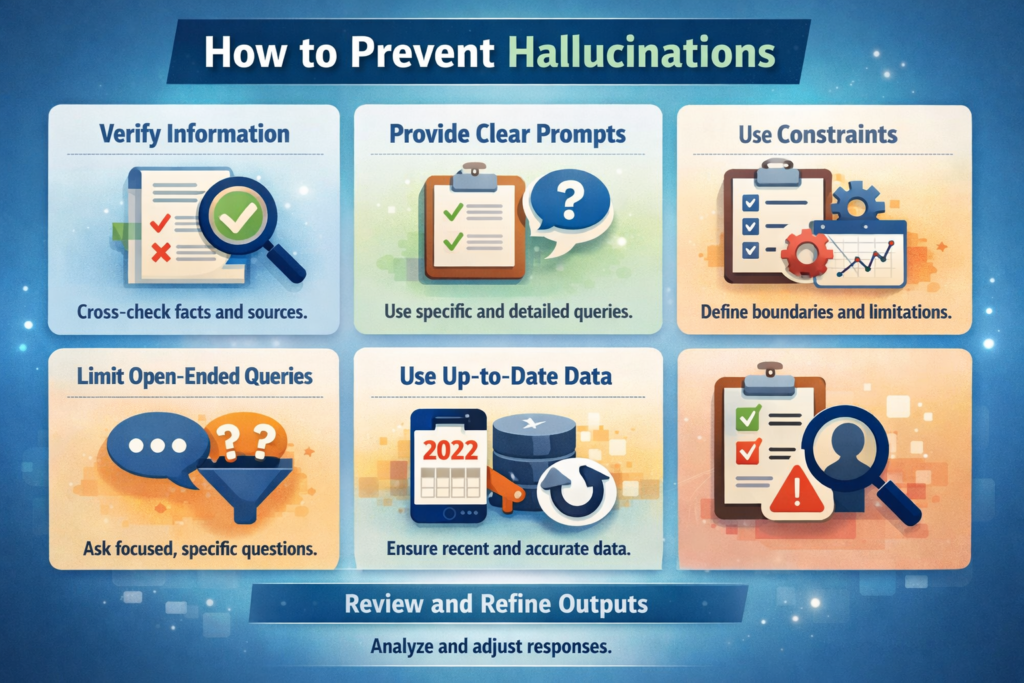

You can reduce hallucination risk through careful prompting:

- Ask for confidence levels. “If you are uncertain, say so.”

- Request sources. “Provide sources for any factual claims.”

- Specify constraints. “Only use information from [trusted source].”

- Ask for step-by-step reasoning. Chain-of-thought prompting can surface flawed assumptions.
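The four tactics above can be combined into a reusable prompt template. This is a minimal sketch; the function name, the SOURCE label, and the exact wording are illustrative assumptions rather than a recommended standard, and no prompt wording guarantees the model will comply.

```python
def build_grounded_prompt(question, source_text):
    """Assemble a prompt that applies the four prompting tactics in one place."""
    instructions = [
        # Specify constraints: restrict the model to the supplied text.
        "Answer using only the SOURCE text below.",
        # Request sources: tie every factual claim back to the text.
        "Quote or cite the part of the SOURCE behind each factual claim.",
        # Step-by-step reasoning: make flawed assumptions visible.
        "Work through your reasoning step by step, then give a short final answer.",
        # Confidence: give the model an explicit way out instead of guessing.
        "If the SOURCE does not contain the answer, or you are uncertain, say so instead of guessing.",
    ]
    return "\n".join(instructions) + f"\n\nSOURCE:\n{source_text}\n\nQuestion: {question}"

print(build_grounded_prompt(
    "What is the return window?",
    "Items may be returned within 30 days of delivery.",
))
```

Keeping the instructions in one function makes it easier to apply them consistently and to test changes to the wording.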

Section 5: How to Prevent Hallucinations in AI Systems

5.1 Technical Approaches

| Approach | How It Works | Effectiveness |
|---|---|---|
| Retrieval-Augmented Generation (RAG) | The AI retrieves relevant information from a trusted knowledge base before generating, then bases its response on the retrieved content rather than on training data alone | High: significantly reduces hallucinations by grounding responses |
| Fine-Tuning on Verified Data | The model is further trained on domain-specific, verified data to improve accuracy in that domain | Moderate: improves accuracy but does not eliminate hallucinations |
| Constitutional AI | Models are trained, using AI feedback, to follow a written set of principles, which can include guidance against fabrication | Moderate: reduces harmful outputs but not all hallucinations |
| Chain-of-Thought Verification | The AI is prompted to show its reasoning step by step, making errors more visible | Moderate: helps detect errors but does not prevent them |
| Source Citation | The AI is required to cite sources for its claims so users can verify them | High: enables verification, but the sources must actually be checked |
| Lower Temperature Settings | Reducing randomness makes outputs more predictable and less creative | Moderate: reduces hallucinations but also reduces creativity |
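Source citation only helps if citations are actually checked. A first, mechanical step is confirming that every cited source exists in your trusted set. The sketch below assumes, hypothetically, that the model was instructed to cite document IDs in square brackets; the IDs and policy text are invented.

```python
import re

def check_citations(answer, known_sources):
    """Split bracketed citations such as [policy-2024] into known and unknown IDs."""
    cited = re.findall(r"\[([^\[\]]+)\]", answer)
    known = [c for c in cited if c in known_sources]
    unknown = [c for c in cited if c not in known_sources]
    return known, unknown

# Hypothetical trusted sources, keyed by document ID.
sources = {
    "policy-2024": "Refunds are issued to the original payment method within 14 days.",
    "faq-7": "Gift cards cannot be exchanged for cash.",
}
answer = ("Refunds go back to your original payment method [policy-2024]. "
          "Store credit never expires [policy-2019].")
known, unknown = check_citations(answer, sources)
if unknown:
    print("Citations that match no known source:", unknown)
```

A citation that matches a real document still has to be read: this check catches invented sources, not claims the source fails to support.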

5.2 Retrieval-Augmented Generation (RAG) Explained

RAG is one of the most effective techniques for reducing hallucinations. Instead of relying solely on the model’s internal knowledge, the system:

- Takes the user’s query

- Searches a trusted knowledge base (documents, databases, verified sources)

- Retrieves relevant content

- Provides that content to the language model as context

- The model generates a response based on the retrieved content

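Because the response is grounded in retrieved, verified content, hallucinations are significantly reduced, though not eliminated: retrieval can surface the wrong documents, and the model can still misread or go beyond what it retrieved. RAG is a standard approach for enterprise applications where accuracy matters.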
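The five steps above can be sketched end to end. This is a minimal illustration under stated assumptions: the knowledge base is a hypothetical in-memory dictionary, retrieval uses simple word overlap as a stand-in for the embedding or search index a production system would typically use, and the final call to a language model (step 5) is left out rather than tied to any particular API.

```python
import re

# Hypothetical knowledge base; a real system would use a document store or vector index.
KNOWLEDGE_BASE = {
    "returns-policy": "Items may be returned within 30 days of delivery for a full refund.",
    "shipping-policy": "Standard shipping takes 3 to 5 business days.",
    "warranty-policy": "Electronics carry a one-year limited warranty.",
}

def tokenize(text):
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(query, kb, k=1):
    """Steps 2-3: rank documents by word overlap with the query and keep the top k matches."""
    q = tokenize(query)
    ranked = sorted(kb.items(), key=lambda item: len(q & tokenize(item[1])), reverse=True)
    return [(doc_id, text) for doc_id, text in ranked[:k] if q & tokenize(text)]

def build_rag_prompt(query, passages):
    """Step 4: place the retrieved passages in front of the model as context."""
    context = "\n".join(f"[{doc_id}] {text}" for doc_id, text in passages)
    return (
        "Answer using only the context below and cite document IDs in brackets. "
        "If the context does not answer the question, say you do not know.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )

query = "Can items be returned after delivery?"
passages = retrieve(query, KNOWLEDGE_BASE)
prompt = build_rag_prompt(query, passages)
# Step 5 would send `prompt` to a language model; that call is omitted here.
print(prompt)
```

If nothing relevant is retrieved, `retrieve` returns an empty list and the prompt tells the model to say it does not know, which is the behavior you want instead of a guess.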

5.3 Human-in-the-Loop Systems

For critical applications, the most reliable approach is human-in-the-loop:

- AI generates draft outputs

- Humans review, verify, and refine

- Human corrections are captured and used to improve prompts, knowledge bases, or models over time

This combines the speed of AI with the judgment and domain expertise of humans.
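The three-step loop above can be sketched as a small review queue. The class names and fields are hypothetical; the point is the shape of the workflow: AI drafts wait in a queue, nothing is released without a human decision, and every correction is recorded.

```python
from dataclasses import dataclass, field

@dataclass
class Draft:
    text: str                # what the AI generated
    status: str = "pending"  # pending, approved, or corrected
    final_text: str = ""     # what the human signed off on

@dataclass
class ReviewQueue:
    drafts: dict = field(default_factory=dict)
    corrections: list = field(default_factory=list)  # (ai_text, human_text) pairs

    def submit(self, draft_id, ai_text):
        """Step 1: the AI output enters the queue as a draft; nothing is released yet."""
        self.drafts[draft_id] = Draft(ai_text)

    def review(self, draft_id, corrected_text=None):
        """Step 2: a human approves the draft as-is or supplies a corrected version."""
        draft = self.drafts[draft_id]
        if corrected_text is None or corrected_text == draft.text:
            draft.status, draft.final_text = "approved", draft.text
        else:
            draft.status, draft.final_text = "corrected", corrected_text
            # Step 3: keep the correction so it can inform later updates.
            self.corrections.append((draft.text, corrected_text))
        return draft.final_text

    def released(self):
        """Only reviewed drafts are ever released."""
        return [d.final_text for d in self.drafts.values() if d.status != "pending"]

queue = ReviewQueue()
queue.submit("d1", "Refunds are processed within 14 days.")
queue.submit("d2", "Standard shipping takes 3 to 5 business days.")
queue.submit("d3", "Gift cards can be exchanged for cash.")
queue.review("d1", "Refunds are processed within 5 to 7 business days.")
queue.review("d2")
print(queue.released())
print(queue.corrections)
```

In this sketch the recorded corrections are raw material: someone still decides whether each one should update a prompt, a knowledge-base document, or a test set.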

5.4 Prompt Engineering Best Practices

When using AI tools directly, careful prompting reduces hallucination risk:

| Do | Don’t |
|---|---|
| Ask for sources and citations | Accept unsupported claims |
| Specify “if uncertain, say so” | Assume the AI knows when it is uncertain |
| Provide context and constraints | Ask vague, open-ended questions |
| Request step-by-step reasoning | Ask for final answers without reasoning |
| Verify critical information | Trust AI outputs without verification |

Section 6: How MHTECHIN Helps Organizations Address Hallucinations

Deploying AI responsibly means anticipating and mitigating hallucinations. MHTECHIN helps organizations build AI systems that are accurate, trustworthy, and safe.

6.1 For AI Strategy and Governance

MHTECHIN helps organizations:

- Develop AI governance frameworks. Policies for when and how AI can be used, with clear guidelines for verification.

- Assess risk tolerance. Different applications have different tolerance for hallucinations. A creative brainstorming tool can tolerate more error than a medical documentation system.

- Establish testing protocols. Define how AI systems are tested for hallucinations before they are deployed.
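One way to make a testing protocol concrete is a small evaluation set run before every deployment. The sketch below is a hypothetical harness: the questions, expected answers, abstention phrases, and the stubbed `stub_ask` function are placeholders for your own system. It includes an unanswerable question on purpose, because a system that invents an answer where it should decline is hallucinating.

```python
# Hypothetical test set. An expected answer of None means the correct behavior is to decline.
TEST_CASES = [
    ("What is the return window?", "30 days"),
    ("How long does standard shipping take?", "3 to 5 business days"),
    ("Who is the current store manager in Paris?", None),
]

# Assumed phrases that count as the system declining to answer.
ABSTAIN_PHRASES = ("i don't know", "i do not know", "not in the provided")

def is_abstention(answer):
    return any(p in answer.lower() for p in ABSTAIN_PHRASES)

def evaluate(ask, cases):
    """Run every case through `ask` and tally correct, wrong, and fabricated answers."""
    results = {"correct": 0, "wrong": 0, "fabricated": 0}
    for question, expected in cases:
        answer = ask(question)
        if expected is None:
            # Any substantive answer to an unanswerable question is a fabrication.
            results["correct" if is_abstention(answer) else "fabricated"] += 1
        elif expected.lower() in answer.lower():
            results["correct"] += 1
        else:
            results["wrong"] += 1
    return results

def stub_ask(question):
    """Stand-in for the real system under test."""
    canned = {
        "What is the return window?": "Items can be returned within 30 days.",
        "How long does standard shipping take?": "It takes 2 business days.",
    }
    return canned.get(question, "The store manager in Paris is Jane Doe.")

print(evaluate(stub_ask, TEST_CASES))
```

Tracking the fabricated count across releases gives a simple regression signal; substring matching is crude, so real test sets usually need human or more careful automated grading.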

6.2 For Technical Implementation

MHTECHIN builds systems that minimize hallucination risk:

- Retrieval-Augmented Generation (RAG). Ground AI responses in your trusted knowledge base—documents, databases, verified content.

- Fine-tuning. Adapt models to your domain with high-quality, verified data.

- Human-in-the-loop workflows. Design systems where AI outputs are reviewed by experts, with feedback loops for continuous improvement.

- Monitoring and logging. Track AI outputs to identify patterns of hallucination and improve over time.

6.3 For Training and Literacy

MHTECHIN trains teams to work effectively with AI:

- Understanding limitations. What are hallucinations? Why do they happen?

- Verification skills. How to spot potential hallucinations. How to verify facts.

- Prompt engineering. How to craft prompts that reduce hallucination risk.

- Workflow design. How to integrate AI into processes with appropriate oversight.

6.4 The MHTECHIN Approach

MHTECHIN’s approach to hallucinations is grounded in reality: they cannot be eliminated entirely, but they can be managed. The team:

- Understands your use case. Different applications have different requirements.

- Selects the right architecture. RAG, fine-tuning, or hybrid approaches.

- Implements verification mechanisms. Source citation, human review, cross-checking.

- Monitors and improves. Continuous learning from feedback.

For organizations deploying AI, MHTECHIN provides the expertise to build systems that are both powerful and trustworthy.

Section 7: Frequently Asked Questions About AI Hallucinations

7.1 Q: What are AI hallucinations in simple terms?

A: AI hallucinations occur when an AI system generates information that is confident, plausible-sounding, and completely false. The AI is not lying—it does not know it is wrong. It is simply generating text based on patterns, and sometimes those patterns produce fabrications.

7.2 Q: Why do AI hallucinations happen?

A: AI hallucinates because it does not understand truth. Language models are trained to predict the next word, not to verify facts. They generate plausible text based on patterns, but they have no internal mechanism to check accuracy. When they lack information, they fill gaps with statistically likely—but often wrong—completions.

7.3 Q: Can AI hallucinations be eliminated completely?

A: No. Hallucinations are inherent to how large language models work. They can be reduced—significantly—through techniques like retrieval-augmented generation (RAG), fine-tuning, and human review. But they cannot be eliminated entirely. Responsible AI use requires understanding this limitation.

7.4 Q: How can I tell if an AI is hallucinating?

A: Look for overly specific details without sources, claims that seem plausible but you have never heard before, internal inconsistencies, and high confidence without evidence. Always verify critical information against authoritative sources.

7.5 Q: Are hallucinations the same as bias?

A: No. Bias occurs when AI reflects prejudices or stereotypes present in its training data. Hallucinations are fabrications that may or may not be biased. Both are problems, but they have different causes and require different mitigation strategies.

7.6 Q: Do all AI models hallucinate?

A: Large language models (like ChatGPT, Gemini, Claude) are most prone to hallucinations because they are designed to generate text freely. Predictive AI models (like fraud detection or churn prediction) do not hallucinate in the same way—they produce scores or probabilities, not free-form text. However, they can still make errors.

7.7 Q: What is retrieval-augmented generation (RAG)?

A: RAG is a technique that reduces hallucinations by grounding AI responses in a trusted knowledge base. The system retrieves relevant information from verified sources before generating, and the model generates based on that retrieved content. This significantly improves accuracy.

7.8 Q: Can prompt engineering reduce hallucinations?

A: Yes. Careful prompting—asking for sources, requesting step-by-step reasoning, specifying “if uncertain, say so”—can reduce hallucination risk. However, prompt engineering alone does not eliminate hallucinations. It is one tool among many.

7.9 Q: Are hallucinations a safety risk?

A: In high-stakes applications—healthcare, law, finance, safety-critical systems—hallucinations can pose serious risks. A hallucinated medical fact, legal citation, or safety instruction could have real-world consequences. This is why human verification and careful system design are essential.

7.10 Q: How should my organization manage hallucination risk?

A: Start with a clear understanding of your risk tolerance. For low-stakes applications (brainstorming, draft content), hallucinations may be acceptable with verification. For high-stakes applications, use retrieval-augmented generation, human-in-the-loop review, and rigorous testing. MHTECHIN can help you assess risk and implement appropriate safeguards.

Section 8: Conclusion—Living with Hallucinations

AI hallucinations are not a bug to be fixed. They are a feature of how large language models work. These systems are designed to generate plausible text—not to be truth engines. They will continue to produce confident falsehoods as long as they are asked to generate without grounding in verified sources.

The responsible path forward is not to wait for hallucination-free AI. It is to understand the limitations, implement safeguards, and design workflows that combine AI speed with human judgment.

For individuals using AI tools: treat outputs as drafts. Verify critical information. Learn to spot potential hallucinations.

For organizations deploying AI: build systems grounded in trusted knowledge. Use retrieval-augmented generation. Implement human review for critical outputs. Monitor and improve continuously.

AI is a powerful tool—but it is not infallible. Understanding its limitations, including hallucinations, is essential for using it effectively and responsibly.

Ready to deploy AI that you can trust? Explore MHTECHIN’s AI advisory and implementation services at www.mhtechin.com. From RAG architectures to governance frameworks, our team helps you build AI systems that are both powerful and reliable.

This guide is brought to you by MHTECHIN—helping organizations deploy AI responsibly, with strategies to detect, mitigate, and prevent hallucinations. For personalized guidance on trustworthy AI implementation, reach out to the MHTECHIN team today.
